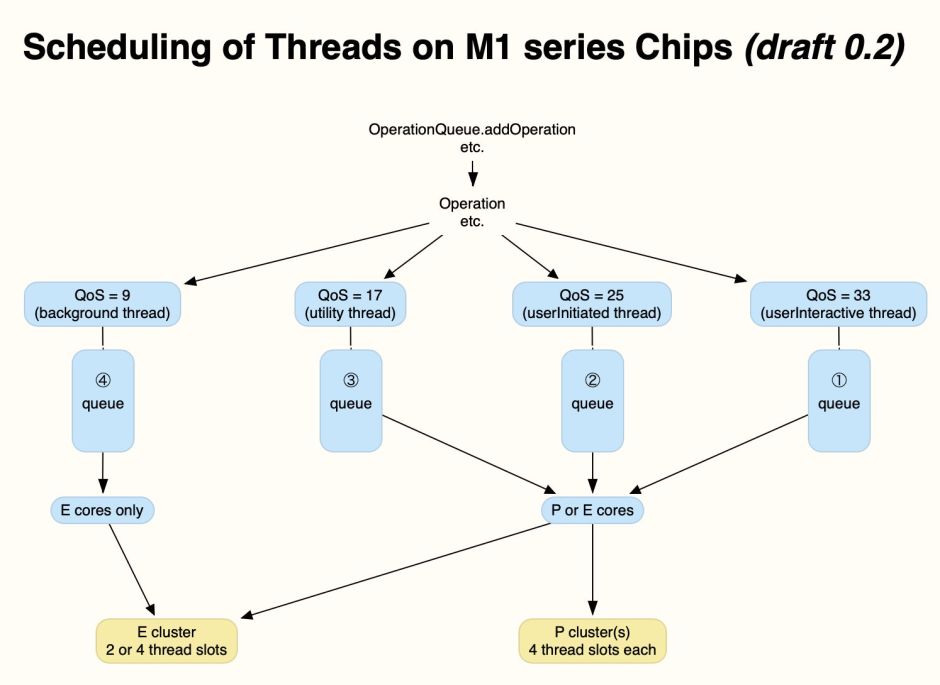

So far in this series, I’ve explained how the allocation of an app’s threads to different core types determines performance and more, how that’s controlled by setting the Quality of Service (QoS), and how macOS provides only limited means for the user to alter that. That leads to the best solution: apps and services that provide their own controls for the user, which is the subject of this article.

Basics

There are many different routes used to create executable threads in macOS. One basic method is to create an OperationQueue:

let myQueue = OperationQueue()

for which you can set two important properties:

- maxConcurrentOperationCount, the maximum number of queued Operations (threads) that can run at the same time;

- qualityOfService, the QoS for Operations (threads) added to that queue.

It’s then just a matter of adding Operations (threads) to that OperationQueue and letting macOS allocate them to cores and execute them:

myQueue.addOperation {
    // … code
}

These queues might correspond to those shown in the last article.
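To put those pieces together, here’s a minimal runnable sketch of an OperationQueue that runs eight CPU-bound operations at userInitiated QoS, with at most four running at once. The ResultStore class and the arithmetic inside each operation are hypothetical stand-ins for an app’s real data and code, and waitUntilAllOperationsAreFinished() is used only so that this command-line example doesn’t exit early; it shouldn’t be called on an app’s main thread.

```swift
import Foundation

// Hypothetical thread-safe store for results written by concurrent operations.
final class ResultStore: @unchecked Sendable {
    private let lock = NSLock()
    private var values: [Int]

    init(count: Int) { values = Array(repeating: 0, count: count) }

    func set(_ index: Int, to value: Int) {
        lock.lock()
        values[index] = value
        lock.unlock()
    }

    var snapshot: [Int] {
        lock.lock()
        defer { lock.unlock() }
        return values
    }
}

let myQueue = OperationQueue()
myQueue.maxConcurrentOperationCount = 4      // at most 4 operations run at once
myQueue.qualityOfService = .userInitiated    // a higher QoS, so P cores on Apple silicon

let store = ResultStore(count: 8)
for i in 0..<8 {
    myQueue.addOperation {
        // Stand-in CPU-bound work: sum the integers 0 through 99,999, then add i
        var total = 0
        for n in 0..<100_000 { total += n }
        store.set(i, to: total + i)
    }
}

// Block until every queued operation has completed (acceptable in a command-line tool)
myQueue.waitUntilAllOperationsAreFinished()
print(store.snapshot)
```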

Background threads

To run the threads from that queue on the E cores, set the QoS for the OperationQueue to QualityOfService.background. Sometimes it’s simpler to use a numerical value, in this case

QualityOfService(rawValue: 9)!

which works more easily with values set by slider controls; as that initialiser is failable, its result has to be unwrapped. For my example, using the named value, that might be

myQueue.qualityOfService = QualityOfService.background
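As a slider usually delivers a continuous number, one way to use those raw values is to snap the slider’s position to the nearest defined QoS before creating the QualityOfService. This is only a sketch: qosFromSlider is a hypothetical name, and the slider range of 9 to 33 is an assumption. The four raw values are those of background (9), utility (17), userInitiated (25) and userInteractive (33).

```swift
import Foundation

// Hypothetical helper: snap a slider position (assumed to run from 9 to 33)
// to the nearest defined QoS raw value, then create the QualityOfService from it.
func qosFromSlider(_ position: Double) -> QualityOfService {
    // background, utility, userInitiated, userInteractive
    let rawValues = [9, 17, 25, 33]
    // Distance from the slider position to a given raw value
    func distance(_ raw: Int) -> Double { abs(Double(raw) - position) }
    let nearest = rawValues.min { distance($0) < distance($1) } ?? 9
    // Safe to unwrap: nearest is always one of the defined raw values
    return QualityOfService(rawValue: nearest)!
}

let myQueue = OperationQueue()
myQueue.qualityOfService = qosFromSlider(9.0)   // QualityOfService.background
```

Snapping to defined values, rather than passing arbitrary slider numbers to the initialiser, ensures the queue only ever receives one of the named QoS.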

Deciding the maximum number of concurrent threads is more complicated. In some circumstances, there can only be a single thread, in which case

myQueue.maxConcurrentOperationCount = 1

On M1 Pro and Max chips, this has the disadvantage that both E cores may then run at around 1000 MHz, about half their maximum frequency, roughly doubling execution time. Setting the maximum number of threads to at least 2 should ensure that they run at maximum frequency on those chips, although not on the original M1.

My preference for multithreading is therefore to set the maximum number of threads to 2.
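Combining those two settings, a queue intended to run its threads on the E cores might be set up as in this sketch. The function name makeBackgroundQueue and the queue’s name string are hypothetical; naming a queue is optional, but it helps identify it when debugging.

```swift
import Foundation

// Hypothetical factory for a queue whose operations should run on the E cores.
func makeBackgroundQueue() -> OperationQueue {
    let queue = OperationQueue()
    queue.name = "background work"
    queue.qualityOfService = .background     // QoS 9: E cores on Apple silicon
    queue.maxConcurrentOperationCount = 2    // at least 2, so M1 Pro/Max E cores should run at full frequency
    return queue
}

let bgQueue = makeBackgroundQueue()
print(bgQueue.maxConcurrentOperationCount)   // 2
```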

Performance threads

To run the threads from a queue on the P cores, set the QoS for the OperationQueue to any of the three higher values. In practice, the differences between those three are more subtle than that between QualityOfService.background and any of them, and are unlikely to be apparent unless there’s contention between threads running at higher QoS. If you’re also supporting an E-core-only option, then the two most useful QoS are likely to be

- QualityOfService.userInitiated, with a rawValue of 25;

- QualityOfService.userInteractive, with a rawValue of 33.

App main threads are normally run at QualityOfService.userInteractive.

If there can be more than a single thread, one useful strategy is to set the maximum number of threads to a multiple of four, the number of P cores in a cluster. When the P cores are largely idle, that should allow macOS to allocate threads to one cluster of P cores at a time, leaving any other P core clusters (on M1 Pro/Max/Ultra chips) idle. For good performance, that should be

myQueue.maxConcurrentOperationCount = 4

to occupy all four P cores in an original M1, the first P cluster in M1 Pro/Max, or the first of the four P clusters in an M1 Ultra.

For the very best performance, the maximum thread count should be set to the total number of P cores, so it will vary from 4 for the original M1 to 16 for the M1 Ultra. In many cases, that could be simplified to setting:

myQueue.maxConcurrentOperationCount = 8

although that’s likely to spill over to the E cores in the original M1.
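Those two choices can be expressed as a small helper. This is a hypothetical sketch: it takes the P core count as a parameter rather than detecting it, assumes four P cores per cluster as in the M1 family described here, and returns either one thread per P core (for the very best performance) or just enough threads to fill one P cluster.

```swift
// Hypothetical helper: choose a maximum thread count from the number of P cores.
// Assumes four P cores per cluster, as in the M1 family.
func performanceThreadCount(pCores: Int, maximumSpeed: Bool, clusterSize: Int = 4) -> Int {
    let cores = max(pCores, 1)
    // Maximum speed: one thread per P core. Otherwise: fill just one P cluster.
    return maximumSpeed ? cores : min(clusterSize, cores)
}

print(performanceThreadCount(pCores: 4, maximumSpeed: true))    // original M1: 4
print(performanceThreadCount(pCores: 8, maximumSpeed: true))    // M1 Pro/Max: 8
print(performanceThreadCount(pCores: 16, maximumSpeed: true))   // M1 Ultra: 16
print(performanceThreadCount(pCores: 16, maximumSpeed: false))  // one cluster: 4
```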

Checking core count

The optimal number of threads depends on the number of each type of core available. As far as I can see, the most convenient way to discover those is using

sysctl hw.perflevel0.physicalcpu

for P cores, and

sysctl hw.perflevel1.physicalcpu

for E cores.

There may be more elegant ways in the API to achieve that.
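From within an app, the same values can be read without running the sysctl command, by calling the C library’s sysctlbyname() function with the same names. This sketch first asks for the value’s size, then reads it as a 32- or 64-bit integer; the helper name sysctlInt is hypothetical, and it returns nil if the key can’t be read, for example when it doesn’t exist on that Mac, or when the code isn’t running on macOS.

```swift
import Foundation

// Read an integer sysctl value by name; returns nil if it can't be read
// (for example, if the key doesn't exist on this Mac, or this isn't macOS).
func sysctlInt(_ name: String) -> Int? {
#if canImport(Darwin)
    var size = 0
    // A first call with a nil buffer only reports the value's size in bytes
    guard sysctlbyname(name, nil, &size, nil, 0) == 0 else { return nil }
    if size == MemoryLayout<Int32>.size {
        var value: Int32 = 0
        guard sysctlbyname(name, &value, &size, nil, 0) == 0 else { return nil }
        return Int(value)
    } else if size == MemoryLayout<Int64>.size {
        var value: Int64 = 0
        guard sysctlbyname(name, &value, &size, nil, 0) == 0 else { return nil }
        return Int(value)
    }
    return nil
#else
    return nil   // sysctlbyname() is only available on Darwin platforms
#endif
}

let pCores = sysctlInt("hw.perflevel0.physicalcpu")   // P cores
let eCores = sysctlInt("hw.perflevel1.physicalcpu")   // E cores
print("P cores:", pCores ?? 0, "E cores:", eCores ?? 0)
```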

Example user control

Technical apps intended for advanced users might give the user full control over the maximum number of threads and their QoS, but few users are likely to arrive at optimal combinations without experimentation. In most cases, it’s better to give users a simple choice between a small number of pre-selected combinations. For general use, where there’s no inherent limitation in the number of threads, I’d suggest:

- background, with 2 threads at QoS background (9);

- normal speed, with 4 threads at QoS userInitiated (25);

- maximum speed, with 8 threads at QoS userInteractive (33).
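Those three presets could be represented along these lines, giving the enum an Int raw value so that a stepped slider’s position or a saved preference maps straight onto a preset. The SpeedPreset enum, its case names and the apply(to:) method are hypothetical; the thread counts and QoS values are those listed above.

```swift
import Foundation

// Hypothetical presets matching the three suggested combinations.
enum SpeedPreset: Int, CaseIterable {
    case background = 0, normal, maximum

    var threadCount: Int {
        switch self {
        case .background: return 2
        case .normal: return 4
        case .maximum: return 8
        }
    }

    var qos: QualityOfService {
        switch self {
        case .background: return .background       // 9
        case .normal: return .userInitiated         // 25
        case .maximum: return .userInteractive      // 33
        }
    }

    // Set both the maximum thread count and the QoS of a queue
    func apply(to queue: OperationQueue) {
        queue.maxConcurrentOperationCount = threadCount
        queue.qualityOfService = qos
    }
}

let myQueue = OperationQueue()
let sliderPosition = 1                                  // e.g. from a slider with three stops
let preset = SpeedPreset(rawValue: sliderPosition) ?? .normal
preset.apply(to: myQueue)
print(myQueue.maxConcurrentOperationCount)              // 4
```

Keeping each thread count and QoS together in one preset means the user can’t select a combination that hasn’t been tested.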

I use a slider with those settings marked using emoji; in my case, it also provides manual settings (at the right), used as a tool for exploring core allocation and performance.

Where there’s little difference in performance between the second and third of those options, the first could be made available in a single checkbox to run the app’s threads in the background.

It’s also important to document this control properly: several apps currently have options to alter “CPU priority”, but don’t make clear whether that alters core allocation on Apple silicon chips, which leaves users confused.

What the user can do

If you use an app that performs time-consuming, largely CPU-bound tasks which you feel would benefit from better user control, suggest this to its developer as a new feature that would enhance it for everyone using that app on Apple silicon Macs. Given that multithreaded code should already be setting qualityOfService and maxConcurrentOperationCount, the added work of giving the user control is small. The benefits for those using higher-performance M-series chips could also be substantial.

This applies largely where CPU performance is one of the main limiting factors. Apps which are more dependent on graphics are normally more constrained by GPU performance, so require a different approach. Developers also need to be careful with more elaborate uses of concurrency, where giving the user control could have unintended consequences, such as delaying the completion of dependent threads. There’s also one class of app for which such controls are completely ineffective, the subject of the next article in this series.

Previous articles

Making the most of Apple silicon power: 1 M-series chips are different

Making the most of Apple silicon power: 2 Core capabilities

Making the most of Apple silicon power: 3 Controls

Making the most of Apple silicon power: 4 Frequency

Making the most of Apple silicon power: 5 User control

Does removing I/O throttling make backups faster?

MacSysAdmin 2022 video (watch)

MacSysAdmin 2022 Keynote slides (download)