The Quality of Service (QoS) assigned to each thread in an app or service is important in determining which CPU cores that thread will be run on. So what control does the user have?

The few apps that give the user direct control over QoS and thread processing can be excellent. In the next article I’ll look at how this can be done without baffling users, and what the rewards are in terms of energy saving against performance. For everything else, control comes from the command tool taskpolicy, and its equivalent in code, the function setpriority(). To understand how they work, I need to dig a bit deeper into the way that threads are managed in queues.

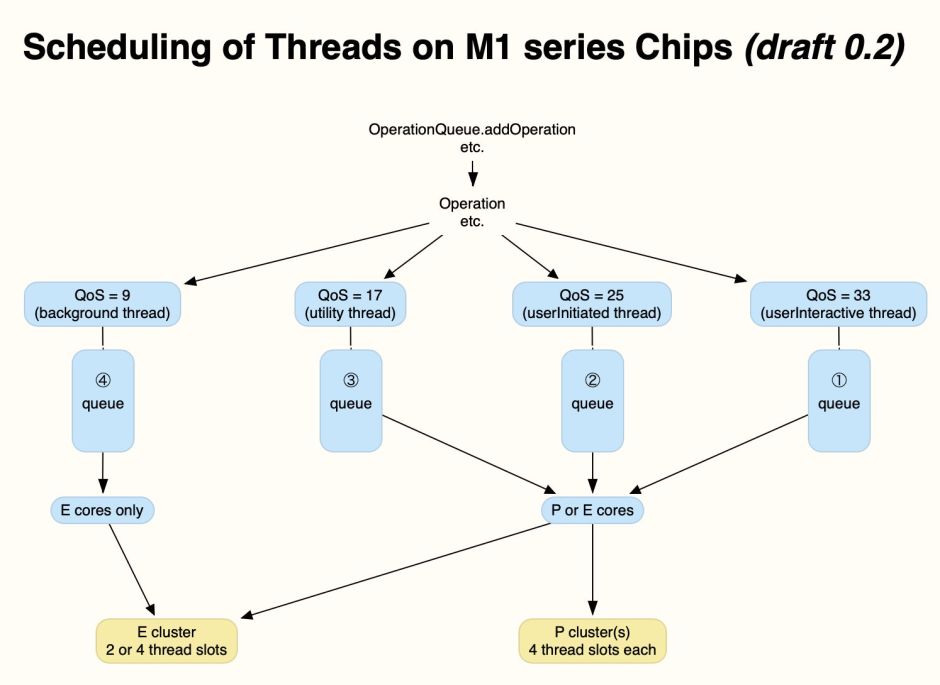

When an app creates threads for execution, they’re added to a queue of threads according to QoS, with one queue for each of the four main QoS values. macOS then dispatches threads from the front of each of those queues, allocating those in the QoS 9 queue to E cores only, and those in the other three queues to P cores when possible, or E cores when no P core is available. This is summarised in the diagram above.
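To make that allocation rule concrete, here is a minimal Python sketch of it. This is a toy model, not how macOS is implemented: the function names are hypothetical, the three higher QoS numbers (17, 25, 33) are the standard macOS values rather than anything stated here, and serving the higher QoS queues first is my assumption.

```python
from collections import deque

# The four main QoS values. 9 is the lowest (background); 17, 25 and 33 are
# the standard macOS values for the three higher levels (assumed here).
QOS_LEVELS = (33, 25, 17, 9)


def pick_core(qos, free_p, free_e):
    """Return 'P', 'E' or None for a thread taken from the front of a queue.

    QoS 9 is confined to E cores; higher QoS prefers a P core and falls
    back to an E core when no P core is free.
    """
    if qos == 9:
        return "E" if free_e > 0 else None
    if free_p > 0:
        return "P"
    return "E" if free_e > 0 else None


def dispatch(queues, free_p, free_e):
    """Take threads from the front of each queue until no core can be found.

    Assumption: queues are visited from highest QoS to lowest.
    """
    placed = []
    for qos in QOS_LEVELS:
        queue = queues[qos]
        while queue:
            core = pick_core(qos, free_p, free_e)
            if core is None:
                break  # no suitable core is free; the thread stays queued
            thread = queue.popleft()
            if core == "P":
                free_p -= 1
            else:
                free_e -= 1
            placed.append((thread, core))
    return placed


queues = {qos: deque() for qos in QOS_LEVELS}
queues[33].extend(["ui-1", "ui-2", "ui-3"])
queues[9].extend(["bg-1", "bg-2"])
print(dispatch(queues, free_p=2, free_e=2))
```

With two P and two E cores free, the third high QoS thread spills over to an E core, while the second background thread has to wait even if a P core later becomes idle.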

taskpolicy

In this context, taskpolicy offers two options:

taskpolicy -b -p PID

where PID is the ID number of the process, which demotes that process’s threads to run on E cores alone, and

taskpolicy -B -p PID

which promotes those threads to run on P and E cores.
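If you want to issue those two commands from a script, something like the following Python sketch should do. The helper names taskpolicy_args and apply_taskpolicy are hypothetical; only the -b, -B and -p options described above are used, and the command is only run when the script is on macOS.

```python
import subprocess
import sys


def taskpolicy_args(pid, demote):
    """Build the argument list for `taskpolicy -b -p PID` or `taskpolicy -B -p PID`."""
    if isinstance(pid, bool) or not isinstance(pid, int) or pid <= 0:
        raise ValueError("pid must be a positive integer")
    flag = "-b" if demote else "-B"
    return ["taskpolicy", flag, "-p", str(pid)]


def apply_taskpolicy(pid, demote):
    """Run taskpolicy against a running process and return its exit status."""
    if sys.platform != "darwin":
        raise RuntimeError("taskpolicy is only available on macOS")
    return subprocess.run(taskpolicy_args(pid, demote), check=False).returncode


# Show the command that would demote process 1234 (an example PID).
print(taskpolicy_args(1234, demote=True))
```

Passing the arguments as a list avoids any shell quoting, and checking the PID first stops a typo from reaching the command.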

Testing these options on apps running threads at different QoS settings shows that what actually happens in Monterey is:

- when demoted using -b, all threads are run on E cores alone;

- when demoted with -b, then promoted with -B, threads at higher QoS that were demoted are returned to running on P and E cores, but those running at the lowest QoS remain running on E cores alone;

- changes using -b and -B don’t alter queues, just change core allocation for the three higher QoS queues.
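Those three observations can be captured in a small model. The Python sketch below only restates the behaviour listed above; the class ProcessModel and its methods are hypothetical names, and nothing here calls macOS.

```python
BACKGROUND_QOS = 9  # the lowest QoS, confined to E cores


class ProcessModel:
    """Toy model of how -b and -B change core allocation for one process."""

    def __init__(self):
        self.demoted = False

    def taskpolicy(self, flag):
        """Record the effect of `taskpolicy -b` or `taskpolicy -B`."""
        if flag == "-b":
            self.demoted = True
        elif flag == "-B":
            self.demoted = False
        else:
            raise ValueError("expected -b or -B")

    def core_types(self, qos):
        """Return the core types a thread of this QoS may run on.

        The thread's QoS, and so its queue, never changes; only the
        allocation for the three higher QoS queues does.
        """
        if self.demoted or qos == BACKGROUND_QOS:
            return {"E"}
        return {"P", "E"}


proc = ProcessModel()
proc.taskpolicy("-b")
print(proc.core_types(33))  # high QoS thread while demoted
proc.taskpolicy("-B")
print(proc.core_types(33), proc.core_types(9))
```

Note that there is no path through core_types() that returns P cores for QoS 9, whatever the flag.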

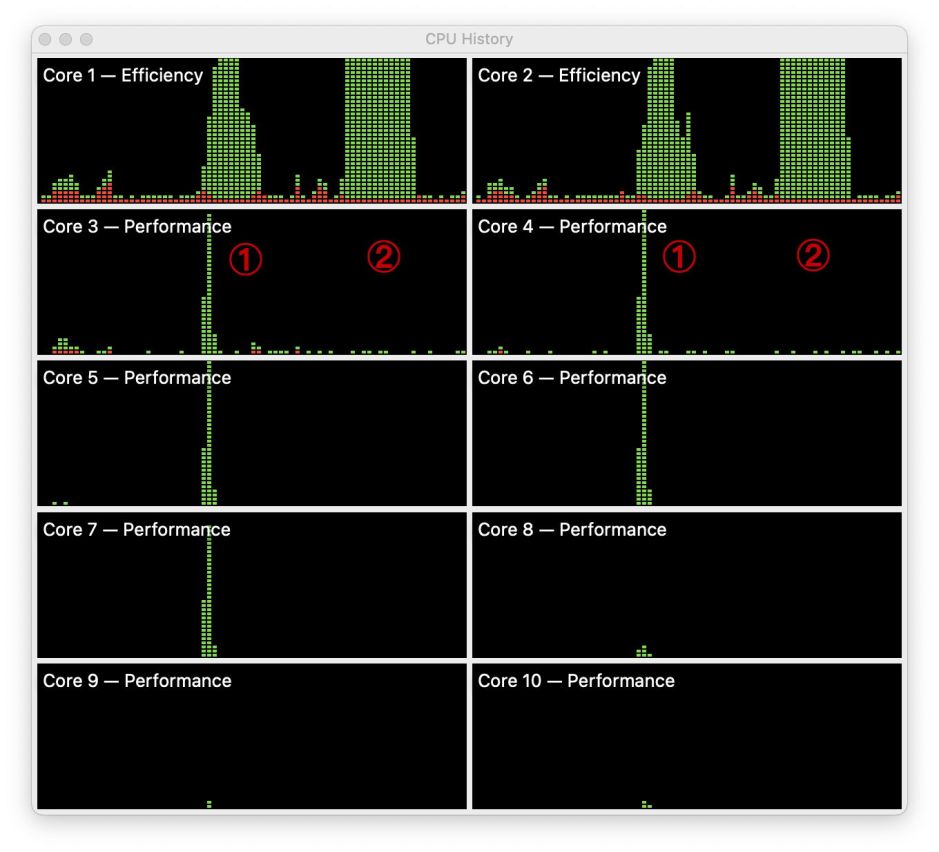

These effects of taskpolicy are shown in a short test on an M1 Pro chip, seen above in Activity Monitor’s CPU History window, which reads from the left in columns.

In the first test, marked by the figure 1, the app runs a series of threads at alternating maximum and minimum QoS, without the use of taskpolicy. Short peaks on the P cores reflect those threads run from the high QoS queue, and a prolonged peak on the two E cores at the top results from the odd-numbered threads running on those.

Shown at the figure 2 is the effect of running taskpolicy -b on the whole process (all its threads). This successfully demotes all threads, from both queues, to run on the E cores alone. What it doesn’t do, though, is alter the order of completion of the threads, which are still run in the same sequence as determined by their original QoS. Furthermore, running taskpolicy -B (not shown here) returns the high QoS threads to the P cores, but doesn’t affect low QoS threads, which are still run on the E cores.

In practice, this means you can use taskpolicy to run all the threads of a process on the E cores, as background threads, but you can’t run background threads on P cores: only threads already assigned a higher QoS can run there. The command thus functions as a brake, but not as an accelerator. So far, all attempts to discover a method of promoting background threads to run as if they had higher QoS have failed.

I know of one utility which currently uses this feature to give the user control over apps and services: St. Clair Software’s App Tamer, which has an option to “Run this app on the CPU’s efficiency cores” among its many other valuable tools.
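For those who would rather apply the same brake from code, this is a rough Python sketch using setpriority(). It rests on assumptions you should check: that the constants PRIO_DARWIN_PROCESS and PRIO_DARWIN_BG have the values I give them (taken from my reading of the macOS header sys/resource.h), and that setting the priority back to 0 releases the brake in the same way as taskpolicy -B. The function set_background is a hypothetical name.

```python
import os
import sys

# Assumed values from macOS <sys/resource.h>; verify against your own SDK.
PRIO_DARWIN_PROCESS = 4
PRIO_DARWIN_BG = 0x1000


def set_background(pid, enabled):
    """Demote (enabled=True) or restore (enabled=False) a whole process.

    A pid of 0 refers to the calling process. Raises OSError if the
    call is refused, for example for a process you don't own.
    """
    if sys.platform != "darwin":
        raise RuntimeError("PRIO_DARWIN_BG is only meaningful on macOS")
    priority = PRIO_DARWIN_BG if enabled else 0
    os.setpriority(PRIO_DARWIN_PROCESS, pid, priority)


if sys.platform == "darwin":
    set_background(0, True)   # this script's threads are now E core only
    set_background(0, False)  # higher QoS threads may use P cores again
```

As with the command tool, this can only hold a process back: it offers no way to lift threads that were created at the lowest QoS.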

I/O throttling

Not only can’t you promote threads set with a background QoS, but even if you could there’s another important control which could still limit their performance: I/O throttling. This is best known in Time Machine backups, which are run at background QoS with IOPOL_THROTTLE set.

IOPOL_THROTTLE is one of five different policies supported by macOS to determine I/O throughput, here access to storage. Although taskpolicy can run tasks with IOPOL_THROTTLE removed, it’s unable to modify that on background tasks like backupd which are already running. The only method known to be able to remove I/O throttling is to disable it globally, another feature offered in App Tamer.
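To see which of the five policies applies to your own process, a sketch along these lines may help. It calls getiopolicy_np() through ctypes, and assumes the numeric values shown, which are from my reading of the macOS header sys/resource.h and should be verified there. It can only inspect the process running the script, not another one such as backupd, and the function name is hypothetical.

```python
import ctypes
import sys

# Assumed values from macOS <sys/resource.h>; verify against your own SDK.
IOPOL_TYPE_DISK = 0
IOPOL_SCOPE_PROCESS = 0

# The five disk I/O policies, keyed by their assumed numeric values.
POLICY_NAMES = {
    1: "IOPOL_IMPORTANT",
    2: "IOPOL_PASSIVE",
    3: "IOPOL_THROTTLE",
    4: "IOPOL_UTILITY",
    5: "IOPOL_STANDARD",
}


def current_disk_policy_name():
    """Return the name of the calling process's disk I/O policy."""
    if sys.platform != "darwin":
        raise RuntimeError("getiopolicy_np is only available on macOS")
    libc = ctypes.CDLL(None, use_errno=True)
    value = libc.getiopolicy_np(IOPOL_TYPE_DISK, IOPOL_SCOPE_PROCESS)
    if value < 0:
        raise OSError(ctypes.get_errno(), "getiopolicy_np failed")
    # Fall back to the raw number for any value not in the table above.
    return POLICY_NAMES.get(value, f"unknown ({value})")


if sys.platform == "darwin":
    print(current_disk_policy_name())
```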

Global removal of I/O throttling could have unexpected consequences, and isn’t considered a wise choice for general use. The good news, though, is that I/O throttling doesn’t appear to be automatic for threads running with lowest QoS. But for those with it set, simply changing QoS or core allocation could only lead to limited improvement in performance.

By far the best solution to these problems is for apps and services to give the user control options, the subject of the next article.
