There’s a major difference between the two most popular versions of Apple’s M1 chips: the original has four E and four P cores, while the M1 Pro and Max have only two E cores and eight P cores. It follows that the latter should deliver twice the performance of the original design when running higher QoS threads on their P cores. It also implies that Macs with M1 Pro or Max chips run multiple threads at lowest QoS, for background tasks, at half the speed of the original M1 chip. That would be embarrassing if true: a basic MacBook Air or mini would complete tasks such as Time Machine backups and Spotlight indexing in half the time taken by an expensive MacBook Pro or Studio with an M1 Max.

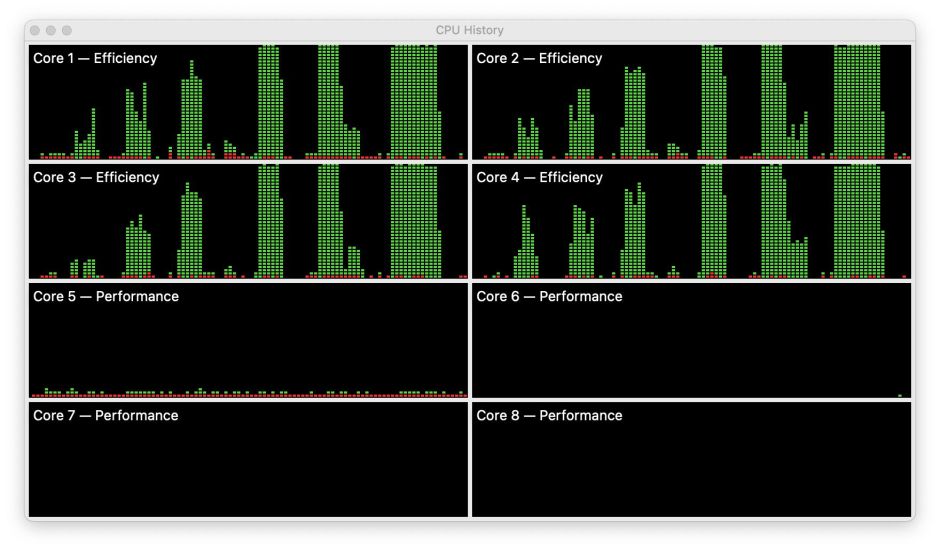

To understand how macOS addresses this, I look back at what happens when an original M1 chip’s E cores are loaded with threads running at lowest QoS.

From the left, these tests run 1, 2, 3, 4, 6 and 8 threads. Each completes in the same time until the number of threads exceeds the number of E cores. With more threads than cores, a test takes longer: only one thread runs on each core at a time, so additional threads are queued by GCD until the first batch of four threads has completed.
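
The batching described above can be sketched as a toy model. It assumes every thread takes the same time and that threads run strictly one per core, in batches; the function name `completion_time` and the unit time are purely illustrative, not measurements.

```python
import math

def completion_time(threads: int, cores: int = 4, time_per_thread: float = 1.0) -> float:
    """Time for `threads` identical threads to finish on `cores` cores.

    Assumption: one thread per core at a time, with the rest queued
    until a whole batch has completed.
    """
    if threads <= 0:
        return 0.0
    batches = math.ceil(threads / cores)
    return batches * time_per_thread

# The thread counts used in the tests on the original M1's four E cores.
for n in (1, 2, 3, 4, 6, 8):
    print(n, "threads:", completion_time(n), "time units")
```

In this model 1 to 4 threads all take one time unit, while 6 and 8 threads need a second batch and take two.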

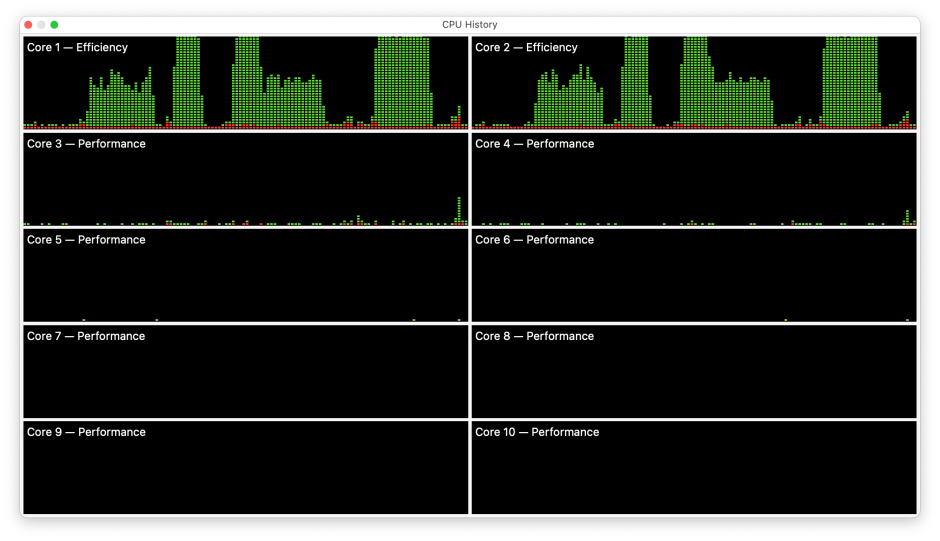

What happens on an M1 Pro or Max is quite different.

Here’s a similar sequence of 1-4 threads on an M1 Pro chip. Note how the width (duration) of the second test with 2 threads is obviously smaller than the first with a single thread. Looking at the time taken to complete those two threads, it’s about half that for a single thread, although the area shown for those two tests appears similar.

This doesn’t make sense, and highlights a major shortcoming in using Activity Monitor’s CPU History window to study how macOS uses the cores in Apple silicon chips. It’s only when you look at the frequency those E cores were running at that sense returns, and the explanation is revealed.

Run one thread on the two E cores in an M1 Pro or Max, and the cores run at a frequency of around 1000 MHz, half their maximum. Run two threads at the same time, and their frequency is boosted to their maximum of 2064 MHz.

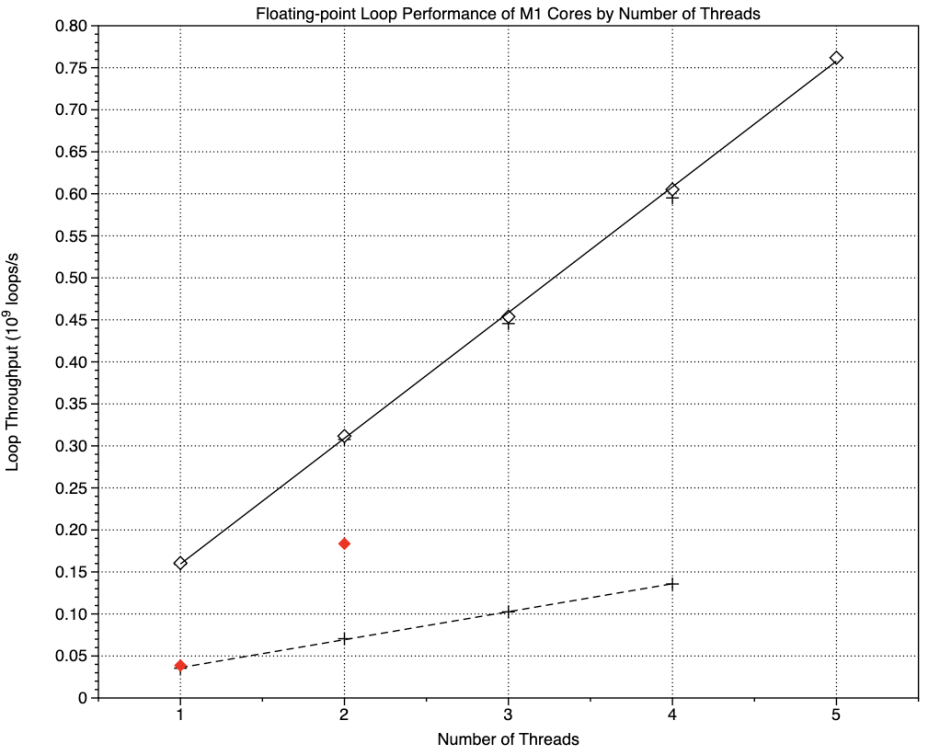

One way to look at this more precisely is to graph the speed of execution for different numbers of threads against the number of threads, or notional E cores as each thread is effectively allocated to a single core.

The upper solid line shows this relationship for P cores being used at maximum QoS. Each core effectively adds 0.15 billion loops/second to total throughput, whether on an original M1 (+ points) or M1 Pro (♢ unfilled diamonds). Although not shown here, on an M1 Pro that line continues up to its total of eight P cores.

The broken line below shows the same relationship for E cores in an original M1, this time each adding 0.033 billion loops/second, 22% of the throughput of each P core. Shown in red, though, are the equivalent points for the two E cores in an M1 Pro (or Max): with one core, throughput is the same as an original M1, but with both cores active, throughput more than doubles that of two cores on the original chip. That’s macOS controlling the E core frequency to ensure that M1 Pro and Max chips don’t perform any slower than those in the original M1, and in fact they are here slightly faster than all four E cores of the original M1 running together at 1000 MHz.
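
The arithmetic behind those figures can be checked with a rough sketch. The per-core rates and frequencies are taken from the text; the idea that E core throughput scales linearly with frequency is my assumption for illustration, not something measured here, and `e_throughput` is a hypothetical helper.

```python
E_CORE_RATE = 0.033  # billion loops/s per E core at about 1000 MHz (from the text)
P_CORE_RATE = 0.15   # billion loops/s per P core (from the text)
E_LOW_MHZ = 1000.0   # approximate E core frequency at lowest QoS on the original M1
E_MAX_MHZ = 2064.0   # maximum E core frequency

def e_throughput(cores: int, freq_mhz: float) -> float:
    """Estimated E core throughput in billion loops/s.

    ASSUMPTION: throughput scales linearly with core frequency.
    """
    return cores * E_CORE_RATE * freq_mhz / E_LOW_MHZ

ratio = E_CORE_RATE / P_CORE_RATE      # E core relative to P core, about 0.22
m1_two = e_throughput(2, E_LOW_MHZ)    # two E cores on the original M1
m1_four = e_throughput(4, E_LOW_MHZ)   # all four E cores on the original M1
pro_two = e_throughput(2, E_MAX_MHZ)   # both E cores on an M1 Pro at maximum

print(f"E/P ratio: {ratio:.0%}")
print(f"M1, 2 E cores:     {m1_two:.3f}")
print(f"M1, 4 E cores:     {m1_four:.3f}")
print(f"M1 Pro, 2 E cores: {pro_two:.3f}")
```

Under that linear assumption, two E cores at 2064 MHz come out a little ahead of four at 1000 MHz, which is consistent with the red points in the chart.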

This clearly illustrates the danger of believing Activity Monitor’s figures for CPU % and its CPU History window: while they appear to show core allocation faithfully, because they don’t take into account the frequency of cores in Apple silicon chips, they will mislead.
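
One way to picture the problem is to weight a CPU % figure by core frequency. This is a simplified sketch: Activity Monitor doesn't report frequency, so the frequency has to be obtained separately, and treating useful work as proportional to frequency is my assumption. The helper `frequency_weighted_load` is hypothetical.

```python
E_MAX_MHZ = 2064.0  # maximum E core frequency (from the text)

def frequency_weighted_load(cpu_percent: float, freq_mhz: float,
                            max_mhz: float = E_MAX_MHZ) -> float:
    """Scale a CPU % figure by the fraction of maximum frequency in use.

    ASSUMPTION: work done is proportional to core frequency.
    """
    return cpu_percent * freq_mhz / max_mhz

# A core shown as 100% busy at about 1000 MHz is doing roughly half the
# work of the same core shown as 100% busy at 2064 MHz.
print(round(frequency_weighted_load(100, 1000), 1))
print(round(frequency_weighted_load(100, 2064), 1))
```

Both cases look identical in the CPU History window, which is why the area under its bars can't be read as work done.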

Putting these observations together shows that macOS has different strategies for managing E cores in different chips:

- On the original M1, all four E cores are run at a frequency of about 1000 MHz when running threads of lowest QoS, further enhancing their economy of power. However, when those same cores are used to run threads of higher QoS, they will normally run at their maximum frequency, so sacrificing energy efficiency for better performance.

- E cores in M1 Pro/Max chips are run at maximum frequency when they’re loaded with two or more minimum QoS threads, giving up some energy efficiency to deliver performance at least as good as the E cores in the original M1. When higher QoS threads spill over onto E cores in an M1 Pro/Max, they’re run at maximum frequency to deliver better performance, closer to that of P cores.

- macOS not only allocates threads to cores, but also controls their frequency according to the number of E cores available and thread QoS.
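
The strategies in this list can be summarised as a small decision function. This is only a model of the behaviour observed in these tests, not how macOS implements it: the function name, its parameters and the fixed 1000 MHz figure are illustrative assumptions, and real frequencies vary.

```python
E_LOW_MHZ = 1000  # approximate frequency seen at lowest QoS
E_MAX_MHZ = 2064  # maximum E core frequency

def e_core_frequency_mhz(chip: str, lowest_qos: bool, threads: int) -> int:
    """Approximate E core frequency for a given chip, QoS and thread count.

    Hypothetical model of the observations above, not a macOS API.
    """
    if chip not in ("M1", "M1 Pro", "M1 Max"):
        raise ValueError(f"unknown chip: {chip}")
    if threads < 1:
        raise ValueError("threads must be at least 1")
    if not lowest_qos:
        # Higher QoS threads on E cores normally run at maximum frequency.
        return E_MAX_MHZ
    if chip == "M1":
        # Original M1: all four E cores stay at about 1000 MHz for lowest QoS.
        return E_LOW_MHZ
    # M1 Pro/Max: boosted to maximum once both E cores are loaded.
    return E_MAX_MHZ if threads >= 2 else E_LOW_MHZ

print(e_core_frequency_mhz("M1", lowest_qos=True, threads=4))
print(e_core_frequency_mhz("M1 Pro", lowest_qos=True, threads=1))
print(e_core_frequency_mhz("M1 Pro", lowest_qos=True, threads=2))
```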

By this stage I hope that it’s clear how important QoS is in determining how macOS allocates threads to different cores, and sets their frequency. But if QoS is hard-coded into apps and services, then how can the user have any influence over the controls in macOS? That’s the starting point for the next article.

Previous articles

Making the most of Apple silicon power: 1 M-series chips are different

Making the most of Apple silicon power: 2 Core capabilities

Making the most of Apple silicon power: 3 Controls

MacSysAdmin 2022 video (watch)

MacSysAdmin 2022 Keynote slides (download)