Having looked at the differences in Apple silicon chips and their two types of CPU core, this article explains how macOS allocates threads to different cores.

There are three methods of core allocation currently in use in chips with multiple types of core:

- core allocation can be left to the apps and services themselves, as in Linux running natively on Apple silicon;

- a hardware Thread Director can allocate threads to cores according to a set of rules, as in Alder Lake chips;

- the operating system can allocate threads to cores according to their declared priorities, as in macOS on Apple silicon.

Dispatch

In macOS, tasks that can be run in parallel are divided into blocks of code termed threads. Each thread is handed to Dispatch, also known as Grand Central Dispatch (GCD), which manages queues of threads of different priorities and dispatches them to different cores.

Let’s say a program needs to convert four million values, each independent of the others. Its developer decides to divide that up into four different threads, each doing a quarter of the overall task. The code then creates those four threads and hands them on for dispatch at the same priority. GCD then puts the four threads into the same queue, and they’re allocated to the next available CPU cores for execution. If there happen to be four free cores, then they’ll each be loaded onto a core in rapid succession and executed immediately.
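That scenario can be sketched in Swift using GCD's global concurrent queue and a dispatch group. This is only a minimal illustration: the function name `convertInChunks`, the helper class `ChunkStore`, the doubling "conversion" and the choice of userInitiated QoS are all assumptions made for the example, not anything prescribed by macOS.

```swift
import Foundation

/// Hypothetical helper: a lock-protected store so each block can deposit its results safely.
final class ChunkStore: @unchecked Sendable {
    private let lock = NSLock()
    private var parts: [[Double]]
    init(chunkCount: Int) { parts = Array(repeating: [], count: chunkCount) }
    func store(_ part: [Double], at index: Int) {
        lock.lock(); defer { lock.unlock() }
        parts[index] = part
    }
    func joined() -> [Double] {
        lock.lock(); defer { lock.unlock() }
        return parts.flatMap { $0 }
    }
}

/// Splits `values` into `chunkCount` blocks and dispatches them all at the same QoS.
/// Doubling each value stands in for the real conversion.
func convertInChunks(_ values: [Double], chunkCount: Int = 4,
                     qos: DispatchQoS.QoSClass = .userInitiated) -> [Double] {
    let store = ChunkStore(chunkCount: chunkCount)
    let chunkSize = (values.count + chunkCount - 1) / chunkCount
    let queue = DispatchQueue.global(qos: qos)   // one queue, one QoS for every block
    let group = DispatchGroup()
    for chunk in 0..<chunkCount {
        let start = min(chunk * chunkSize, values.count)
        let end = min(start + chunkSize, values.count)
        queue.async(group: group) {
            // Each block converts its own quarter, independently of the others.
            store.store(values[start..<end].map { $0 * 2.0 }, at: chunk)
        }
    }
    group.wait()   // block until all four have finished
    return store.joined()
}

let input = (0..<4_000_000).map { Double($0) }
let output = convertInChunks(input)
print(output.count, output[3])
```

The code only states how many blocks there are and what QoS they share; which cores they run on, and when, is left entirely to GCD and macOS.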

When that app creates those threads, it has two fundamental choices to make: how many threads, and their priority. The latter is set by an assigned Quality of Service (QoS), chosen from the macOS standard list:

- QoS 9 (binary 001001), named background and intended for threads performing maintenance, which don’t need to be run with any higher priority.

- QoS 17 (binary 010001), utility, for tasks the user doesn’t track actively.

- QoS 25 (binary 011001), userInitiated, for tasks that the user needs to complete to be able to use the app.

- QoS 33 (binary 100001), userInteractive, for user-interactive tasks, such as handling events and the app’s interface.

There’s also a ‘default’ QoS of 21, between utility and userInitiated, and an unspecified value of 0.
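As a rough illustration, the four standard levels can be tabulated in Swift alongside the `DispatchQoS.QoSClass` cases an app would pass to GCD. The numeric values here are simply those listed above, hard-coded for the example rather than read from the system, and `sixBitBinary` is a hypothetical helper.

```swift
import Foundation

/// The four standard QoS levels, with the numeric values listed above (hard-coded).
let standardQoS: [(name: String, value: Int, qosClass: DispatchQoS.QoSClass)] = [
    ("background", 9, .background),
    ("utility", 17, .utility),
    ("userInitiated", 25, .userInitiated),
    ("userInteractive", 33, .userInteractive),
]

/// Formats a value as a six-digit binary string, matching the notation used above.
func sixBitBinary(_ value: Int) -> String {
    let bits = String(value, radix: 2)
    return String(repeating: "0", count: max(0, 6 - bits.count)) + bits
}

for entry in standardQoS {
    print("QoS \(entry.value) (binary \(sixBitBinary(entry.value))): \(entry.name)")
    // A global queue at this QoS is obtained like this; work sent to it inherits that QoS.
    _ = DispatchQueue.global(qos: entry.qosClass)
}
```

In practice an app rarely needs the numbers: it names the class, such as `.utility`, when it obtains a queue or creates a work item.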

Where all cores in a processor are identical, as in an Intel Mac, assigning QoS to threads determines the order in which they’re allocated to available cores, so that userInteractive (QoS 33) threads are completed first, and background (QoS 9) last. If there’s no competition for cores, because they’re largely free, the effect of QoS is relatively small.

QoS and core types

Where the chip contains two different core types, as in Apple silicon, QoS is also used to determine which types of core a thread can be allocated to. According to the rules currently used in macOS, threads with QoS between 17 and 33 are run preferentially on P cores, but when no P cores are available, they can be run on E cores. However, threads with QoS of 9, background, are confined to E cores and are never run on P cores, even if the P cores are all idle and the E cores are busy.
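Those rules can be written down as a small model in Swift. This is not a macOS API and not how the scheduler is implemented; `CoreType`, `eligibleCoreTypes` and `allocate` are hypothetical names, and treating the unspecified QoS like the higher levels is an assumption, as the rules above don't cover it.

```swift
import Foundation

enum CoreType { case performance, efficiency }

/// Core types a thread may use, in order of preference, under the rules described above.
func eligibleCoreTypes(for qos: DispatchQoS.QoSClass) -> [CoreType] {
    switch qos {
    case .background:
        return [.efficiency]                 // QoS 9: E cores only
    default:
        // QoS 17 to 33: P cores preferred, E cores as fallback.
        // Assumption: unspecified QoS is treated the same way.
        return [.performance, .efficiency]
    }
}

/// Picks a core type for one thread given the free cores, or nil if it has to wait.
func allocate(qos: DispatchQoS.QoSClass, freeP: Int, freeE: Int) -> CoreType? {
    for type in eligibleCoreTypes(for: qos) {
        switch type {
        case .performance where freeP > 0: return .performance
        case .efficiency where freeE > 0: return .efficiency
        default: continue
        }
    }
    return nil
}

// A background thread waits even though every P core is idle.
print(String(describing: allocate(qos: .background, freeP: 4, freeE: 0)))
// A high-QoS thread spills over to an E core when the P cores are full.
print(String(describing: allocate(qos: .userInteractive, freeP: 0, freeE: 2)))
```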

This is shown when tasks are run using an increasing number of threads with the highest QoS. This original M1 chip is here being subjected to a series of loads from increasing numbers of CPU-intensive threads. Its two clusters, E0 and P0, are distinguished by the blue boxes. With 1-4 threads at high QoS (from the left), the load is borne entirely by the P0 cluster, then with 5-8 threads the E0 cluster takes its share.

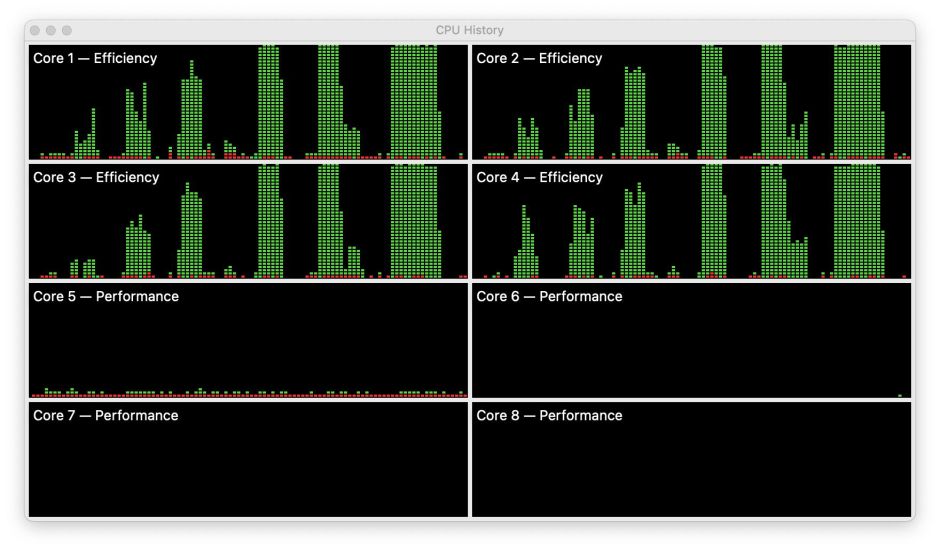

In this sequence of tests at lowest QoS on an M1 Mac mini, I increased the number of identical test threads from 1 at the left to 4, 6 and 8 at the right. Over the first four tests, the height of the bars increases until they reach 100% on each of the four E cores, but the width of each test (time taken) remains constant at around 5-6 squares until the number of threads exceeds the number of E cores. No matter how many threads an app runs at the lowest QoS, they’re confined to the E cores alone.

In practice, the great majority of threads run at minimum QoS on the E cores alone are the many services in macOS. The best time to see this is shortly after a Mac has started up, when there’s an intense scan by MRT (at least until recently with the arrival of its substitute), prolonged indexing for Spotlight, and a Time Machine backup.

On Intel Macs with their single core type, these may be sufficient to run the fans up, and some Macs become quite sluggish until they’re done. It’s exceptional for Apple silicon Mac users to even be aware of all this service activity unless they happen to open Activity Monitor with its CPU History window, where they’ll see the E cores working flat out. Because the P cores are almost unaffected, apps launch normally, and are as brisk and responsive as when little else is happening.

This highlights one triumph of Apple silicon chips in user psychology: longstanding problems that are notorious for bringing Intel Macs to their knees, like crashing mdworker processes, no longer have any adverse effects on user processes running primarily on the P cores.

This all depends on wise use of QoS. User apps that don’t yet allocate QoS to their threads can only be assigned default values, however inappropriate. Even worse, any tendency by third-party software to over-inflate the priority of its threads will work to the detriment of threads which do need high QoS, and affect the user.

Nor is the right QoS for a thread always the same. Taking a simple example of compressing files, there are times when we want that action to complete as quickly as possible, so would prefer a high QoS, and other times when we’re happy to wait if that keeps other apps running faster. As QoS settings are normally baked into an app’s code, there are times when the user might be grateful to be in control.
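One way an app could hand that choice to the user is to derive QoS from a setting rather than hard-coding it. The following is a minimal sketch only: `CompressionUrgency` and `qosClass(for:)` are hypothetical names, and the particular mapping to userInitiated and background is an assumption for illustration.

```swift
import Foundation

/// Hypothetical user-facing setting for a compression task.
enum CompressionUrgency { case asFastAsPossible, whenConvenient }

/// Maps the user's choice to a QoS class instead of baking one into the code.
func qosClass(for urgency: CompressionUrgency) -> DispatchQoS.QoSClass {
    switch urgency {
    case .asFastAsPossible: return .userInitiated   // eligible for P cores
    case .whenConvenient: return .background        // confined to E cores
    }
}

let group = DispatchGroup()
DispatchQueue.global(qos: qosClass(for: .whenConvenient)).async(group: group) {
    // The real compression work would go here.
    print("compressing at low priority")
}
group.wait()
```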

This is further complicated by the choice of how many threads to use when a task can be split across more than one. For example, on an M1 Pro or Max chip running with little other load, a task divided into eight threads and run on the P cores should complete quickest. Change the QoS to confine that to just the E cores, and there’s no benefit in running it in eight threads, as two will perform marginally better given that there are only two E cores available.
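That reasoning amounts to a simple rule of thumb, sketched here in Swift. `suggestedThreadCount` is a hypothetical function, the core counts are passed in rather than read from the system, and the rule assumes a CPU-bound task on a lightly loaded Mac.

```swift
import Foundation

/// Suggested thread count for a CPU-bound task that can be split freely.
/// A rule of thumb drawn from the example above, not a macOS policy.
func suggestedThreadCount(qos: DispatchQoS.QoSClass, pCores: Int, eCores: Int) -> Int {
    switch qos {
    case .background:
        return max(1, eCores)   // confined to E cores, so more threads won't help
    default:
        return max(1, pCores)   // one thread per P core
    }
}

// M1 Pro or Max with 8 P cores and 2 E cores:
print(suggestedThreadCount(qos: .userInitiated, pCores: 8, eCores: 2))
print(suggestedThreadCount(qos: .background, pCores: 8, eCores: 2))
// Original M1 with 4 P cores and 4 E cores:
print(suggestedThreadCount(qos: .background, pCores: 4, eCores: 4))
```

The same background task is given two threads on the M1 Pro or Max, but four on the original M1.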

There’s another problem hidden here: if QoS were the only control over core allocation and thread performance, then background threads running on the original M1 chip with its four E cores would be more likely to complete quickly than on higher-performance M1 Pro and Max chips, with only two E cores. I’ll look at that in the next article in this series.

Previous articles

Making the most of Apple silicon power: 1 M-series chips are different

Making the most of Apple silicon power: 2 Core capabilities

MacSysAdmin 2022 video (watch)

MacSysAdmin 2022 Keynote slides (download)