Ever since Apple was unwise enough to suggest that it might check certain images to see whether they were Child Sexual Abuse Material (CSAM), rumours have been rife that it has pressed ahead and now does that. I gather a new claim is being pushed out that this is performed in Ventura 13.1, so this article is an attempt to determine whether there’s any truth in that.

This claim boils down to Apple automatically being sent identifiers of images that a user has simply ‘browsed in the Finder’ without that user’s consent or awareness. I should make it clear that this hasn’t been demonstrated: as far as I’m aware the only evidence provided is that a Mac on which images were being ‘browsed in the Finder’ tried to make an outgoing connection from mediaanalysisd to an Apple server at that time, as revealed by the software firewall Little Snitch.

When Apple was intending to check for CSAM, it kindly explained how it aimed to do that, by generating identifiers, known as neural hashes, for images. A moment’s thought should indicate that uploading every image is neither sensible nor practical; instead, some form of concise identifier is essential. Unlike normal hashes, which are intended to amplify the smallest change in the source file, neural hashes are intended to distinguish images according to their content and characteristics.
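To make that difference concrete, here is a minimal sketch in Python. It is not Apple's NeuralHash, which relies on a neural network; as a stand-in it uses a simple average hash, a basic perceptual hashing technique. The tiny 4 x 4 greyscale 'image' and all function names are invented for illustration. It changes one pixel by a single brightness level, then compares how many bits change in a SHA-256 digest and in the average hash.

```python
import hashlib

def bits_changed(a: bytes, b: bytes) -> int:
    """Count the bits that differ between two equal-length byte strings."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))

def average_hash(pixels):
    """Stand-in perceptual hash: one bit per pixel, set if brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    return [1 if p > mean else 0 for p in flat]

def hamming(h1, h2) -> int:
    """Count the positions at which two bit lists differ."""
    return sum(a != b for a, b in zip(h1, h2))

# Hypothetical 4 x 4 greyscale image: dark left half, bright right half.
image = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [18, 28, 208, 218],
]

# The same image with one pixel made one level brighter.
tweaked = [row[:] for row in image]
tweaked[0][0] += 1

raw_a = bytes(p for row in image for p in row)
raw_b = bytes(p for row in tweaked for p in row)

crypto_changed = bits_changed(
    hashlib.sha256(raw_a).digest(), hashlib.sha256(raw_b).digest()
)
perceptual_changed = hamming(average_hash(image), average_hash(tweaked))

print(f"SHA-256 bits changed: {crypto_changed} of 256")
print(f"Average-hash bits changed: {perceptual_changed} of 16")
```

The SHA-256 digests differ in many of their 256 bits, typically around half, although the two images are visually indistinguishable. The average hash is unchanged, because what it encodes is the pattern of light and dark rather than the exact bytes.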

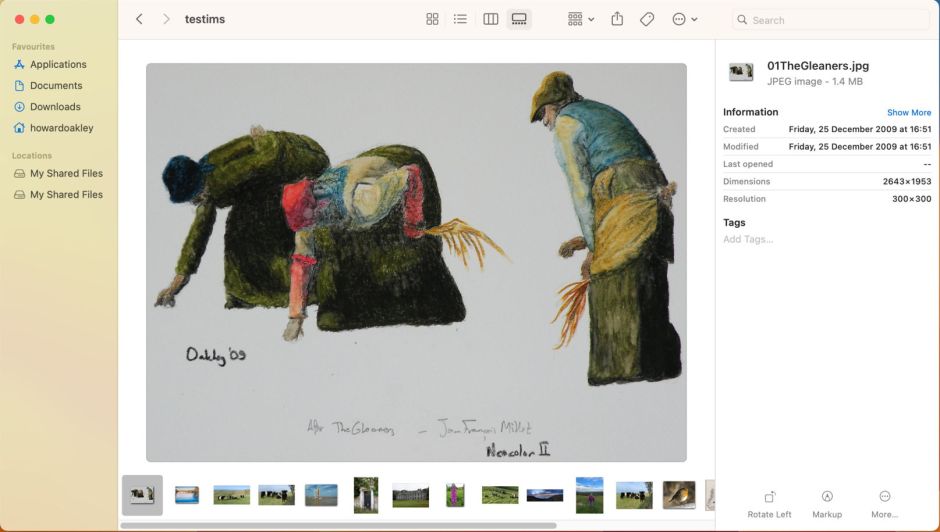

Not only did Apple explain the principles of its intended detection system, but it gave us a free demonstration of those in macOS Monterey, with Visual Look Up (VLU). That enables your Mac, with a little help from Apple’s servers to match neural hashes, to identify paintings, breeds of dog and cat, and sundry other subjects in images.

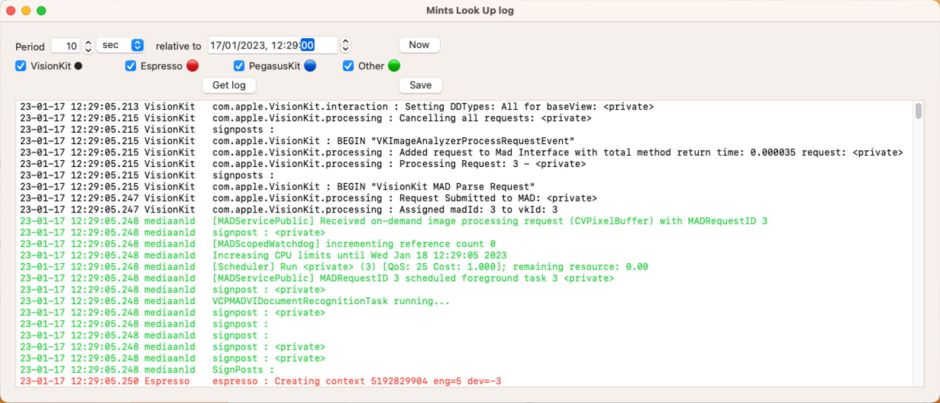

I have taken a deep look inside the processes involved in VLU, to the point where one of my free apps, Mints, can easily obtain a full account of them from the Unified log. I therefore performed a series of tests in a macOS 13.1 virtual machine running in Viable on my Mac Studio M1 Max, to discover what might explain the observation reported, and whether that supported the claim being made.

Gallery browsing

To get an idea of whether mediaanalysisd or any other component involved in image analysis or neural hash generation was active when looking through images in a Gallery window in the Finder, I loaded 18 assorted images in different formats into the ~/Documents folder of my VM, opened a gallery view of them, and looked through them for a period of one minute. I then captured all log entries for that period, a total of more than 40,000, and saved that excerpt to a file, using Ulbow. Not only was there no evidence of any image analysis taking place, but in that period there were no log entries from mediaanalysisd at all. Not one.

I repeated this over a period of 30 seconds, this time using Mints to display all log entries associated with VLU and Live Text. There were none at all in that period.
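For anyone wanting to repeat that check without Ulbow or Mints, the following is a rough sketch of one alternative, not the method I used. It assumes you have saved a log excerpt in the ndjson style, for example from `log show --last 1m --style ndjson`, and that each entry names its process in a `processImagePath` field. The function name and the sample entries are invented purely to exercise the code, and aren't real log output.

```python
import json
from collections import Counter

def count_by_process(ndjson_lines):
    """Count log entries per process name from ndjson-style log lines."""
    counts = Counter()
    for line in ndjson_lines:
        line = line.strip()
        if not line.startswith("{"):
            continue  # skip blank lines and any non-JSON header text
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip truncated or malformed lines
        # Assumption: the process is given as a full path in this field.
        path = entry.get("processImagePath", "")
        if not path:
            continue  # skip summary lines that carry no process
        counts[path.rsplit("/", 1)[-1]] += 1
    return counts

# Made-up sample entries for illustration only, not captured from a real log.
sample = [
    '{"processImagePath": "/System/Library/CoreServices/Finder.app/Contents/MacOS/Finder", "eventMessage": "example message"}',
    '{"processImagePath": "/usr/libexec/runningboardd", "eventMessage": "example message"}',
    '{"processImagePath": "/System/Library/CoreServices/Finder.app/Contents/MacOS/Finder", "eventMessage": "example message"}',
    '{"count": 3, "finished": 1}',
]

counts = count_by_process(sample)
print("mediaanalysisd entries:", counts["mediaanalysisd"])
print("Finder entries:", counts["Finder"])
```

With a real excerpt you would read the lines from the saved file instead of `sample`. A count of zero for mediaanalysisd over the period in which you were browsing would match what I found.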

Visual Look Up

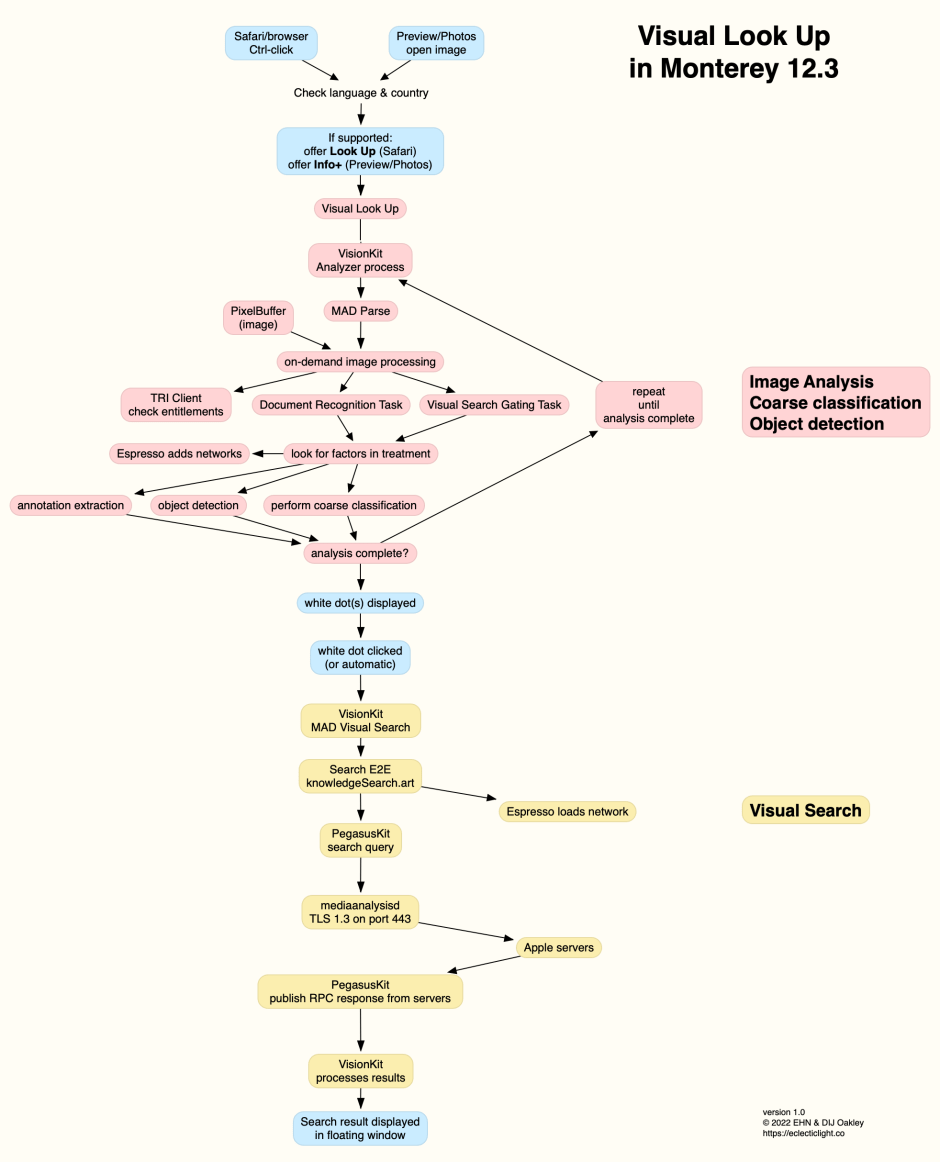

Although I studied VLU and Live Text in detail in Monterey, before going any further I wanted to confirm that they behave similarly in macOS 13.1, and write similar sequences of messages in the log. I therefore obtained a log extract using Mints for single image VLU using Preview. This confirmed that messages and processes appear very similar to those I had analysed before. These are summarised in the following diagram.

Note that mediaanalysisd doesn’t contact Apple’s servers until late in the process, to perform matching of the neural hashes generated by the preceding image analysis. The response from those servers then enables VLU results to be displayed in a window over the image.

QuickLook Preview

Although the original description given was ‘Finder browsing’, for some that might include the display of images as QuickLook Previews, by selecting the image and pressing the Spacebar. In my previous examination of VLU and Live Text, this wasn’t a feature that I had investigated. I therefore obtained log excerpts for two images being opened in QuickLook Preview. One of those images contained some handwritten text, the other did not.

For both images, VisionKit initiated image analysis when the image was being opened in its preview window. For the image which didn’t contain text, this completed in a total processing time of 615 ms, failed to recover any text from that image, and attempted no remote connections. The image containing text took longer, 881 ms, and returned text of length 65 ‘DD’ (as given in the log) after a considerably more elaborate series of processes. Those included one outgoing secure TCP or QUIC connection by mediaanalysisd lasting 58 ms, before the completion of Visual Search Gating.

This is consistent with the briefer task used in Live Text, and quite different from VLU. There is thus no evidence of the generation of neural hashes or any search query by PegasusKit typical of the later stages of VLU.

Conclusions

- There is no evidence that local images on a Mac have identifiers computed and uploaded to Apple’s servers when viewed in Finder windows.

- Local images that are viewed in QuickLook Preview undergo normal analysis for Live Text, and text recognition where possible, but that doesn’t generate identifiers that could be uploaded to Apple’s servers.

- Images viewed in apps supporting VLU have neural hashes computed, and those are uploaded to Apple’s servers to perform look up and return its results to the user, as previously detailed.

- VLU can be disabled by disabling Siri Suggestions in System Settings > Siri & Spotlight, as previously explained.

- Users who want to block all such external mediaanalysisd look-ups can do so using a software firewall to block outgoing connections to Apple’s servers by that process through port 443. That may well disable other macOS features.

- Trying to harvest VLU neural hashes to detect CSAM would be doomed to failure for many reasons, most of which were raised with Apple at the time of its original proposals, and remain valid today.

- Alleging that a user’s actions result in controversial effects requires demonstration of the full chain of causation. Basing claims on the inference that two events might be connected, without understanding the nature of either, is reckless if not malicious.

If you doubt the accuracy or veracity of anything I have written above, then all the tools that I used are free, available from the links I’ve provided, and I look forward to reading your results.