Last week there was a surprise storm when Apple announced its plans to better protect our children. While I have my views on its proposals, which are almost certainly different from yours, what has become apparent is that few who have passed comment understand Apple’s proposals. Before deciding whether they’re a big step forward or a gross infringement of privacy, I believe it’s vital to understand just what Apple is intending to introduce.

For a start, there isn’t just one change but three separate ones: one is part of Parental Controls, and another consists of some fairly uncontentious improvements in Siri and Search. By far the most controversial is the third, which will apply to everyone who stores images in iCloud using iPhoto or Photos (which Apple somewhat anachronistically refers to as iCloud Photos): those images will be checked for CSAM (Child Sexual Abuse Material) before being uploaded. This raises many questions, in particular how images can be checked reliably, which is what I’m going to try to explain here.

Apple’s detailed announcement is essential reading, but its re-use of terms such as hash can be confusing, and it’s also easy to come away with the impression that image matching is all performed by “an AI”. There’s much more to it, and while Machine Learning (ML) is involved, I don’t think those working in AI would consider this an AI system as such.

Image classification

We’re most used to ML being used to classify images, a function well-supported by Apple’s operating systems and used by Apple’s and third-party apps. It’s now fairly straightforward to produce an app which can tell the difference between different painting styles, and hazard a good guess who appears in your family photos. We’re also aware of the comical misclassifications, which are quite common and make amusing tweets. That unreliability is why classification of this kind would be useless for detecting CSAM.

While we’re thinking about others analysing your photos, though, remember that when you give an app full access to your photos in Privacy controls, you’re allowing it to rifle through them and run whatever classification methods it chooses. If you’re concerned that others might have an unhealthy interest in interpreting your Photos library, the first thing you need to do is keep a close watch on anything gaining access. Privacy dialogs may be a pain, but they have an important purpose too.

The perfect match

At the other end of the scale, file integrity checking apps use cryptographic hashes to check that files are perfect down to the very last bit. To do this, they calculate a hash, a large binary number, and compare that with what it should be. Using this technique to match images guarantees that any match is perfect, but it also fails to notice images which look identical to us, or are very close matches, but differ slightly. Perhaps they’re JPEGs which use slightly different compression, one is a crop of the other, or the colour has been adjusted.

Trying to detect CSAM using cryptographic hashes would therefore be largely fruitless, and easily circumvented.
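To see just how brittle exact matching is, here’s a minimal Python sketch using the standard library’s `hashlib`. The “image” is just a run of made-up bytes standing in for a file; flipping a single bit in it, a change no one could ever see, produces a SHA-256 digest bearing no resemblance to the original.

```python
import hashlib

def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()

# Hypothetical stand-in for the bytes of an image file.
original = bytes(range(256)) * 16

# The same "image" with one bit flipped in one byte.
tweaked = bytearray(original)
tweaked[1000] ^= 0x01
tweaked = bytes(tweaked)

h1 = sha256_hex(original)
h2 = sha256_hex(tweaked)

# Count how many of the 256 digest bits differ between the two hashes.
differing_bits = bin(int(h1, 16) ^ int(h2, 16)).count("1")

print(h1 == h2)        # False: an exact-match check fails
print(differing_bits)  # typically close to half of the 256 bits
```

A cryptographic hash is designed to behave like this, which is exactly why it’s the wrong tool for recognising images that have merely been recompressed, cropped or colour-adjusted.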

Matching colours

Allow me a slight diversion here for the sake of explanation. Let’s match colours instead of detailed images. Judging by eye, we’d take the two swatches, view them under the same light, and compare them. You can do this numerically using a colour-measurement device, which can provide their colour co-ordinates.

There are several different systems for obtaining co-ordinates, such as the HSB method, which uses Hue, Saturation and Brightness.

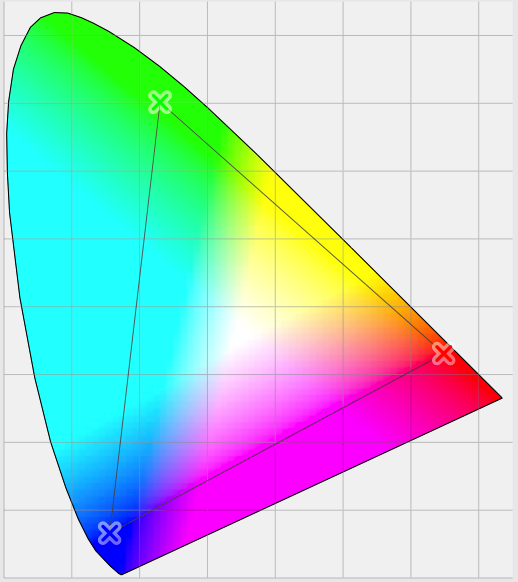

Colour space diagrams like this using xyz co-ordinates are a bit more abstract, perhaps, but work similarly.

An app can determine whether two colour measurements match within a defined tolerance, which allows for subtle differences in the surfaces being compared, and to a degree in lighting.
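As a sketch of matching within a tolerance, the Python below treats two colour readings as CIELAB co-ordinates. That’s an assumption: L*a*b* is used here because straight-line distance in it roughly tracks perceived difference. It uses the simple CIE76 colour difference, ΔE, which is just the Euclidean distance between the two points. The tolerance of 2.0 and the swatch readings are hypothetical.

```python
import math

Lab = tuple[float, float, float]

def delta_e76(c1: Lab, c2: Lab) -> float:
    """CIE76 colour difference: Euclidean distance between two L*a*b* colours."""
    return math.dist(c1, c2)

def colours_match(c1: Lab, c2: Lab, tolerance: float = 2.0) -> bool:
    """True if two measurements differ by no more than the (hypothetical) tolerance."""
    return delta_e76(c1, c2) <= tolerance

swatch = (52.0, 40.0, 25.0)        # hypothetical reading
same_paint = (52.5, 39.4, 25.8)    # same paint, slightly different surface or light
other_paint = (60.0, 10.0, 30.0)   # a different colour altogether

print(colours_match(swatch, same_paint))   # True
print(colours_match(swatch, other_paint))  # False
```

Widening the tolerance accepts more variation in surface and lighting, at the cost of occasionally accepting a genuinely different colour.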

PhotoDNA

Imagine now analysing an image by converting it to monochrome or even black and white, dividing it up into squares, and using those to produce a set of many co-ordinates which characterise that image. That’s essentially how PhotoDNA, developed by Hany Farid and Microsoft Research, works. Each image that you upload to social media, including Twitter and Facebook, is analysed by cloud servers to produce a ‘hash’, which is compared with a huge database of hashes of known CSAM.
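The following Python is a toy version of that idea, not PhotoDNA itself, whose actual algorithm isn’t reproduced here. It assumes a greyscale image supplied as rows of 0-255 values, splits it into a 4 × 4 grid, takes the mean brightness of each square, and subtracts the overall mean so that simply brightening the whole image doesn’t change the result. Two images are treated as matching when their descriptors lie within a hypothetical `MATCH_THRESHOLD` of one another.

```python
import math

MATCH_THRESHOLD = 20.0  # hypothetical tolerance, chosen only for illustration

def grid_descriptor(grey, grid=4):
    """Return grid*grid co-ordinates: each square's mean brightness minus the image mean."""
    h, w = len(grey), len(grey[0])
    means = []
    for gy in range(grid):
        for gx in range(grid):
            rows = range(gy * h // grid, (gy + 1) * h // grid)
            cols = range(gx * w // grid, (gx + 1) * w // grid)
            cell = [grey[y][x] for y in rows for x in cols]
            means.append(sum(cell) / len(cell))
    overall = sum(means) / len(means)
    return [m - overall for m in means]

def is_match(desc_a, desc_b):
    """Match if the descriptors are within MATCH_THRESHOLD (Euclidean distance)."""
    return math.dist(desc_a, desc_b) <= MATCH_THRESHOLD

# A 16 x 16 diagonal gradient, a brightened copy, and its negative.
original = [[(x + y) * 7 for x in range(16)] for y in range(16)]
brighter = [[v + 10 for v in row] for row in original]
negative = [[255 - v for v in row] for row in original]

d_orig = grid_descriptor(original)
print(is_match(d_orig, grid_descriptor(brighter)))  # True
print(is_match(d_orig, grid_descriptor(negative)))  # False
```

Real systems use far richer descriptors that also cope with cropping and rescaling, but the principle of reducing an image to a set of co-ordinates and comparing those within a tolerance is the same.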

There’s a delicate balance here between sensitivity and specificity. If each hash excludes too many near-identical images, then it will be highly specific but not sensitive enough; if it includes any dissimilar images, then it will be too sensitive and not specific enough. The method used to generate the hashes is thus critical to the success of PhotoDNA.
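Sensitivity and specificity can be made concrete with a few lines of Python. Suppose we have a hypothetical set of descriptor distances for pairs of images known to be the same (after cropping, recompression and so on) and for pairs known to be different, and call any distance at or below a threshold a match. The distances are invented purely to show the trade-off.

```python
def sensitivity_specificity(same_distances, different_distances, threshold):
    """Treat distance <= threshold as a match and score the result.

    sensitivity = true positives / all pairs that really are the same
    specificity = true negatives / all pairs that really are different
    """
    tp = sum(d <= threshold for d in same_distances)
    fn = len(same_distances) - tp
    tn = sum(d > threshold for d in different_distances)
    fp = len(different_distances) - tn
    return tp / (tp + fn), tn / (tn + fp)

same = [2.0, 3.5, 5.0, 8.0, 12.0]          # hypothetical: truly the same image
different = [9.0, 15.0, 20.0, 30.0, 45.0]  # hypothetical: truly different images

for t in (4.0, 10.0, 25.0):
    sens, spec = sensitivity_specificity(same, different, t)
    print(f"threshold {t}: sensitivity {sens:.1f}, specificity {spec:.1f}")
# threshold 4.0: sensitivity 0.4, specificity 1.0
# threshold 10.0: sensitivity 0.8, specificity 0.8
# threshold 25.0: sensitivity 1.0, specificity 0.4
```

Loosening the threshold raises sensitivity at the expense of specificity, and tightening it does the reverse; the only way to get both high is a descriptor that keeps the two sets of distances well apart.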

Currently, PhotoDNA only runs on servers, and no one seems to know whether it would ever be suitable for popular devices like iPhones. Although it is being extended to cover videos as well, the computational effort involved may well be beyond what is reasonable for a mobile phone: it handles video by extracting key frames and performing similar hash-matching on each of them. For multi-shot videos lasting several minutes or more, that could be demanding even for servers to perform in anything like real time.
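Key frames can be chosen in many ways, and I don’t know exactly how PhotoDNA does it. One simple approach, sketched here in Python as an assumption, is to compute a descriptor for each frame and keep a frame only when it differs from the last key frame by more than a chosen amount, which picks up the cuts between shots. The two-number frame descriptors and the threshold are hypothetical stand-ins for real per-frame image descriptors.

```python
import math

def select_key_frames(frame_descriptors, change_threshold):
    """Return indices of frames that differ from the last selected key frame
    by more than change_threshold (a simple scene-change rule)."""
    if not frame_descriptors:
        return []
    keys = [0]  # always keep the first frame
    for i in range(1, len(frame_descriptors)):
        last_key = frame_descriptors[keys[-1]]
        if math.dist(frame_descriptors[i], last_key) > change_threshold:
            keys.append(i)
    return keys

# Hypothetical descriptors for seven frames spanning three shots.
frames = [(10, 10), (11, 10), (10, 12), (80, 75), (81, 76), (20, 200), (21, 199)]
print(select_key_frames(frames, 20.0))  # [0, 3, 5]
```

Every frame still has to be decoded and described just to decide which ones to keep, and each key frame kept then needs its own hash and lookup, so the work grows with both the length of the video and the number of shots in it.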

NeuralHash

Apple has adopted a similar approach, but with some important differences.

The first step is to develop a system of numeric image descriptors, like colour co-ordinates, which characterise each image. This involves Machine Learning, to discover which co-ordinates are best suited to the task, learning on huge collections – hundreds of thousands – of CSAM images which have been gathered by organisations like NCMEC. What this produces is a set of (real) numbers for each image and its variants, which are then combined into a unique hash for that group of images. The first of those steps uses a convolutional neural network, hence the name NeuralHash.

The goal here is to ensure that an iPhone can compute a NeuralHash for an image in real time, and quickly compare that against a local database of NeuralHash values for known CSAM. Each NeuralHash has to have high sensitivity and specificity, so that the chances of false positives and negatives are extremely low.

Training the convolutional neural network involves presenting it with pairs of images. Some consist of the original CSAM image and a modified version of it which is perceived to be the same; other pairs consist of the original image and an image which isn’t seen as being the same at all. Only when the neural network handles both kinds of pair correctly is it ready for testing.
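One common way to score a network on pairs like these is a contrastive loss, sketched below in Python. This is an assumption for illustration, not Apple’s published training objective: the network’s outputs (embeddings) for a pair that shows the same image are penalised for being far apart, while those for a pair of different images are penalised only if they’re closer than a margin. The two-dimensional embeddings and the margin of 1.0 are hypothetical.

```python
import math

def contrastive_loss(emb_a, emb_b, same, margin=1.0):
    """Loss for one training pair of embeddings produced by the network.

    same=True: the pair shows the same image (e.g. an original and a resized copy),
    so any distance between the embeddings is penalised.
    same=False: the images differ, so they're penalised only if closer than margin.
    """
    d = math.dist(emb_a, emb_b)
    if same:
        return d ** 2
    return max(0.0, margin - d) ** 2

print(contrastive_loss((0.0, 0.0), (0.0, 3.0), same=True))   # 9.0: same image, too far apart
print(contrastive_loss((0.0, 0.0), (0.5, 0.0), same=False))  # 0.25: different images, too close
print(contrastive_loss((0.0, 0.0), (2.0, 0.0), same=False))  # 0.0: different images, well apart
```

Training would adjust the network’s weights to minimise this loss summed over very many pairs, pulling variants of the same image together and pushing different images apart.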

Matching NeuralHashes

The set of real numbers for each image cluster is extremely large and not amenable to direct comparison. The integer NeuralHash is therefore generated using a single bit for the ‘result’ of each of the co-ordinates measured, a process known as hyperplane Locality-Sensitive Hashing (LSH).
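The Python below sketches hyperplane LSH in general, not Apple’s implementation. Each bit records which side of a random hyperplane through the origin the descriptor falls on, that is, the sign of its dot product with that hyperplane’s normal. Descriptors pointing in nearly the same direction land on the same side of almost every hyperplane, so their hashes differ in very few bits, while unrelated descriptors differ in about half. The 128-dimensional descriptors and 96-bit hash are illustrative assumptions, and the descriptors are random numbers standing in for a network’s output.

```python
import random

def make_hyperplanes(n_bits, dim, seed=0):
    """Random hyperplane normals; a fixed seed gives the same hyperplanes every time."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_bits)]

def lsh_hash(descriptor, hyperplanes):
    """Pack one bit per hyperplane: 1 if the descriptor is on its positive side."""
    h = 0
    for normal in hyperplanes:
        dot = sum(w * x for w, x in zip(normal, descriptor))
        h = (h << 1) | (1 if dot >= 0 else 0)
    return h

def hamming(a, b):
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")

planes = make_hyperplanes(n_bits=96, dim=128)
rng = random.Random(42)
descriptor = [rng.gauss(0.0, 1.0) for _ in range(128)]
near = [x + rng.gauss(0.0, 0.05) for x in descriptor]  # slightly altered image
unrelated = [rng.gauss(0.0, 1.0) for _ in range(128)]  # a different image

h = lsh_hash(descriptor, planes)
print(f"{h:024x}")                              # 96 bits as 24 hex digits
print(hamming(h, lsh_hash(near, planes)))       # typically only a few bits
print(hamming(h, lsh_hash(unrelated, planes)))  # typically around 48
```

Collapsing each co-ordinate comparison to a single bit is what turns a long list of real numbers into a compact integer that can be compared quickly.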

Apple then uses an extended version of a Private Set Intersection (PSI) protocol to match the NeuralHashes of images to be transferred to iCloud Photos against those for known CSAM, which are supplied by organisations like NCMEC. This has to be performed so that NeuralHashes of ‘innocent’ images (which don’t match known CSAM) aren’t released from the device to Apple, and so that Apple’s server only learns about matches once their number exceeds a threshold. If the threshold is set at 5, for instance, the server will only know of matches if there are more than five of them. That is achieved using Threshold Secret Sharing. Additional protection for the user is provided by the use of a Safety Voucher. Apple describes each of these quite elaborate protections in its technical documentation.
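The best-known form of threshold secret sharing is Shamir’s scheme, sketched here in Python to illustrate the principle; it isn’t claimed to be Apple’s construction. A secret, such as a decryption key, becomes the constant term of a random polynomial over a prime field, and each share is one point on that polynomial. Any k points determine the polynomial, and so the secret, by Lagrange interpolation, while fewer than k reveal nothing about it. The secret, k = 5 and the ten shares are all hypothetical.

```python
import random

PRIME = 2**127 - 1  # a Mersenne prime; all arithmetic is modulo this

def make_shares(secret, k, n, rng):
    """Split secret into n shares, any k of which can recover it."""
    # Random polynomial of degree k-1 whose constant term is the secret.
    coeffs = [secret] + [rng.randrange(PRIME) for _ in range(k - 1)]
    shares = []
    for x in range(1, n + 1):
        y = 0
        for c in reversed(coeffs):  # evaluate by Horner's rule
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares

def recover_secret(shares):
    """Lagrange interpolation at x = 0 using whatever shares are supplied."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = num * (-xj) % PRIME
                den = den * (xi - xj) % PRIME
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret

rng = random.Random(7)
key = 123456789  # hypothetical stand-in for a decryption key
shares = make_shares(key, k=5, n=10, rng=rng)

print(recover_secret(shares[:5]) == key)   # True: five shares suffice
print(recover_secret(shares[2:9]) == key)  # True: any five or more work
print(recover_secret(shares[:4]) == key)   # False: four shares aren't enough
```

If each matching image contributes one share, the server holding the shares can’t decrypt anything until enough matches have accumulated, which is the behaviour the threshold is meant to provide.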

The threshold serves another purpose: controlling the rate of false positives. There is a very small risk that any image matching could produce a positive result when the images are different and shouldn’t have been matched. Apple should have a good idea of that risk from testing of the method used to generate NeuralHashes. To further reduce the risk of such errors occurring, the system requires more than one positive match to occur before Apple is notified of the likelihood of CSAM content in the images to be transferred to iCloud Photos. And tweaking the threshold is a simple way to adjust the sensitivity of the whole matching system.
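To see why requiring several matches helps, suppose, purely as an assumption, that each image independently has the same small probability p of a false match. The number of false matches in a library of n images then follows a binomial distribution, and the chance of reaching k or more of them falls steeply as k rises. The Python below computes that tail probability, working in logarithms so that large libraries don’t overflow. The library size and value of p are made up, and real false matches need not be independent.

```python
from math import exp, lgamma, log, log1p

def prob_at_least(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p), summed in log space to avoid overflow."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    total = 0.0
    for i in range(k, n + 1):
        # log of C(n, i) * p**i * (1 - p)**(n - i)
        log_term = (lgamma(n + 1) - lgamma(i + 1) - lgamma(n - i + 1)
                    + i * log(p) + (n - i) * log1p(-p))
        total += exp(log_term)
    return total

n_images = 10_000  # hypothetical library size
p_false = 1e-6     # hypothetical per-image false-match probability
for k in (1, 2, 5, 10):
    print(k, prob_at_least(k, n_images, p_false))
```

Under these assumptions the chance of a single false match somewhere in the library is about 1%, but each extra match required cuts the chance by a further factor of roughly a hundred, which is why a threshold above one is such a simple and effective control.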

Extension

PhotoDNA was extended in 2016 to cover not only CSAM but images and video with extremist and terrorist content. As it performs matching on cloud servers, such extensions can be accommodated relatively easily.

Apple’s matching relies completely on local computation of the NeuralHash for each item to be checked. If it were to adopt the key frame approach to matching video too, each video to be checked would need to be decoded, key frames identified and extracted, then a NeuralHash computed on every one. Mobile devices with limited memory and constraints on multitasking are far from ideal platforms for such tasks.

There are also issues with extending the types of images matched. Operating the same system to match images out of the domain on which the convolutional neural network was trained and tested carries significant risk of loss of sensitivity and specificity. Extending matches to different types of image isn’t just a matter of adding more NeuralHashes to the list, and only Apple can tell how domain-specific its current solution is. In some domains, matching performance could be as comically poor as flawed attempts at image classification.

Does it need to work well?

There’s an argument, with support from Game Theory, that Apple can set a high threshold for the number of matches, and only detect and report a few cases of CSAM. Indeed, even that may be unnecessary: the scheme’s existence alone may be enough to drive anyone currently sharing CSAM to abandon iCloud Photos altogether.

The worst outcome would be a high rate of false positives, which would only strengthen arguments against these measures. Whether any outcome would result in more prosecutions for, or any reduction in, CSAM is a matter of speculation, as are the motives of those involved. What is abundantly clear, though, is that Apple has already devoted extensive engineering and development effort to this, and is unlikely to be willing to abandon the scheme in a hurry.