Apple silicon Macs are distinctive for containing SoCs with at least eight cores of two different types, designed for efficiency (E) and performance (P). macOS provides elaborate mechanisms for allocating threads to cores, to ensure maximum economy in energy use while delivering the performance the user expects. Allocation is based on the designated Quality of Service (QoS) of each thread. Threads assigned the lowest QoS, for ‘background’ tasks, are allocated only to E cores, while those with higher QoS are preferentially allocated to P cores, although they can also be run on E cores when necessary. Full details are given in this article.

vCPUs

When a guest operating system is run in virtualization, its code is run on virtual cores, known as vCPUs, which are managed by the hypervisor together with the host operating system. As no information is provided on how vCPUs are allocated to physical cores, this article looks at how vCPUs and physical cores are managed in lightweight virtualization, using the Virtualization framework, on Apple silicon Macs in macOS Monterey.

The first clues come from the Unified log. When an Apple silicon Mac starts up, the kernel is loaded and runs on a single core, then starts up the other cores in sequence:

0.067302 ml_processor_register>cpu_id 0x0 cluster_id 0 cpu_number 0 is type 1

0.067352 cpu_start() cpu: 0

0.067371 ml_processor_register>cpu_id 0x1 cluster_id 0 cpu_number 1 is type 1

0.067391 cpu_start() cpu: 1

0.067412 ml_processor_register>cpu_id 0x2 cluster_id 1 cpu_number 2 is type 2

0.067435 [ 6.186958]: arm_cpu_init(): cpu 1 online

0.067437 cpu_start() cpu: 2

0.067457 ml_processor_register>cpu_id 0x3 cluster_id 1 cpu_number 3 is type 2

0.067481 cpu_start() cpu: 3

0.067537 [ 6.187037]: arm_cpu_init(): cpu 2 online

0.067541 ml_processor_register>cpu_id 0x4 cluster_id 1 cpu_number 4 is type 2

0.067574 [ 6.187097]: arm_cpu_init(): cpu 3 online

0.067576 cpu_start() cpu: 4

0.067599 ml_processor_register>cpu_id 0x5 cluster_id 1 cpu_number 5 is type 2

0.067632 [ 6.187157]: arm_cpu_init(): cpu 4 online

0.067634 cpu_start() cpu: 5

0.068030 [ 6.187215]: arm_cpu_init(): cpu 5 online

0.068034 ml_processor_register>cpu_id 0x6 cluster_id 2 cpu_number 6 is type 2

0.068467 cpu_start() cpu: 6

0.068491 ml_processor_register>cpu_id 0x7 cluster_id 2 cpu_number 7 is type 2

0.068537 [ 6.188066]: arm_cpu_init(): cpu 6 online

0.068539 cpu_start() cpu: 7

0.068558 ml_processor_register>cpu_id 0x8 cluster_id 2 cpu_number 8 is type 2

0.068609 [ 6.188120]: arm_cpu_init(): cpu 7 online

0.068611 cpu_start() cpu: 8

0.068628 ml_processor_register>cpu_id 0x9 cluster_id 2 cpu_number 9 is type 2

0.068669 [ 6.188196]: arm_cpu_init(): cpu 8 online

0.068671 cpu_start() cpu: 9

0.069630 [ 6.188258]: arm_cpu_init(): cpu 9 online

That boot was on an M1 Pro, and shows its two E cores (type 1) in cluster 0 being started first, followed by the four P cores (type 2) in cluster 1, then the four P cores in cluster 2. On completion there are ten cores, numbered 0-9, online in their three clusters. Note that cpu 0 isn’t declared as being online, as it was already running the kernel.

In a lightweight virtualizer configured to use 4 vCPUs, the log in the guest macOS is similar:

0.651790 ml_processor_register>cpu_id 0x0 cluster_id 0 cpu_number 0 is type 0

0.651800 cpu_start() cpu: 0

0.651849 ml_processor_register>cpu_id 0x1 cluster_id 0 cpu_number 1 is type 0

0.651854 cpu_start() cpu: 1

0.651869 ml_processor_register>cpu_id 0x2 cluster_id 0 cpu_number 2 is type 0

0.651875 cpu_start() cpu: 2

0.651894 ml_processor_register>cpu_id 0x3 cluster_id 0 cpu_number 3 is type 0

0.651904 cpu_start() cpu: 3

0.652065 [ 0.651939]: arm_cpu_init(): cpu 1 online

0.652065 [ 0.651962]: arm_cpu_init(): cpu 2 online

0.652065 [ 0.651963]: arm_cpu_init(): cpu 3 online

One subtle difference is that these vCPUs are all of type 0, indicating that they’re vCPUs and not E or P cores. Neither the cpu_id nor the cluster_id given corresponds to the host’s physical cores or clusters: in this case, the vCPUs were generally run on physical cores 2-5 in cluster 1.
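Log entries of this form can be summarised programmatically when comparing host and guest. The following is a minimal sketch, assuming the entries have been saved as plain text in exactly the format shown above; `CoreEntry`, `parseCoreEntry` and `coresByCluster` are hypothetical names invented for illustration, not part of any Apple API.

```swift
import Foundation

/// One core as announced by an `ml_processor_register` log entry.
struct CoreEntry: Equatable {
    let cpuNumber: Int
    let clusterID: Int
    let type: Int   // in the logs above: 1 = E core, 2 = P core, 0 = vCPU
}

/// Parses a single log line, returning nil if it isn't a registration entry.
func parseCoreEntry(_ line: String) -> CoreEntry? {
    guard line.contains("ml_processor_register") else { return nil }
    let words = line.split(separator: " ").map(String.init)
    // Returns the integer that follows the given keyword, if there is one.
    func value(after key: String) -> Int? {
        guard let index = words.firstIndex(of: key), index + 1 < words.count else { return nil }
        return Int(words[index + 1])
    }
    guard let cluster = value(after: "cluster_id"),
          let number = value(after: "cpu_number"),
          let type = value(after: "type") else { return nil }
    return CoreEntry(cpuNumber: number, clusterID: cluster, type: type)
}

/// Groups all registration entries in a log excerpt by their cluster_id.
func coresByCluster(in log: String) -> [Int: [CoreEntry]] {
    let entries = log.split(separator: "\n").compactMap { parseCoreEntry(String($0)) }
    return Dictionary(grouping: entries, by: { $0.clusterID })
}

// A short excerpt taken from the host log above.
let sampleLog = """
0.067302 ml_processor_register>cpu_id 0x0 cluster_id 0 cpu_number 0 is type 1
0.067352 cpu_start() cpu: 0
0.067371 ml_processor_register>cpu_id 0x1 cluster_id 0 cpu_number 1 is type 1
0.067412 ml_processor_register>cpu_id 0x2 cluster_id 1 cpu_number 2 is type 2
"""

for (cluster, cores) in coresByCluster(in: sampleLog).sorted(by: { $0.key < $1.key }) {
    let numbers = cores.map { String($0.cpuNumber) }.joined(separator: ", ")
    print("cluster \(cluster): type \(cores[0].type), cpu \(numbers)")
}
```

Run against the guest log, the same sketch would report a single cluster 0 whose cores are all of type 0.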

How many vCPUs?

When configuring a virtual machine (VM), a lightweight virtualizer specifies the number of vCPUs to be used. The framework imposes limits on the range in VZVirtualMachineConfiguration.minimumAllowedCPUCount and .maximumAllowedCPUCount, but those limits don’t prevent a VM from being given more vCPUs than there are physical cores in the SoC, as reported in ProcessInfo.processInfo.processorCount.

The effect of exceeding physical core count can be disastrous. Asking for 11 vCPUs when only 10 physical cores are available appears tolerable, but ask for 12 and all the physical cores are maxed out, resulting in painfully slow booting and both host and guest grinding to a halt. Practical limits for the number of vCPUs are thus from 1 to the total number of physical cores (n), although virtualization is normally most effective with a slightly narrower range, such as 2 to (n – 1).
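The practical limits described above can be expressed as a small clamping function. This is a sketch under stated assumptions rather than Apple’s implementation: `safeVCPUCount` and `defaultVCPUCount` are hypothetical helpers, and the framework limits are passed in as plain integers so that the logic doesn’t depend on the Virtualization framework being available.

```swift
import Foundation

/// Clamps a requested vCPU count to a range that should perform acceptably.
/// `frameworkMin` and `frameworkMax` stand in for the framework's reported limits.
func safeVCPUCount(requested: Int, physicalCores: Int,
                   frameworkMin: Int, frameworkMax: Int) -> Int {
    // Upper bound: the smaller of the framework maximum and the physical core
    // count, but never below the framework minimum.
    let upper = max(frameworkMin, min(frameworkMax, physicalCores))
    return min(max(requested, frameworkMin), upper)
}

/// A conservative default that leaves one physical core free for the host.
func defaultVCPUCount(physicalCores: Int) -> Int {
    return max(1, physicalCores - 1)
}

let hostCores = ProcessInfo.processInfo.processorCount
// In a real virtualizer the two limits would be read from
// VZVirtualMachineConfiguration.minimumAllowedCPUCount and
// .maximumAllowedCPUCount; the literals used here are placeholders.
let chosen = safeVCPUCount(requested: 12, physicalCores: hostCores,
                           frameworkMin: 1, frameworkMax: 64)
print("Host cores: \(hostCores), vCPUs to request: \(chosen)")
```

The clamped value would then be assigned to the `cpuCount` property of the VM configuration. Capping at the physical core count is a design choice based on the observations above, not a limit enforced by the framework.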

Mapping vCPUs to physical cores

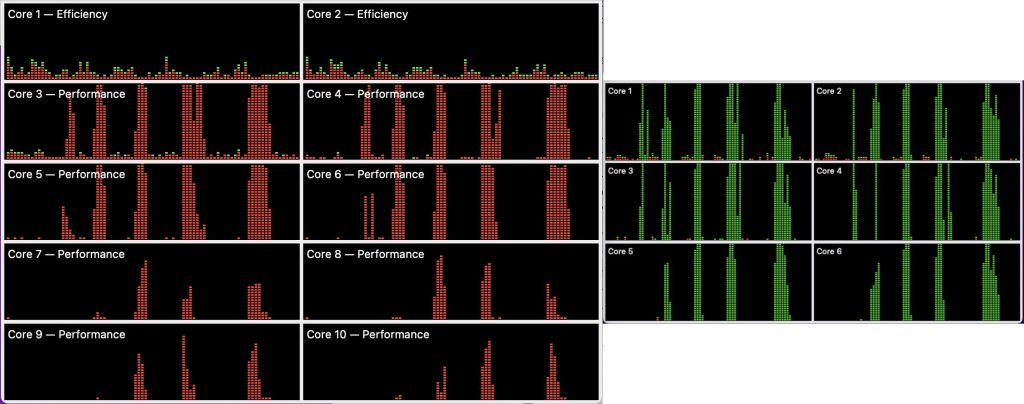

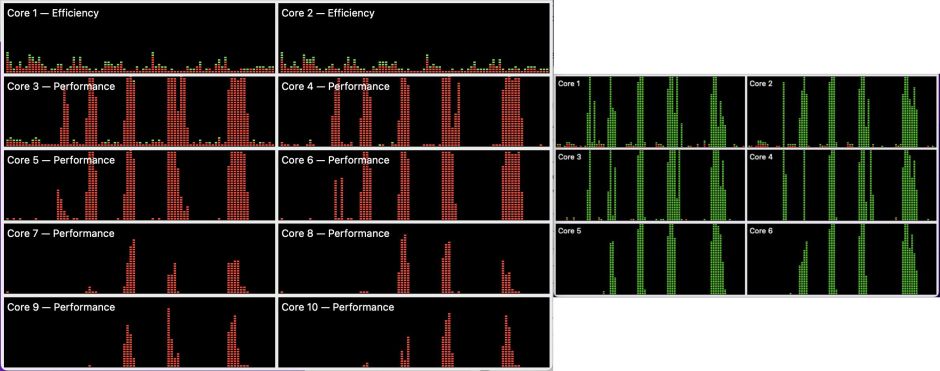

To understand how host macOS maps vCPUs to physical cores, I ran a series of tests in which I loaded the vCPUs in guest Monterey, with 2, 4 and 6 vCPUs set for that guest. Load was provided by AsmAttic running a tight CPU-bound floating-point loop, and virtualization was performed by Viable on a Mac Studio with an M1 Max. In the following screenshots, CPU History windows in Activity Monitor are shown for the host (left) and guest (right). The number of threads in each bout of work was increased from 2 in the leftmost bout to 8 (and 9, with 6 vCPUs).

With 2 vCPUs, the CPU % shown in the guest (four bursts in the right half of the righthand window) was reflected in raised CPU % on all four physical cores in the first P cluster, with slight overspill to cores 7 and 8. The pattern seen on the physical cores is the same as that seen when running two threads on the host at a relatively high QoS.

With 4 vCPUs, the four bouts of high workload seen on the guest’s vCPUs at the right are reflected in a similar pattern on the physical cores of the host, at the left. There’s more overspill into the second P cluster, but the total seen on the physical cores is essentially the same as would be seen with four threads running there at a relatively high QoS.

This extends to 6 vCPUs too, where there’s an additional fifth bout of 9 threads of workload in the guest (right). Total CPU % in the physical cores at the left is similar to 6 threads being run on the host, and there’s no overspill on its E cores. Note that, as in the two previous screenshots, green user work on the guest is shown as red system work on the host.

When extended to 10 vCPUs, matching all 10 physical cores, the E cores show the expected load, as if 10 threads were running on the host.

These results demonstrate conclusively that vCPUs aren’t mapped one-to-one to physical cores. Instead, each vCPU behaves as if it were a relatively high QoS thread on the host, and is shared across the physical cores in a similar manner.

QoS

No difference was observed in core allocation, either in vCPUs or physical cores in the host, between working threads at minimum and maximum QoS when run on the guest macOS. No QoS setting caused vCPUs to be run exclusively or preferentially on physical E cores, and no differences were seen in times to workload completion, other than those resulting from the different performance of E and P cores. All QoS settings in the guest resulted in the same pattern of physical core allocation on the host, first to the P cores, then to E cores when necessary.
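A comparison of this kind can be approximated with a short test harness. The sketch below is not the code used by AsmAttic: it assumes a simple floating-point loop dispatched to a global queue at a chosen QoS, and `floatingPointLoad`, `timeLoad` and `Accumulator` are hypothetical names introduced for illustration.

```swift
import Foundation
import Dispatch

/// Collects results from worker threads so the work can't be optimised away.
final class Accumulator: @unchecked Sendable {
    private let lock = NSLock()
    private var total = 0.0
    func add(_ value: Double) { lock.lock(); total += value; lock.unlock() }
    var value: Double { lock.lock(); defer { lock.unlock() }; return total }
}

/// A tight CPU-bound floating-point loop, standing in for the test load.
func floatingPointLoad(iterations: Int) -> Double {
    var x = 1.0
    for i in 0..<max(0, iterations) {
        x = (x * 1.000001 + Double(i + 1)).squareRoot()
    }
    return x
}

/// Runs `threads` copies of the load at the given QoS, returning the elapsed
/// time in seconds and a checksum of the results.
func timeLoad(threads: Int, iterations: Int,
              qos: DispatchQoS.QoSClass) -> (seconds: TimeInterval, checksum: Double) {
    let group = DispatchGroup()
    let queue = DispatchQueue.global(qos: qos)
    let results = Accumulator()
    let start = Date()
    for _ in 0..<threads {
        queue.async(group: group) {
            results.add(floatingPointLoad(iterations: iterations))
        }
    }
    group.wait()   // block until every copy of the load has finished
    return (Date().timeIntervalSince(start), results.value)
}

// Compare the lowest and highest QoS classes with the same workload.
let classes: [DispatchQoS.QoSClass] = [.background, .userInteractive]
for qos in classes {
    let result = timeLoad(threads: 4, iterations: 1_000_000, qos: qos)
    print("\(qos): \(result.seconds) s")
}
```

Going by the observations above, the two times would be expected to be similar when this is run in a guest. The thread and iteration counts are arbitrary and would need increasing for the load to show clearly in Activity Monitor.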

This contrasts markedly not only with code run natively on Apple silicon chips, where low QoS elicits a very different pattern of core allocation, but also with Intel Macs, where low QoS results in constrained performance despite their cores being identical.

Consequences

Those writing virtualizers based on the Virtualization framework need to constrain the number of vCPUs requested in a VM configuration, to avoid asking for more than will perform properly. This is demonstrated clearly in Apple’s sample code.

Code run in guest macOS in the Virtualization framework may perform differently to that run on the host. Services run at low QoS can’t be constrained to E cores, and will contend with threads intended to be run at high QoS. Before launching a VM, users need to consider how many vCPUs to allocate to it, being aware that, when the VM is busy, those vCPUs will effectively reduce the P core capacity available to host processes.

Even though the total remaining physical cores might appear sufficient, a guest will ‘steal’ P cores, which could force host user processes to be allocated to E instead of P cores. Running vCPUs will also reduce energy efficiency overall, and on notebooks it will reduce endurance on battery. Although I haven’t yet investigated core allocation when running a Linux guest, it’s most likely that the same effects will be seen there too. Lightweight virtualizers should therefore offer the user a choice of the number of vCPUs to use for the guest.