Apple’s M1 chips have two types of CPU core, used by different types of software. Efficiency (E) cores are predominantly used to run services and background processes in macOS, such as making Time Machine backups and maintaining Spotlight indexes. User apps run their threads largely on Performance (P) cores, which consume more power to complete their tasks interactively.

macOS doesn’t give developers or users direct control over which type of core processes or threads run on. Instead, core allocation is determined by the Quality of Service (QoS) set for processes, threads and their queues. The lowest QoS setting, background (integer equivalent 9), confines that code to the E cluster; the three higher settings allow it to run on either the E or P cluster, so it can run faster but with higher power use. Apps can give the user control over the QoS of their threads, and it’s possible to run code such as command tools at a chosen QoS, but there’s no general method by which the user can control QoS, and thus how fast any given process or thread will run.
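The rule described above can be written as a simple lookup from QoS to the clusters a thread may use. This is a minimal Python sketch, assuming the background class plus the three higher classes with their integer values from `<sys/qos.h>` (the default class, 21, is left out because it isn't discussed here). `QoS` and `eligible_clusters` are hypothetical names; the code models the behaviour described in the text and does not call into macOS.

```python
from enum import IntEnum


class QoS(IntEnum):
    """QoS classes discussed here, with their integer values from <sys/qos.h>."""
    BACKGROUND = 9
    UTILITY = 17
    USER_INITIATED = 25
    USER_INTERACTIVE = 33


def eligible_clusters(qos: QoS) -> frozenset:
    """Return the clusters a thread at this QoS may use, per the rule in the text.

    Background is confined to the E cluster; every higher class may use E or P.
    This is a model of observed behaviour, not a call into macOS.
    """
    if qos == QoS.BACKGROUND:
        return frozenset({"E"})
    return frozenset({"E", "P"})


for q in QoS:
    print(f"{q.name:<16} {int(q):>2} -> {sorted(eligible_clusters(q))}")
```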

There are times when the user might wish to accelerate tasks that normally run exclusively on E cores. For example, knowing that a particular backup might be large, they might leave their Mac to get on with it, wanting it to complete as quickly as possible. Two methods appear intended to change the QoS of processes: the command tool taskpolicy, and its programmatic equivalent, the function setpriority().

Recent experience using these shows that, while they can demote running processes to E cores, they can’t promote processes and threads already confined to E cores so that they can use both core types. I recently demonstrated this using the command

taskpolicy -B -p 567

which should promote the process with PID 567 to run on both types of core. When that process is normally confined to running on the E cluster, that command has no effect on its core allocation or performance.
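For code rather than the command line, a call equivalent to taskpolicy’s `-b` and `-B` options might look like the sketch below. It assumes that taskpolicy works by calling `setpriority()` with `PRIO_DARWIN_PROCESS` and either `PRIO_DARWIN_BG` (demote) or 0 (clear), and it uses Python’s `os.setpriority`, which exposes those `PRIO_DARWIN_*` constants only on macOS with Python 3.12 or later. `set_darwin_background` is a hypothetical helper name.

```python
import os
import sys


def set_darwin_background(pid: int, background: bool) -> None:
    """Set or clear the Darwin background policy for a whole process.

    Assumed to mirror `taskpolicy -b -p PID` (background=True) and
    `taskpolicy -B -p PID` (background=False). Needs macOS and Python 3.12+,
    where the os module exposes the PRIO_DARWIN_* constants.
    """
    which = getattr(os, "PRIO_DARWIN_PROCESS", None)
    bg_prio = getattr(os, "PRIO_DARWIN_BG", None)
    if sys.platform != "darwin" or which is None or bg_prio is None:
        raise NotImplementedError("PRIO_DARWIN_* requires macOS and Python 3.12+")
    # PRIO_DARWIN_BG applies the background policy; 0 removes it.
    # os.setpriority raises OSError (e.g. EPERM) if the change isn't permitted.
    os.setpriority(which, pid, bg_prio if background else 0)
```

Called as `set_darwin_background(567, False)`, this would be expected to behave like `taskpolicy -B -p 567`, and so, on the evidence here, wouldn’t give a process confined to the E cluster access to P cores.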

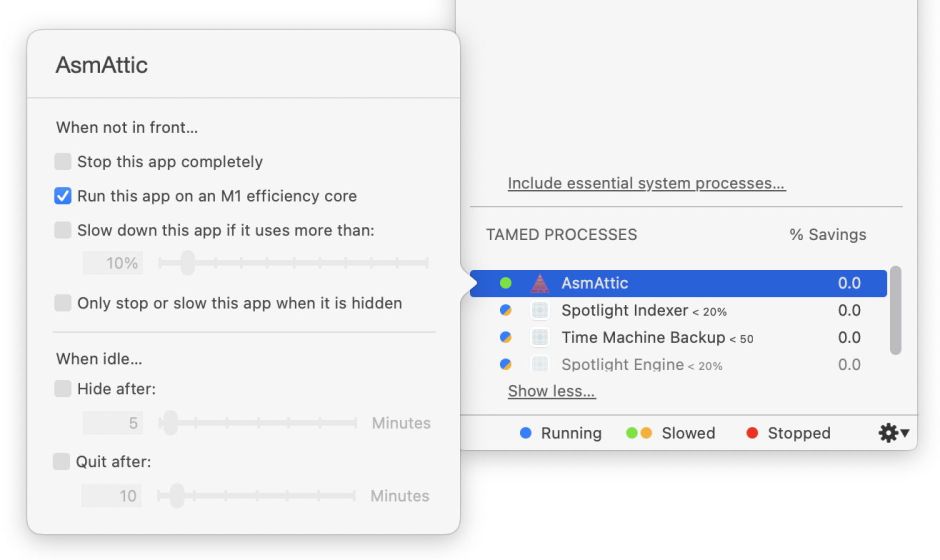

Jon Gotow of St. Clair Software has added an experimental feature using setpriority() to his App Tamer utility, and confirmed this phenomenon. While setpriority() can be used to demote processes to use only E cores, it can’t promote those already confined to E cores to have access to P cores as well.

What might at first appear more puzzling is that taskpolicy and setpriority() can repromote processes and threads that they have demoted. If a process is normally set to run at high QoS, so having access to both E and P cores, and is then demoted to run on E cores alone, it can be promoted back to have access to both core types again. This implies that taskpolicy and setpriority() work not by changing QoS, but by acting directly on which cores can be used.
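That interpretation can be expressed as a toy model in which QoS and an externally applied restriction (a "clamp") are independent: the clamp can narrow the clusters that QoS allows, and removing it restores them, but it can never widen them. This is a hypothetical Python sketch of the observed behaviour, not macOS’s scheduler; `ModelThread`, `demote` and `promote` are illustrative names.

```python
from dataclasses import dataclass

BACKGROUND_QOS = 9  # lowest QoS: E cluster only


@dataclass
class ModelThread:
    """Hypothetical model: QoS and the external clamp are independent fields."""
    qos: int
    clamped: bool = False  # True after a demotion such as `taskpolicy -b`

    def allowed_clusters(self) -> set:
        # What the thread's QoS permits on its own.
        by_qos = {"E"} if self.qos <= BACKGROUND_QOS else {"E", "P"}
        # The clamp can only narrow that set; removing it never widens it
        # beyond what QoS already allows.
        return (by_qos & {"E"}) if self.clamped else by_qos


def demote(t: ModelThread) -> None:
    """Model of taskpolicy -b / setpriority(): restrict to E cores, QoS unchanged."""
    t.clamped = True


def promote(t: ModelThread) -> None:
    """Model of taskpolicy -B: remove the clamp, QoS still unchanged."""
    t.clamped = False


high, low = ModelThread(qos=33), ModelThread(qos=9)
demote(high)
print(sorted(high.allowed_clusters()))  # ['E']
promote(high)
print(sorted(high.allowed_clusters()))  # ['E', 'P']
promote(low)
print(sorted(low.allowed_clusters()))   # ['E']
```

Because promotion only removes the clamp, a high-QoS thread regains P cores, while a QoS 9 thread stays on E cores however it is "promoted".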

Methods

To investigate this, I modified my AsmAttic test utility so that it can manage two thread queues, one at minimum QoS (9, background) and the other at maximum (33). A new option uses these to alternate test threads between those two extreme QoS values.
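The alternation amounts to splitting threads between the two queues by thread number, with odd-numbered threads at QoS 9 and even-numbered ones at QoS 33, as in the tests reported here. AsmAttic itself isn’t written in Python and its code isn’t shown here, so this is only a hypothetical sketch of the assignment; `qos_for_thread` and `assign_queues` are illustrative names.

```python
BACKGROUND_QOS = 9         # minimum QoS: E cluster only
USER_INTERACTIVE_QOS = 33  # maximum QoS: E or P clusters


def qos_for_thread(number: int) -> int:
    """Odd-numbered test threads go to the low-QoS queue, even ones to the high."""
    return BACKGROUND_QOS if number % 2 == 1 else USER_INTERACTIVE_QOS


def assign_queues(count: int) -> dict:
    """Split thread numbers 1..count between the two queues, keyed by QoS."""
    queues = {BACKGROUND_QOS: [], USER_INTERACTIVE_QOS: []}
    for n in range(1, count + 1):
        queues[qos_for_thread(n)].append(n)
    return queues


print(assign_queues(6))  # {9: [1, 3, 5], 33: [2, 4, 6]}
```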

Previously, AsmAttic ran a single thread queue at one user-selectable QoS. It creates up to a hundred identical threads and adds them to that queue. As you’d expect, threads are run in the order they’re created and, allowing for some running more slowly when allocated to the E cluster, they normally complete in a similar order, with batch effects.

Results

Alternating test threads between the two QoS values changes this. With modest numbers of threads, odd-numbered ones run at the lowest QoS and even-numbered ones at the highest, all the high-QoS threads, run on P cores, complete first, in roughly the order in which they were added to the queue.

In a small demonstration, three QoS 33 threads, numbers 6, 4 and 2, completed in an elapsed time of less than 0.66 seconds, followed by QoS 9 threads 1, 3 and 5 after 1.7 and 3.6 seconds.

Running the command

taskpolicy -B -p 567

against AsmAttic’s PID didn’t change the results at all, with the QoS 9 threads still running more slowly on the E cluster. However, as expected, running

taskpolicy -b -p 567

confined all threads to the E cluster, as shown in the CPU History window, and confirmed using powermetrics.

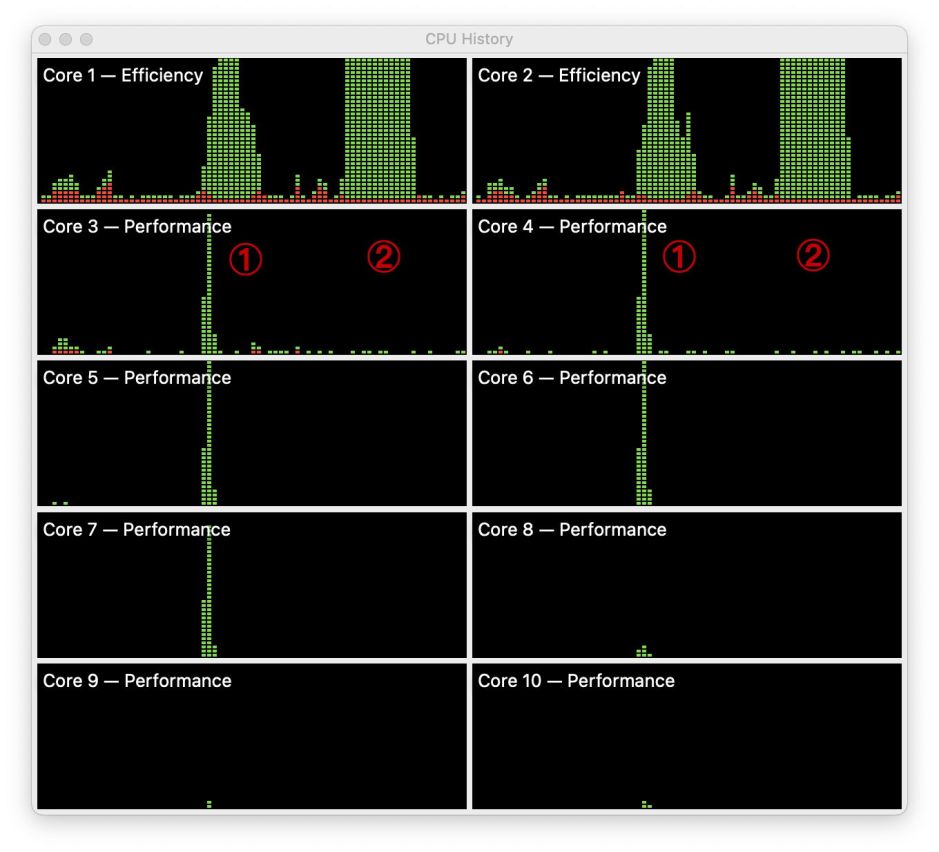

The first run, marked 1, shows these tests being run without taskpolicy. Short peaks on both P clusters reflect the threads run from the high-QoS queue, and a prolonged peak on the two E cores at the top results from the odd-numbered threads running on the E cluster.

Marked 2 is the effect of running taskpolicy -b on AsmAttic’s PID. This successfully demotes all threads, from both queues, to run on the E cluster alone. What it doesn’t do, though, is alter the order in which the threads complete: they are still run in the sequence determined by their original QoS. Furthermore, running taskpolicy -B returns the high-QoS threads to the P cores, but doesn’t affect the low-QoS threads.

The most likely explanation is that, on M1 chips, taskpolicy and setpriority() don’t affect QoS, and (currently) can only demote processes and threads that, on the basis of their QoS, could be assigned to either core type, so that they run on E cores alone. This demonstrates a separation between dispatching according to QoS in Grand Central Dispatch (GCD) and allocation to core type. Threads with the lowest QoS are indelibly marked to run on E cores; those with higher QoS are normally marked as able to run on either core type, but can be restricted to the E cluster alone.
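This two-stage explanation can be illustrated with a deliberately simplified model: stage one orders work by QoS, stage two picks a cluster, and only stage two looks at the external restriction. The ordering rule (higher QoS first, then creation order) is an assumption for illustration, and the observed completion order only roughly follows creation order within a QoS level. The code is a hypothetical Python sketch, not GCD or the macOS scheduler; `Work`, `dispatch_order` and `allocate` are illustrative names.

```python
from typing import NamedTuple

BACKGROUND_QOS = 9


class Work(NamedTuple):
    number: int  # order of creation
    qos: int


def dispatch_order(items: list) -> list:
    """Stage 1 (standing in for GCD): higher QoS first, then creation order."""
    return [w.number for w in sorted(items, key=lambda w: (-w.qos, w.number))]


def allocate(w: Work, clamped: bool) -> str:
    """Stage 2: choose a cluster; the external clamp only ever narrows the choice."""
    if w.qos <= BACKGROUND_QOS or clamped:
        return "E"
    return "P"  # simplification: eligible threads are assumed to land on P cores


# Odd-numbered threads at QoS 9, even-numbered at QoS 33.
items = [Work(n, 9 if n % 2 else 33) for n in range(1, 7)]
for clamped in (False, True):
    order = dispatch_order(items)  # unaffected by the clamp
    clusters = [allocate(items[n - 1], clamped) for n in order]
    print(clamped, order, clusters)
```

In this model the clamp changes the clusters used but leaves the ordering untouched, which is the separation suggested by the results with taskpolicy -b.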

Conclusions

On current M1 series chips:

- external controls, in taskpolicy and setpriority(), appear unable to change QoS or the dispatching of threads by GCD;

- those external controls can limit threads, which on the basis of QoS could be allocated to either core type, to just E cores;

- those external controls cannot promote threads which, on the basis of QoS, can only be allocated to E cores, so that they can be run on either core type;

- thus threads originally designated for E cores alone can’t be run on P cores;

- promotion of background processes and threads so they can be run more quickly using P cores isn’t currently possible in macOS;

- dispatching threads according to QoS and their allocation to clusters are performed separately in macOS.