A couple of days ago, I explained how macOS controls core allocation and performance of code running on M1 Macs, based on Quality of Service (QoS) set by the app. You might assume that this is all academic, as what an app sets is what you get. Although that’s true at present, an increasing number of apps are giving the user control over how ‘fast’ they should run time-consuming tasks. This article looks at what can be done, and how those E and P cores can work for you.

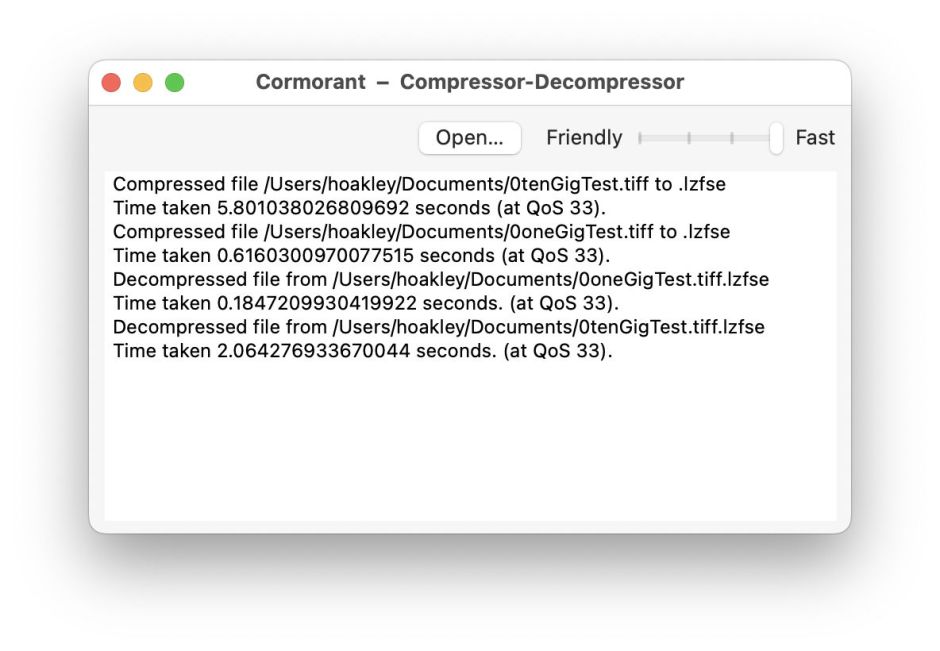

The app I use here is a compression/decompression utility, an ideal example of where speed control can be valuable. Let’s say you need to compress something chunky for distribution, 10-20 GB in all. Knowing this is going to take longer than the twinkling of an eye, you want to keep working as much as you can while it runs. Using my free app Cormorant, you can opt for any of four different speeds.

At its fastest, you can expect compression on your Mac Studio Max to run at around 1.6 GB/s, while at the friendliest setting it’ll run at about an eighth of that, at 0.2 GB/s. However, the penalty for the high speed is that other apps you’re working with will also slow down, whereas at the slower setting you won’t even notice compression occurring in the background. If you’re doing this on your M1 MacBook Pro, you’ll also be interested to know that fastest compression requires chip power of just under 25 W (still amazing compared to Intel Macs), while the friendly option only needs 2.6 W.
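
To put those figures side by side, here's a rough back-of-envelope calculation in Python. It assumes that the throughput and chip power quoted above apply to the same run and stay constant throughout, which is a simplification. The 20 GB size is just the upper end of the example, and the function name is my own.

```python
def compression_cost(size_gb, throughput_gb_s, power_w):
    """Return (seconds, joules) for compressing size_gb at a steady rate."""
    seconds = size_gb / throughput_gb_s
    joules = power_w * seconds
    return seconds, joules

# Figures quoted above; 25 W is rounded up from "just under 25 W".
fast = compression_cost(20, 1.6, 25.0)
friendly = compression_cost(20, 0.2, 2.6)

print(f"Fastest:  {fast[0]:.1f} s, about {fast[1]:.0f} J")
print(f"Friendly: {friendly[0]:.1f} s, about {friendly[1]:.0f} J")
```

Under those assumptions, the fastest setting finishes in about 12.5 seconds and the friendliest in about 100 seconds, yet the energy used is of the same order, around 310 J against 260 J. What you're choosing between is mainly speed and how much the compression gets in your way.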

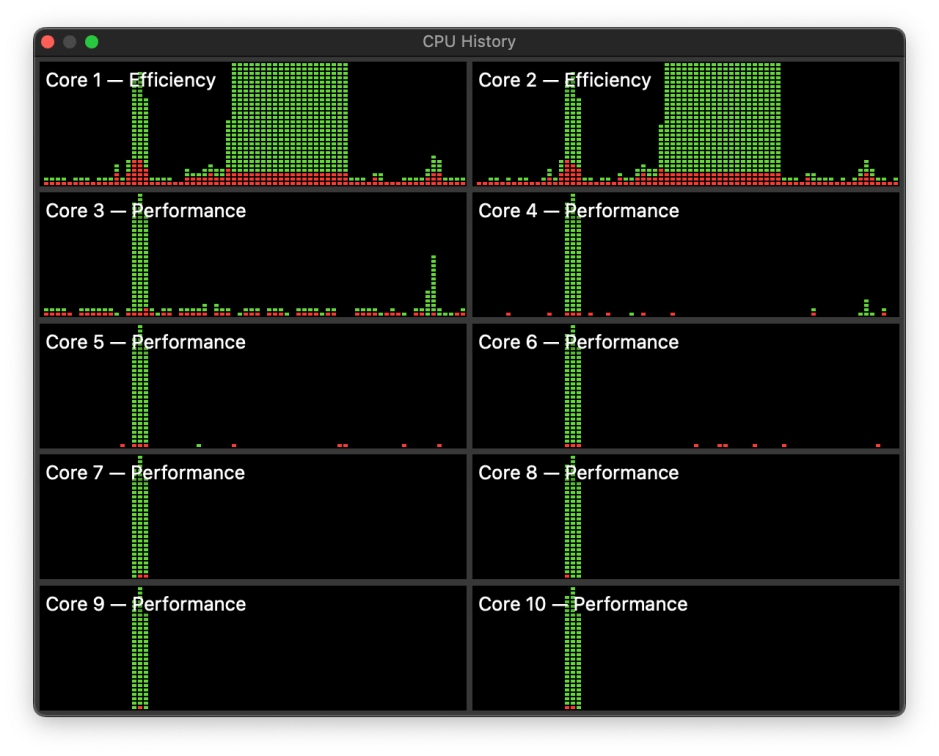

You can see what happens in Activity Monitor’s CPU History window.

This shows two compressions, the first performed at the fastest setting and running very quickly on all ten cores. Shortly after that, the compression was repeated, this time with the speed set to Friendly. That put no load at all on the eight P cores, but pushed the two E cores to 100%. As the GUI and most apps run largely on the P cores, you wouldn’t notice the second compression occurring at all.

Looking in detail at what’s going on in the cores at the time is even more revealing. Using the Friendly (minimum QoS) setting:

- The two cores in the E cluster are at 100% active residency and maximum frequency of 2064 MHz, which uses 656 mW.

- The two clusters totalling eight P cores are idle almost 100% of the time, at their minimum frequency of 600 MHz, and using a combined total of only 54 mW.

When compressing at the Fast setting:

- The two cores in the E cluster are at 83%-87% active residency, maximum frequency, and using 595 mW.

- The eight P cores in their two clusters are all at 99%, maximum frequency, and using just over 19 W in total.
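
Adding up the cluster figures in those two lists gives a feel for the difference in CPU power. This is only a sketch: it treats "just over 19 W" as exactly 19 W, so the Fast total is a lower bound, and the variable names are mine.

```python
# Cluster power figures from the measurements above, in milliwatts.
friendly_mw = {"E": 656, "P": 54}
fast_mw = {"E": 595, "P": 19_000}  # "just over 19 W", so treated as a floor

friendly_total = sum(friendly_mw.values())
fast_total = sum(fast_mw.values())
ratio = fast_total / friendly_total

print(f"Friendly: {friendly_total} mW across all CPU clusters")
print(f"Fast:     {fast_total} mW across all CPU clusters")
print(f"Fast draws roughly {ratio:.0f} times the CPU power")
```

That works out at about 0.7 W for Friendly against at least 19.6 W for Fast, nearly 28 times as much, with almost all of the difference coming from the P cores.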

Cormorant uses AppleArchive, a relatively recent feature of macOS which provides high-performance compression and decompression. It can run ten threads simultaneously, making it easier to spread its work over all the cores in M1 series chips. Most importantly, Cormorant gives the user direct control over the Quality of Service of its worker threads, to make best use of asymmetric multiprocessing (or heterogeneous computing, if you prefer) in Apple Silicon.

While many of us are happy to leave lengthy compressions running in the background, when we want to decompress an archive, we’re usually in a hurry. The Mac Studio Max, running at Fast decompression, achieves 5.5 GB/s, compared with an Intel 8-core Xeon W which delivers less than half of that, 2.3 GB/s.

Fast and Friendly settings do still have an effect on Intel Macs, but nowhere near the same as on an M1 chip. On Intel, the ratio of the time taken at Friendly to the time taken at Fast is typically 1.8, for both compression and decompression, making Fast slightly less than twice as fast as Friendly, with little difference in power. The same ratios for the M1 Max in a Studio are between 4 and 14, reflecting its superior performance on Fast, and amazing economy on Friendly.
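
To see what those ratios mean in practice, here's a small illustration in Python. The 10 second task is hypothetical rather than a measurement, and the function name is my own; only the ratios and throughputs come from the figures given in this article.

```python
def friendly_time(fast_seconds, ratio):
    """Estimate time at Friendly from time at Fast and the Friendly/Fast ratio."""
    return fast_seconds * ratio

fast_seconds = 10.0  # hypothetical task, not a measurement

intel = friendly_time(fast_seconds, 1.8)
m1_low = friendly_time(fast_seconds, 4)
m1_high = friendly_time(fast_seconds, 14)

# Time ratio for M1 Max compression, from the throughputs quoted earlier:
# Friendly time / Fast time equals Fast throughput / Friendly throughput.
m1_compress_ratio = 1.6 / 0.2

print(f"Intel:  {intel:.0f} s at Friendly")
print(f"M1 Max: {m1_low:.0f} to {m1_high:.0f} s at Friendly")
print(f"M1 Max compression ratio: {m1_compress_ratio:.1f}")
```

A task taking 10 seconds at Fast would take about 18 seconds at Friendly on the Intel Mac, but anywhere from 40 to 140 seconds on the M1 Max. The compression throughputs given earlier, 1.6 and 0.2 GB/s, correspond to a ratio of 8, within that range.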

Relatively few apps give you control over QoS in this way. Recent versions of Carbon Copy Cloner do, when it’s making backups, and I expect that other apps will offer this feature as demand increases from M1 users.