Should you wish to run Asahi Linux, when it’s ready, on your M1 series Mac, you’ll discover that you control which cores your code runs on. macOS isn’t so liberal, and for the moment allows third-party code a choice of two strategies, according to the Quality of Service (QoS) set. This article describes a third strategy which macOS itself uses, but apparently doesn’t offer to third parties.

There are now three configurations of CPU cores available in M1 series chips:

- original M1, with 4 Efficiency (E) and 4 Performance (P) cores, as in the MacBook Air, MacBook Pro 13-inch, iMac and Mac mini;

- M1 Pro and Max, with 2 E and 8 P cores, as in the MacBook Pro 14- and 16-inch, and Mac Studio (Max);

- M1 Ultra, with 4 E and 16 P cores, as in the Ultra variant of the Mac Studio.

Some MacBook Pro 14-inch notebooks have a reduced M1 Pro chip with only 6 P cores instead of 8.

There are four QoS settings that determine which cores are available to run threads. The lowest ‘background’ QoS constrains threads to available E cores; the other three levels allow threads to be scheduled on either P or E cores, although P cores are used in preference. Available controls can demote threads with higher QoS to constrain them to E cores, but it’s currently not possible to promote threads from the lowest QoS to run on P cores.
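For third-party code, those QoS settings are normally chosen when work is dispatched. As a minimal sketch, assuming Swift and Grand Central Dispatch rather than any particular app, the following runs the same loop once at each of the four levels. The names `busyWork` and `levels` are invented for this illustration. On an M1 series Mac the background task should be the one confined to the E cores, but the code only reports elapsed time, and doesn’t itself check which cores were used.

```swift
import Foundation

// The four QoS levels discussed above, lowest first.
// `levels` and `busyWork` are hypothetical names for this sketch.
let levels: [(name: String, qos: DispatchQoS.QoSClass)] = [
    ("background", .background),
    ("utility", .utility),
    ("userInitiated", .userInitiated),
    ("userInteractive", .userInteractive),
]

/// A simple CPU-bound loop: sums the square roots of 1...iterations.
func busyWork(iterations: Int) -> Double {
    var total = 0.0
    for i in 1...iterations {
        total += Double(i).squareRoot()
    }
    return total
}

let group = DispatchGroup()
for level in levels {
    // The QoS is attached to the queue; the scheduler then decides
    // which cores the work is allowed to run on.
    DispatchQueue.global(qos: level.qos).async(group: group) {
        let start = Date()
        _ = busyWork(iterations: 5_000_000)
        let elapsed = Date().timeIntervalSince(start)
        print("\(level.name): \((elapsed * 1000).rounded()) ms")
    }
}
group.wait()
```

Nothing here lets the code ask for P cores only, or for one particular core: the only lever is the QoS itself.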

macOS adopts a strategy where most, if not all, of its background tasks are run at lowest QoS. These include automatic Time Machine backups and Spotlight index maintenance. This also applies to compression and decompression performed by Archive Utility: for example, if you download a copy of Xcode in xip format, decompressing that takes a long time as the code is constrained to the E cores, and there’s no way to change that.

There are, though, two situations in which code appears to run exclusively on a single P core. The first is during the boot process: before the kernel initialises and runs the other cores, code runs on just a single active P core. The second is when ‘preparing’ a downloaded macOS update before starting the installation process.

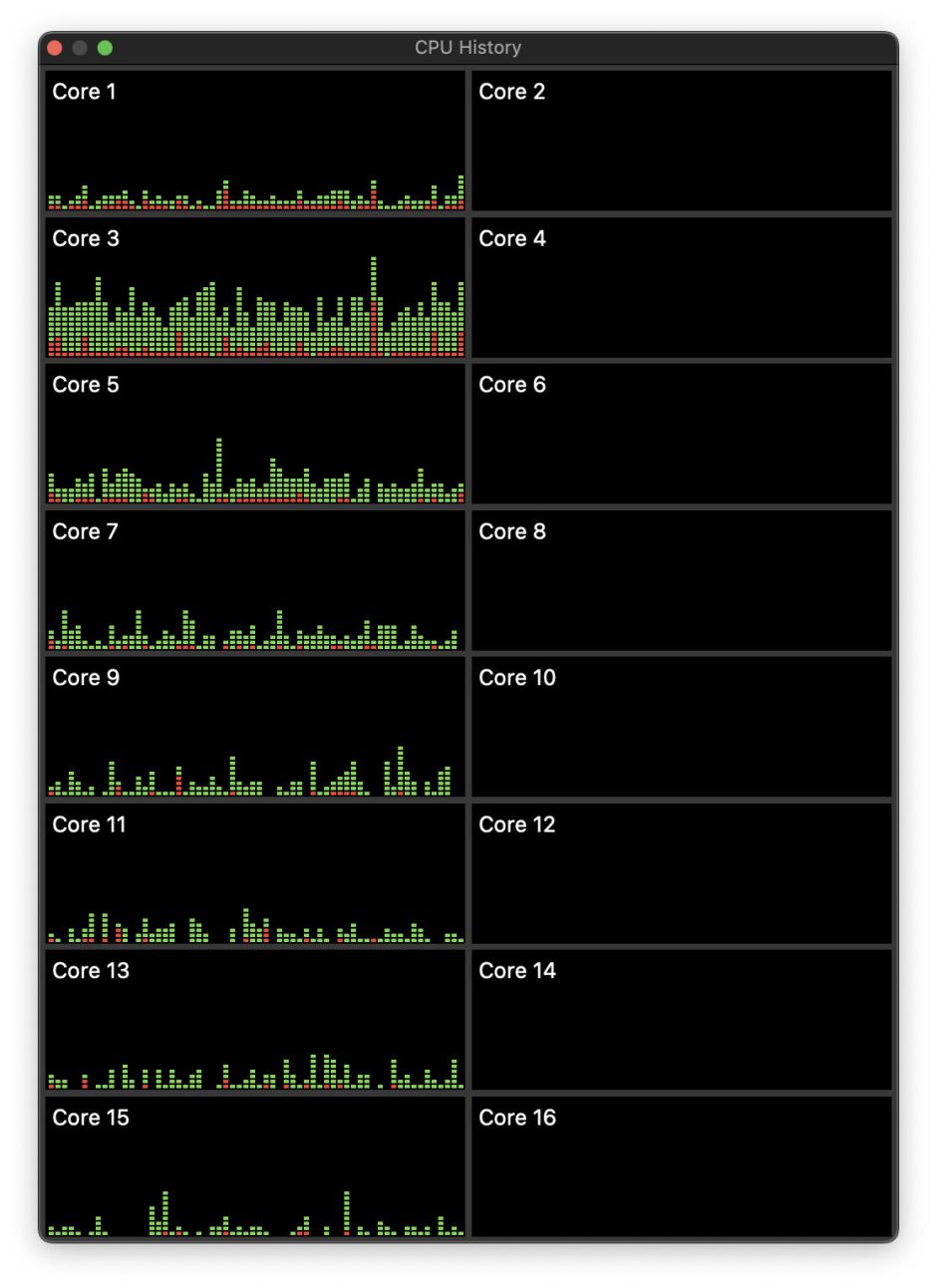

On an Intel Mac, this phase of preparing a macOS update for installation by com.apple.MobileSoftwareUpdate typically takes around 30 minutes at 100% CPU, representing one core-worth of active residency in 5 threads spread fairly evenly over most available cores. That’s shown in the CPU History window from Activity Monitor below.

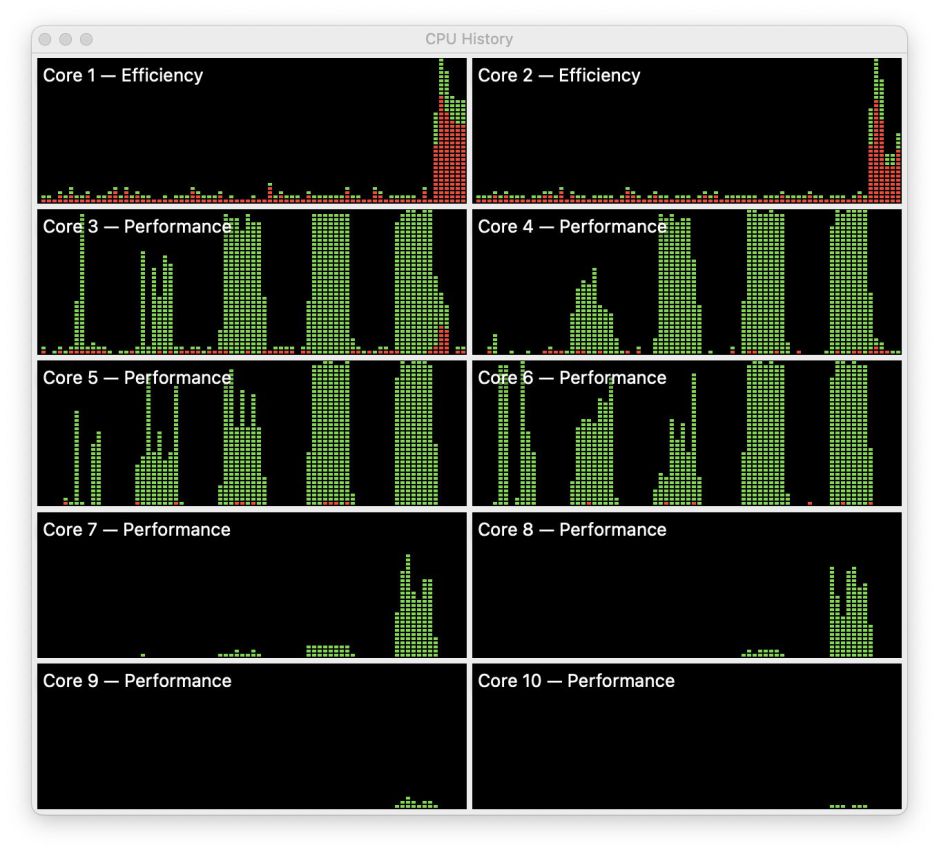

On an M1 Pro chip, the same 5 threads are also given one core-worth of active residency, indicated as 100% CPU, but are confined to a single P core, the first in the first of the two P core clusters (P0, labelled below as Core 3).

This unusual distribution of active residency is sustained throughout the 30 minutes of preparation to install the update. At no time were com.apple.MobileSoftwareUpdate threads seen to spill over to other P cores within that cluster.

Compare this with running increasing numbers of test threads at the lowest of the three QoS levels with access to both P and E cores. Five tests with increasing numbers of loading threads are shown above, starting with a single test thread, reported at 100% CPU, at the left. Far from being confined to a single P core, each test with 1-5 threads is run on multiple P cores. With 1-4 threads, active occupancy is almost entirely confined to the four cores in the first P cluster, and only spills over to the second when there are 5 loading threads. At no time did macOS limit threads to just one P core, in the way that it did during preparation of the macOS update.
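A comparison of this kind can be approximated with a few lines of Swift. This is only a sketch under stated assumptions, and not the test harness used for the results above: `load`, `runTest` and `secondsPerTest` are hypothetical names, and the loop is an arbitrary floating-point workload. Each step starts one more thread at utility QoS, the lowest level that still has access to both P and E cores, so that the spread across cores can be watched in Activity Monitor’s CPU History window.

```swift
import Foundation

/// Spin a CPU-bound loop for roughly `seconds`; returns the number of
/// outer iterations completed. (Hypothetical workload for this sketch.)
func load(for seconds: TimeInterval) -> Int {
    let deadline = Date().addingTimeInterval(seconds)
    var count = 0
    var x = 1.0
    while Date() < deadline {
        for _ in 0..<10_000 {
            x = (x * 1.000001).squareRoot() + 1.0
        }
        count += 1
    }
    // Use x in the result so the inner loop can't be optimised away.
    return x > 0 ? count : 0
}

/// Run `n` loading threads at utility QoS and wait for them all to finish.
/// Returns the elapsed wall-clock time in seconds.
func runTest(threads n: Int, seconds: TimeInterval) -> TimeInterval {
    let group = DispatchGroup()
    let start = Date()
    for _ in 0..<n {
        group.enter()
        let thread = Thread {
            _ = load(for: seconds)
            group.leave()
        }
        thread.qualityOfService = .utility
        thread.start()
    }
    group.wait()
    return Date().timeIntervalSince(start)
}

// Raise this to make each step easier to see in CPU History.
let secondsPerTest: TimeInterval = 2

for n in 1...5 {
    let elapsed = runTest(threads: n, seconds: secondsPerTest)
    print("\(n) thread(s): \((elapsed * 10).rounded() / 10) s")
}
```

As with any third-party code, this can only set the QoS of its threads; where macOS then places them is left to the scheduler.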

Apple may have adopted this exceptional strategy to ensure that E cores aren’t used, leaving them free for concurrent background activities such as completing a backup. By confining these preparations to a single P core, the others are left largely idling and able to support relatively normal user activity during this period.

If those are good reasons for this strategy to be adopted by macOS, they could equally apply to third-party software, for example during lengthy CPU-intensive tasks. What’s sauce for the goose should also be sauce for the gander: Apple needs to consider giving third-party developers the same flexibility that it enjoys.