I was asked why some PDFs would only display properly when opened in Adobe Acrobat, not in Preview or any other third-party PDF viewer on a Mac. Surely PDF is a standard, so why can only one vendor’s products handle those documents properly?

The answer to that question is, as you might expect, not a one-liner. But it brings with it some important lessons about open standards and the internals of macOS. There isn’t any snappy summary, so please bear with me.

In the beginning, computer standards seemed simple. Plain ASCII text can largely be defined on a single page, but doesn’t get you very far when you want to distribute an electronic book. By the time that Adobe handed over its PDF format definition for general use as an ISO standard, the specification had grown to 750 or so pages which apparently covered almost everything under the sun, and it grew further to form ISO 32000-1.

That proved to be just a baseline, though. Three important areas needed to be further addressed: archival and pre-press use, and accessibility. Taking perhaps the simplest of those, the first, it was clear that changes were needed to ensure that, a century from now, PDFs stored in digital repositories remained as usable as they are today.

The generic standard allows for external components such as fonts and other content to be referred to but not embedded. If every PDF that we used today had to include all such data within it, the format would be so inefficient that it couldn’t be universal as intended. But relying on future users to be able to find a suitable copy of each font used, for example, would also cause problems. So separate archival standards for PDF/A evolved. Among other things, they require that each compliant PDF stands alone.

In this case, any PDF rendering engine able to cope with generic PDF isn’t affected. Open PDF/A documents in Preview, and they look absolutely fine. But if you want to convert an existing document to standard-compliant PDF/A, you can’t simply use the output of that same engine. There needs to be another layer which pulls in any external content and embeds it, to ensure that the PDF document stands alone. That means complying with another four-part standard, ISO 19005, which defines the sub-variants PDF/A-1, A-2, A-3 and, later this year, A-4.
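To make the idea of a PDF that "stands alone" a little more concrete, here is a minimal sketch in Python that looks for fonts which are referred to but not embedded. It rests on assumptions of my own: the function name `unembedded_font_names` is hypothetical, and it only sees font descriptors that are stored as plain text in the file, so it will miss any held inside compressed object streams. It is a rough heuristic, not a PDF/A validator.

```python
import re

def unembedded_font_names(pdf_bytes: bytes) -> list[str]:
    """Return names of fonts whose descriptor carries no embedded font program.

    Heuristic only: descriptors inside compressed object streams are not seen.
    """
    names = []
    # Walk each 'N G obj ... endobj' block found in the raw bytes.
    for body in re.findall(rb"\d+\s+\d+\s+obj(.*?)endobj", pdf_bytes, re.DOTALL):
        # Only font descriptor dictionaries are of interest here.
        if not re.search(rb"/Type\s*/FontDescriptor", body):
            continue
        # /FontFile, /FontFile2 and /FontFile3 all mark an embedded font program.
        if b"/FontFile" in body:
            continue
        match = re.search(rb"/FontName\s*/([^\s/<>\[\]()]+)", body)
        names.append(match.group(1).decode("latin-1") if match else "(unnamed)")
    return names

# A hand-written fragment with one unembedded and one embedded font.
sample = (
    b"1 0 obj\n<< /Type /FontDescriptor /FontName /Helvetica /Flags 32 >>\nendobj\n"
    b"2 0 obj\n<< /Type /FontDescriptor /FontName /ABCDEF+Minion "
    b"/FontFile2 9 0 R >>\nendobj\n"
)
print(unembedded_font_names(sample))
```

A converter to PDF/A has to do far more than this, but the check shows the kind of dependency on outside resources that the archival standards are intended to remove.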

So it goes on. PDF/X, for pre-press, is even more complex in order to cater for spot colour, industrial print processes, and more. That’s another few hundred pages of ISO 15930 in seven parts, which need to be implemented on top of the generic PDF engine, to ensure that any PDF/X document is correctly rendered according to those standards. Then there’s PDF/UA in ISO 14289, which is growing in importance in enabling users to have the text within a suitably modified PDF read aloud to them, and more.

There’s often no simple way to convert a generic PDF to a different standard. For PDF/X, images may need to have colour management and ICC Profiles provided, and each page needs an enveloping MediaBox and BleedBox. Creating a PDF/UA document is exceedingly tedious manual work, as all its text content has to be tagged and assembled into reading order, and each image supplied with alternate text, for example.

Expecting general-purpose PDF viewers like Preview to know all the rules of these different standards and to cater for them is simply unrealistic, and unnecessary. Generating these special-purpose PDF variants is for specialist apps, such as QuarkXPress, Adobe InDesign and Acrobat Pro. Unless you work in a publishing environment, you should never come across a PDF/X document.

PDF/UA faces an uphill struggle. Whilst well-funded archives might be able to afford the software and labour to tag and flow regular PDF documents to improve their accessibility, these form only a small proportion of those going into storage. Until these costs fall substantially, PDF/UA is only going to have very limited impact.

What is more worrying is that PDF formats aren’t designed to be robust in the face of file corruption. It’s not difficult to change a few bytes in a regular or PDF/A document and make it totally unusable.
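One reason for that fragility is that a classic PDF file locates its objects by byte offset: the end of the file holds a `startxref` value pointing at the cross-reference section, which in turn lists the offset of each object. The sketch below, in Python, builds a tiny skeleton file (not a displayable document) and shows that inserting just three bytes near the start leaves that pointer aiming at the wrong place. The helper names `build_skeleton_pdf` and `xref_pointer_is_valid` are my own, chosen for illustration.

```python
import re

def build_skeleton_pdf() -> bytes:
    """Assemble a minimal header, one object, a cross-reference table and trailer."""
    body = b"%PDF-1.4\n1 0 obj\n<< /Type /Catalog >>\nendobj\n"
    xref_offset = len(body)  # the cross-reference table starts right after the body
    xref = b"xref\n0 2\n0000000000 65535 f \n0000000009 00000 n \n"
    trailer = (
        b"trailer\n<< /Size 2 /Root 1 0 R >>\nstartxref\n%d\n%%%%EOF\n" % xref_offset
    )
    return body + xref + trailer

def xref_pointer_is_valid(pdf_bytes: bytes) -> bool:
    """Check that the trailing startxref offset lands on a cross-reference section."""
    matches = re.findall(rb"startxref\s+(\d+)", pdf_bytes[-1024:])
    if not matches:
        return False
    offset = int(matches[-1])
    target = pdf_bytes[offset:offset + 32]
    # Older files have the 'xref' keyword here; newer ones an 'N G obj' xref stream.
    return target.startswith(b"xref") or re.match(rb"\d+\s+\d+\s+obj", target) is not None

good = build_skeleton_pdf()
# Insert three stray bytes just after the header line, shifting everything that follows.
damaged = good[:9] + b"abc" + good[9:]

print(xref_pointer_is_valid(good))     # True
print(xref_pointer_is_valid(damaged))  # False
```

Every object offset recorded in the table is displaced in the same way, so a reader that relies on those offsets alone has nothing left to work from.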

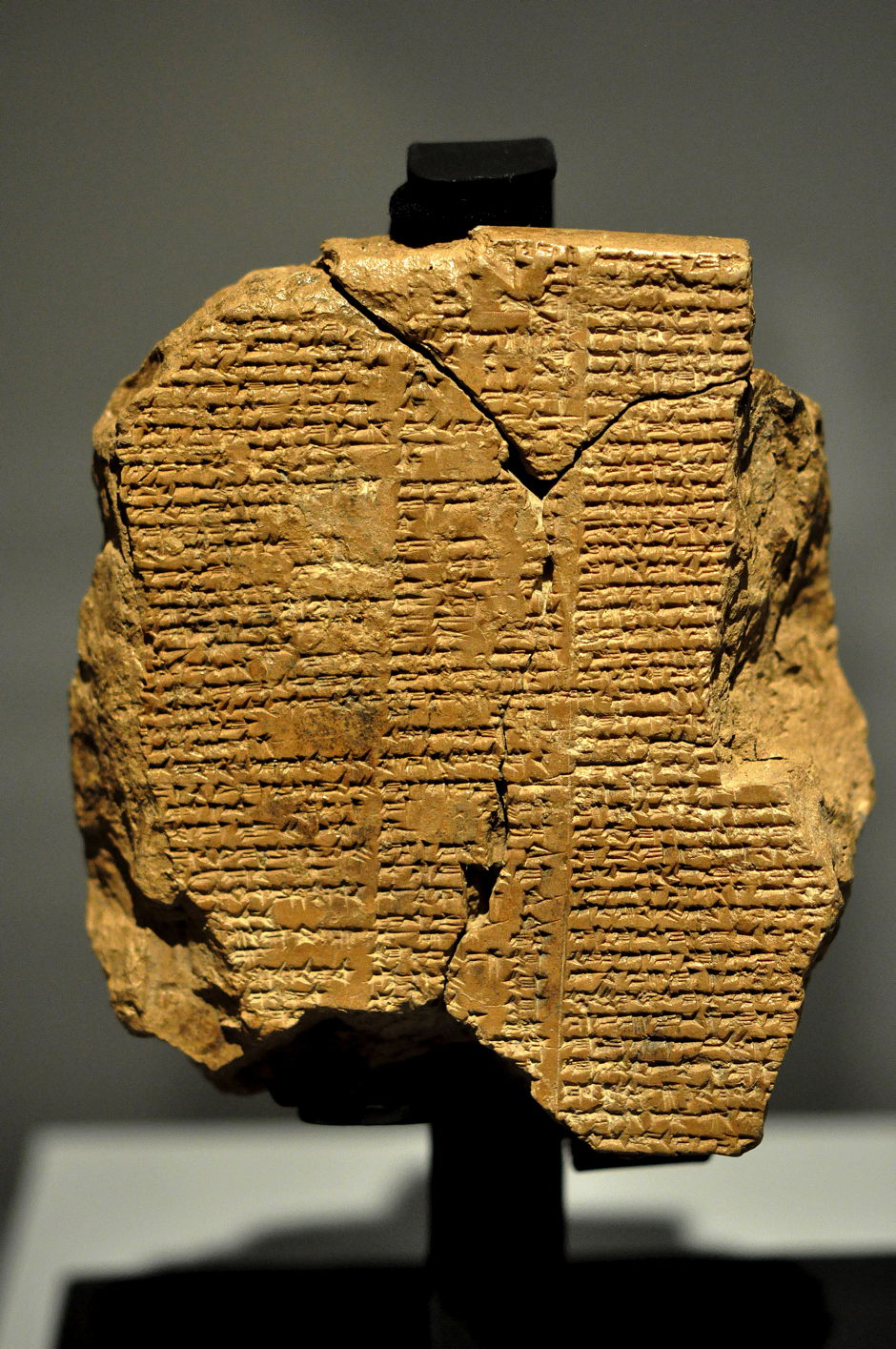

History tells us that crucial records, here the text of the oldest epic to have been committed to writing, often get damaged, in this case over a period of around four millennia. In the mere quarter century that PDF has been around, countless users have suffered file corruption and errors which have made it impossible to open their damaged PDF files.

Users have access to tools which can create, edit, annotate and otherwise work with all variants of PDF, but as far as I can tell, none has any facility to repair a damaged PDF, nor to recover as much of its content as possible. Indeed, in many cases apps simply refuse to open damaged PDFs, or when they can, they offer precious little of the content which remains in the file. There are online services which claim to be able to tackle this, but there are many PDFs of mine that I simply wouldn’t trust to such a site.
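To give a rough idea of what content recovery could involve, here is a sketch in Python of a salvage pass that ignores the file's own index and instead scans the raw bytes for intact `N G obj ... endobj` blocks. The function name `salvage_objects` is hypothetical, and the approach is an assumption of mine rather than a description of any existing tool: it can be fooled by binary stream data that happens to resemble an object header, and it does nothing to decode or reassemble what it finds.

```python
import re

# An object header looks like '12 0 obj': object number, generation, keyword.
HEADER = re.compile(rb"(\d+)\s+(\d+)\s+obj\b")

def salvage_objects(pdf_bytes: bytes) -> dict[tuple[int, int], bytes]:
    """Map (object number, generation) to the raw body of each intact object."""
    found = {}
    headers = list(HEADER.finditer(pdf_bytes))
    for i, header in enumerate(headers):
        # An object must close before the next header begins, or it is damaged.
        limit = headers[i + 1].start() if i + 1 < len(headers) else len(pdf_bytes)
        end = pdf_bytes.find(b"endobj", header.end(), limit)
        if end == -1:
            continue  # no closing keyword: skip the truncated object
        key = (int(header.group(1)), int(header.group(2)))
        found[key] = pdf_bytes[header.end():end].strip()  # a later copy replaces an earlier one
    return found

# Object 2 has lost its ending; objects 1 and 3 survive.
wreck = (
    b"%PDF-1.4\n1 0 obj\n<< /Type /Catalog >>\nendobj\n"
    b"2 0 obj\n<< /Broken \n3 0 obj\n(hello)\nendobj\ngarbage"
)
for key, body in salvage_objects(wreck).items():
    print(key, body)
```

Turning the surviving objects back into readable pages is a much larger job, but even a listing like this would tell a user how much of a damaged document is still there.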

Having open standards for PDF has been a major milestone in computing. But having so many standards has brought complexity and incompatibility which are affecting portability. Without good maintenance tools, many of the billions of documents which are being put into archives may prove to have been wasted effort.

PDF has come a long way since 1993, and it still has a long way to go.