Of all the immediately practical tasks to which artificial intelligence can be applied, translation is one of the most lucrative.

We should rejoice in the thousands of languages which we have, and work hard to ensure that number does not diminish, but recognise that, wherever a few people gather, chances are that some of them will have different mother tongues. Until someone discovers a real Babel fish, which Douglas Adams wrote into his Hitchhiker’s Guide to the Galaxy as an autotranslation organism, we are stuck with translating using humans.

Except that, after decades of work and tens of billions in investment, we should by now be using machine translation (MT) instead of humans. Yet, as anyone who has played with MT systems such as Google Translate will have discovered, MT still has a very long way to go before it comes close to a decent human translator.

What always surprises me with Google Translate is how illogical and counter-intuitive it is. You don’t have to look for difficult phrases to translate before it starts to behave oddly: it will often do so with very simple input. I am indebted to Language Log for inspiring this little exploration of its quirks, farts, and foibles.

For most common languages, put in a sentence of the form

I am learning English

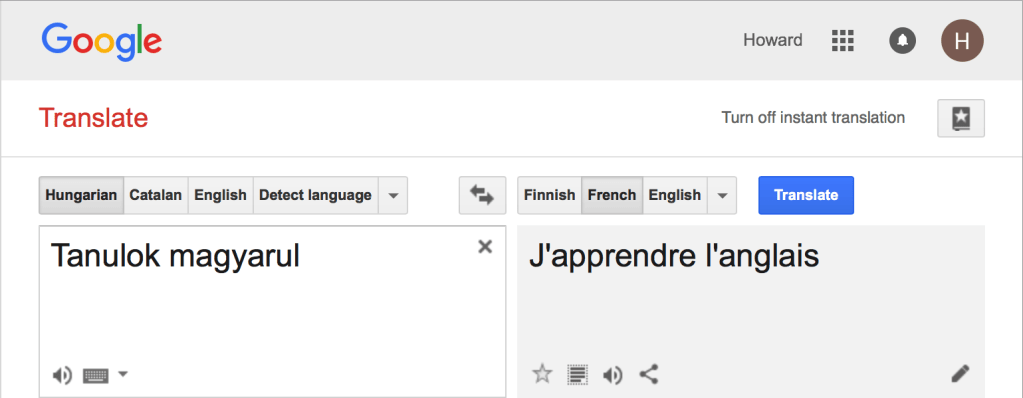

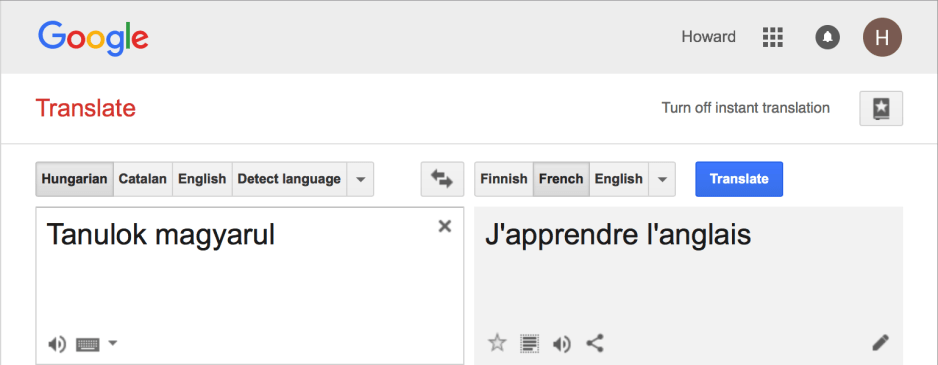

and it translates that correctly into the destination language of your choice. But working with certain languages can elicit strange behaviour. Take the Hungarian “tanulok magyarul”, which means “I am learning Hungarian”. Ask Google to render it into French, and it tells you in good French that I am learning not Hungarian, or even French, but English.

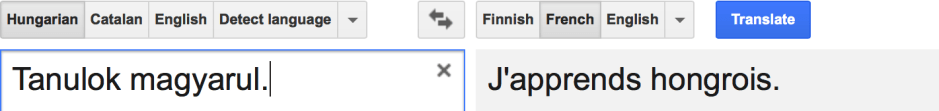

Subtle changes to the input, which make no difference to its meaning, produce odd changes to the output. Put a period at the end, and now it tells you in French that you are learning Hungarian, as it should.

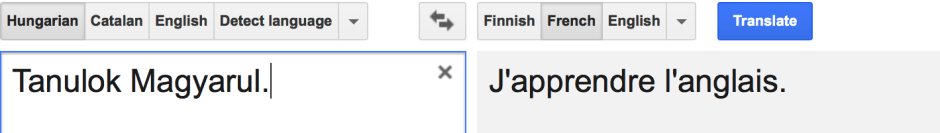

But capitalise the word Magyarul, and it flips back to telling you, in French, that you are learning English.

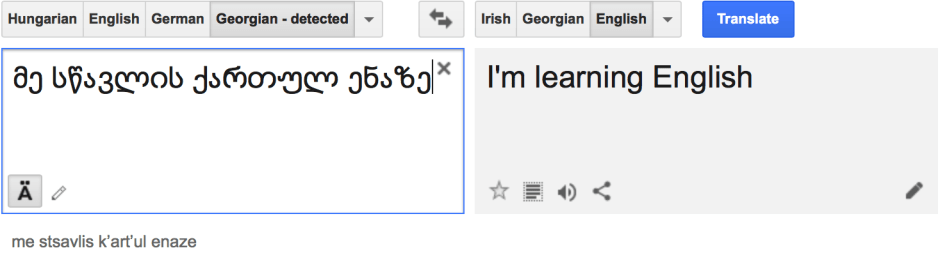
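If you want to repeat this sort of poking more systematically, the perturbations above (add a period, capitalise the last word) are easy to generate. What follows is only a rough sketch: the function names are my own invention, and `translate` is a hypothetical stand-in for whatever MT system you can call, not a real Google Translate API.

```python
from typing import Callable, Dict, Set


def make_variants(sentence: str) -> Dict[str, str]:
    """Build the small, meaning-preserving variants described above."""
    words = sentence.split()
    if not words:
        raise ValueError("sentence must contain at least one word")
    # Capitalise only the final word, e.g. magyarul -> Magyarul.
    capitalised = " ".join(words[:-1] + [words[-1].capitalize()])
    return {
        "original": sentence,
        "trailing_period": sentence + ".",
        "last_word_capitalised": capitalised,
    }


def compare_variants(sentence: str, translate: Callable[[str], str]) -> Dict[str, str]:
    """Run every variant through `translate` (a hypothetical, user-supplied callable)."""
    return {label: translate(text) for label, text in make_variants(sentence).items()}


def distinct_outputs(results: Dict[str, str]) -> Set[str]:
    """Collapse outputs that differ only in case or a trailing period."""
    return {output.rstrip(".").strip().lower() for output in results.values()}
```

If `distinct_outputs(compare_variants("tanulok magyarul", translate))` contains more than one entry, the system has changed its mind about the meaning on the strength of punctuation or capitalisation alone. You still need to read the outputs yourself to judge which, if any, is right.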

I know very little Georgian, but Google Translate seems to produce quirky results there too. Input the Georgian translation of “I am learning the Georgian language”, and it again swaps the language in the output.

Input the English, and it translates it without any language swap.

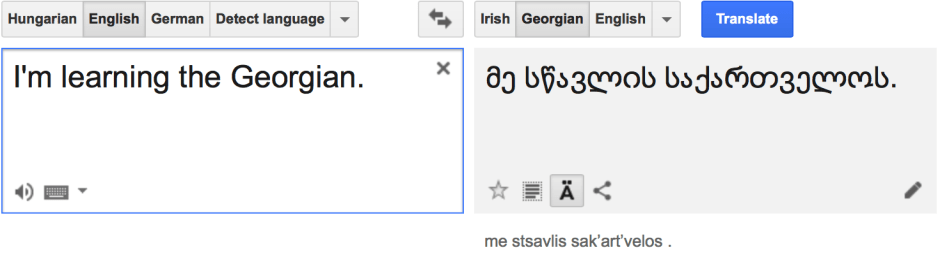

But it appears strangely incapable of translating the plain English sentence I’m learning Georgian.

Change that into an incorrect English form, and you will see a Georgian translation.

So the next time that someone tries to tell you that MT performs really well now, show them these problems with very simple sentences. Professional translators clearly should not be worried about replacement by MT, at least not until the latter has improved greatly.