If you’re unfortunate enough to catch Covid-19 and have a swab test performed, how confident are you about what the result means? If the test comes back positive, is it certain that you have Covid-19? And if it’s negative, are you in the clear?

You’re probably familiar with the fact that Covid-19 swab tests have a high rate of false negatives. So most of us might assume that a positive test result is absolutely certain to demonstrate infection, whereas a negative result could be misleading in around 20-50% of cases. In terms of probability, though, that’s not quite right. Depending on the figures, a positive test result may be only a weak indication that you have Covid-19, and a negative test result may be a very strong indication that you don’t have an active infection. Both depend greatly on how common the condition is in the population at the time.

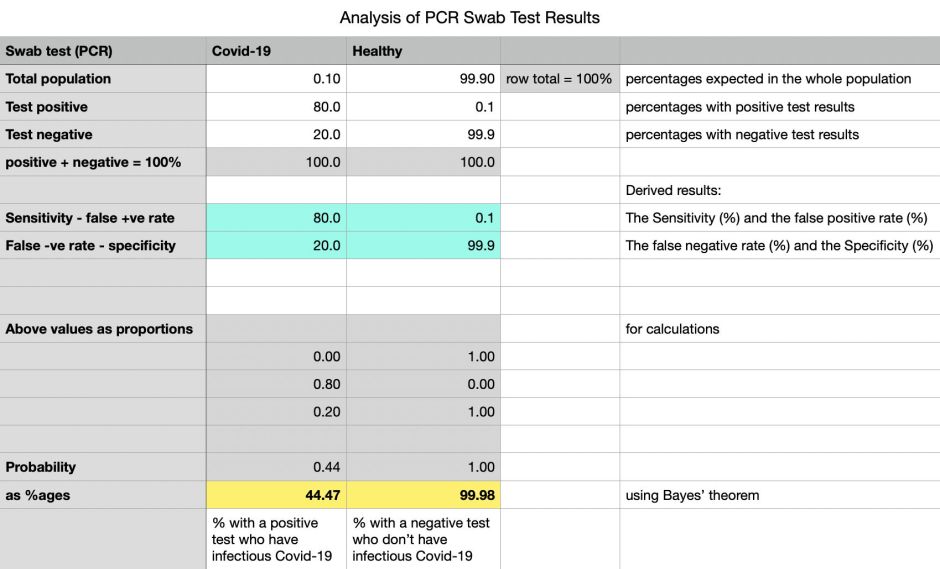

To explain this, I’m going to make some assumptions which probably aren’t far from the case. First, in the local population, only around 0.1% at any time are infectious with Covid-19. That could be much lower, of course, depending on where you are, but should be a good start.

Getting accurate estimates for the performance of the swab or PCR test isn’t easy. Typical ‘good’ results suggest that, of those currently thought to be infectious with Covid-19, around 20% have negative test results (false negatives). That proportion can rise with the use of home testing kits, different test methods, and so on. It’s harder still to discover how many of those who don’t have infectious Covid-19 nevertheless test positive (false positives), but this isn’t likely to be zero. Here, I’ll assume that’s very low, at 0.1%.

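Using those figures, the swab test looks quite good, with a Sensitivity of 80% (the proportion of those with the infection who test positive) and a Specificity of 99.9% (the proportion of those without the infection who test negative).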

What we must take into account when predicting the probability for an individual is the prevalence of the condition for which we’re testing. If the condition is incredibly rare, then even when someone’s test result is positive it can still be unlikely that they have it, particularly if there are enough false positives: those who don’t have the condition but test positive all the same.

We then apply Bayes’ theorem, which takes into account all the conditional probabilities. I’ve set this up in a Numbers spreadsheet, which you can download from here: covidtests1
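As a rough sketch of that calculation, write $D$ for having infectious Covid-19 and $+$ for a positive swab test (symbols introduced here purely for illustration). Using the assumed prevalence of 0.1%, Sensitivity of 80% and Specificity of 99.9%, Bayes’ theorem gives:

$$
\begin{aligned}
P(D \mid +) &= \frac{P(+ \mid D)\,P(D)}{P(+ \mid D)\,P(D) + P(+ \mid \neg D)\,P(\neg D)} \\
&= \frac{0.8 \times 0.001}{0.8 \times 0.001 + 0.001 \times 0.999} \\
&= \frac{0.0008}{0.001799} \approx 0.44
\end{aligned}
$$

Here $P(+ \mid \neg D) = 1 - 0.999 = 0.001$ is the assumed false positive rate, and $P(\neg D) = 1 - 0.001 = 0.999$.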

This shows that only about 44% of those with a positive swab test actually have infectious Covid-19, whilst it’s almost certain that those with a negative swab test don’t. The relative rarity of Covid-19 makes positive swab results weaker indicators of infection, and negative results stronger indicators of health.
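If you’d rather check those figures in code than in a spreadsheet, here’s a minimal Python sketch of the same Bayes’ theorem calculation. The function name `predictive_values` is my own invention for illustration, and the inputs are just the assumptions made above, so treat this as a sketch rather than a copy of the spreadsheet.

```python
def predictive_values(prevalence, sensitivity, specificity):
    """Return (chance a positive is real, chance a negative is real)."""
    # Split the population into the four possible outcomes,
    # each expressed as a fraction of everyone tested.
    true_pos = prevalence * sensitivity
    false_neg = prevalence * (1 - sensitivity)
    false_pos = (1 - prevalence) * (1 - specificity)
    true_neg = (1 - prevalence) * specificity

    # Bayes' theorem: condition on the test result actually seen.
    positive_predictive = true_pos / (true_pos + false_pos)
    negative_predictive = true_neg / (true_neg + false_neg)
    return positive_predictive, negative_predictive


# Assumed figures from the text: 0.1% prevalence, 80% Sensitivity,
# 99.9% Specificity.
ppv, npv = predictive_values(prevalence=0.001, sensitivity=0.80, specificity=0.999)
print(f"Positive swab: {ppv:.1%} chance of infectious Covid-19")
print(f"Negative swab: {npv:.2%} chance of no infectious Covid-19")
```

Changing any one of the three inputs shows how sensitive the conclusions are to the assumptions.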

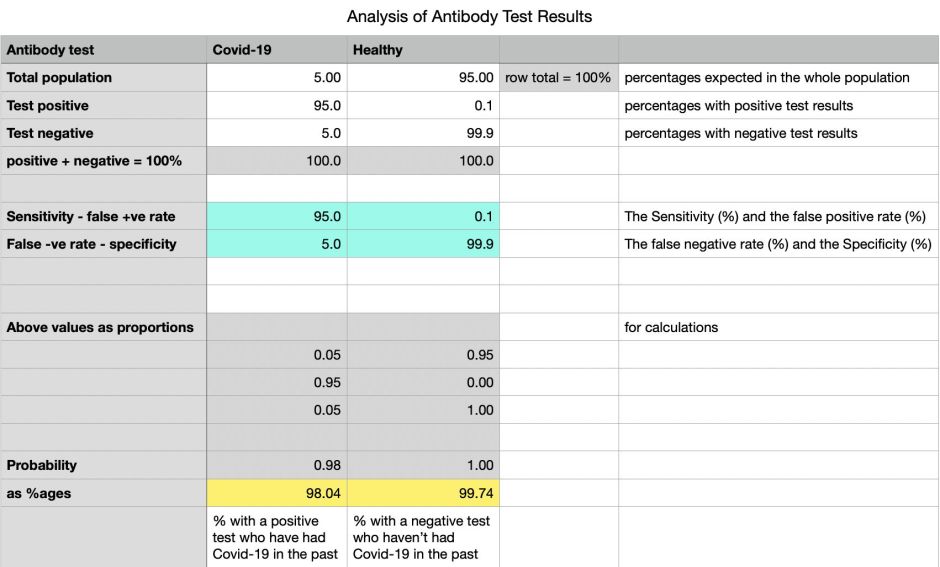

This approach is even more important now that antibody tests are starting to come into use. Early versions of those tests didn’t perform well: even the manufacturers accepted that they had high false negative rates, and some had significant false positives too. One important factor here is that the percentage of the population expected to be positive is much higher than the percentage with positive swabs. The latest figures coming from Europe suggest that as many as 5% of the population have antibodies in countries like Italy and Spain, which have had high case numbers, whereas in countries which have had few cases, such as Denmark and Norway, only 1% or less do.
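To see how much prevalence alone matters, here’s a small Python sketch which holds test performance fixed and varies only the proportion of the population who are positive. The Sensitivity and Specificity are the assumed swab figures from earlier, used purely for illustration: they aren’t the performance of any real antibody test, and the function name is hypothetical.

```python
def positive_predictive_value(prevalence, sensitivity, specificity):
    """Chance that someone with a positive result really is positive."""
    true_pos = prevalence * sensitivity
    false_pos = (1 - prevalence) * (1 - specificity)
    return true_pos / (true_pos + false_pos)


# Illustrative assumption: the same performance as the swab example.
SENSITIVITY = 0.80
SPECIFICITY = 0.999

# Prevalences mentioned in the text: 0.1% (swabs), 1% and 5% (antibodies).
ppv_by_prevalence = {
    prevalence: positive_predictive_value(prevalence, SENSITIVITY, SPECIFICITY)
    for prevalence in (0.001, 0.01, 0.05)
}

for prevalence, ppv in ppv_by_prevalence.items():
    print(f"Prevalence {prevalence:.1%}: a positive result is right {ppv:.0%} of the time")
```

With identical test performance, a positive result becomes far more trustworthy as the condition becomes more common.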

Using slightly pessimistic figures for the antibody tests which are now being adopted in Europe and the US, a positive test result has a 98% chance of showing that someone has had Covid-19 in the past, and a negative result is almost certain to confirm that they haven’t. Try performance figures from older tests, though, and you’ll see the effect they have, making antibody tests as unreliable as swab tests for current infection.

If you’re tempted to buy your own antibody test, you might like to check how it performs at prediction using the manufacturer’s figures for false positives and negatives, before parting with any money. It could be cheaper just to toss a coin.
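One way to run that check is to count heads in an imaginary crowd. The Python sketch below does so for a made-up kit: the 30% false negative and 5% false positive rates are hypothetical numbers chosen only for illustration, not any manufacturer’s figures, and the 1% prevalence is the lower end of the antibody estimates above. The function names are my own.

```python
def expected_counts(population, prevalence, false_negative_rate, false_positive_rate):
    """Expected number of people in each of the four test outcomes."""
    with_condition = population * prevalence
    without_condition = population - with_condition
    return {
        "true_pos": with_condition * (1 - false_negative_rate),
        "false_neg": with_condition * false_negative_rate,
        "false_pos": without_condition * false_positive_rate,
        "true_neg": without_condition * (1 - false_positive_rate),
    }


def chance_positive_is_right(counts):
    """Fraction of all positive results which are true positives."""
    return counts["true_pos"] / (counts["true_pos"] + counts["false_pos"])


# Hypothetical kit: 30% false negatives, 5% false positives (made-up figures).
kit = expected_counts(100_000, prevalence=0.01,
                      false_negative_rate=0.30, false_positive_rate=0.05)
# A coin toss is 'positive' half the time whether or not you have antibodies.
coin = expected_counts(100_000, prevalence=0.01,
                       false_negative_rate=0.50, false_positive_rate=0.50)

kit_ppv = chance_positive_is_right(kit)
coin_ppv = chance_positive_is_right(coin)
print(f"Kit:  {kit['true_pos']:.0f} true and {kit['false_pos']:.0f} false positives, "
      f"so a positive is right {kit_ppv:.1%} of the time")
print(f"Coin: a positive is right {coin_ppv:.1%} of the time")
```

A coin toss leaves you with exactly the prevalence you started from, so the useful question is how far a kit’s figures lift a positive result above that baseline.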

Wikipedia’s entry on Sensitivity and Specificity is an excellent explanation of different ways of evaluating test results like this, and its account of Bayes’ theorem is comprehensive. Be aware, though, that not all statisticians yet accept this approach to probability, and that my example spreadsheet may yet be riddled with errors, and my assumptions false.