Last Christmas, I looked in detail at how the code signatures of apps are checked, or rather how they are so seldom checked. Given that almost all apps are now signed, it might seem logical that each time you launch an app, it should at least have its integrity checked. However, unless an app decides to do it itself, that doesn’t happen, at least not in macOS Mojave and earlier. Not even when the app runs in a sandbox, is hardened or notarized.

There’s nothing inherently wrong with that: those are the rules, and many apps rely on them for accomplishing tasks such as updating themselves in place using the Sparkle system. But what if you want to check that your app hasn’t been quietly subverted, or even just corrupted? Could your app run its own signature check each time that it’s started?

Some apps, particularly security products such as anti-malware scanners, are known targets for malware, and their developers normally incorporate extensive self-integrity checks so that each time they run, they know that nothing has nobbled them. They may also need to verify their integrity repeatedly while running. I’m not suggesting for a moment that you take this to such extremes. But the cost in code and time to perform a basic signature check is very small.

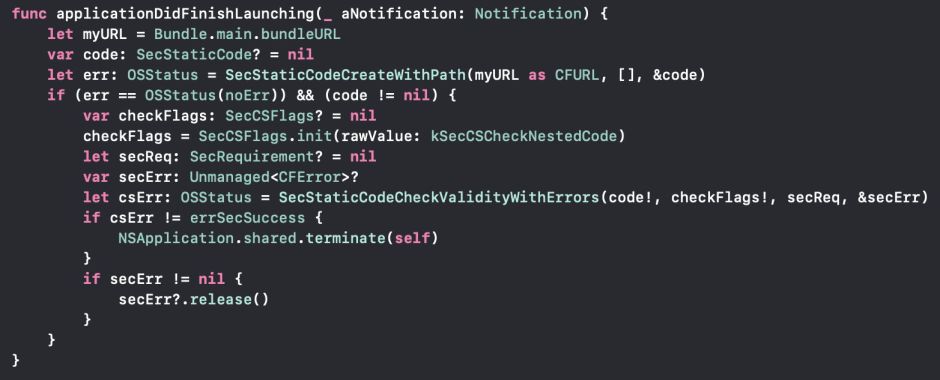

All I have done is add some code to the standard applicationDidFinishLaunching() function built into every app’s App Delegate:

func applicationDidFinishLaunching(_ aNotification: Notification) {

First get your app’s main bundle URL:

let myURL = Bundle.main.bundleURL

Then use that to create a Static Code object for assessment:

var code: SecStaticCode? = nil

let err: OSStatus = SecStaticCodeCreateWithPath(myURL as CFURL, [], &code)

if (err == OSStatus(noErr)) && (code != nil) {

Performing the signature check requires setting up the parameters to be passed. The first is the flag to set the type and depth of testing to be performed. Here I’m opting for a basic check which includes all nested code, and which shouldn’t check the certificate in full:

let checkFlags = SecCSFlags(rawValue: kSecCSCheckNestedCode)

let secReq: SecRequirement? = nil

var secErr: Unmanaged<CFError>?

Then you can perform the check itself, ensuring that it returns any errors:

let csErr: OSStatus = SecStaticCodeCheckValidityWithErrors(code!, checkFlags, secReq, &secErr)

You choose what to do when an error is returned. The aggressive approach would simply crash the app immediately with the security error; a gentler approach would be to show an alert, then quit when that’s dismissed. I chose a middle way which terminates the app gracefully, but doesn’t hang around explaining why:

if csErr != errSecSuccess {

NSApplication.shared.terminate(self)

}

Finally, remember to release the Unmanaged variable:

if secErr != nil {

secErr?.release()

} } }
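Gathered into one place, the steps above might look something like the following. This is only a sketch: the function name `bundlePassesSignatureCheck` is my own invention for illustration, and it returns a simple Bool rather than acting on the error itself, so that the caller decides what to do.

```swift
import Foundation
import Security

// Hypothetical helper: true only if the bundle at `url` passes a basic
// validity check that also covers nested code.
func bundlePassesSignatureCheck(at url: URL) -> Bool {
    // Create the Static Code object for the bundle on disk.
    var code: SecStaticCode?
    guard SecStaticCodeCreateWithPath(url as CFURL, [], &code) == errSecSuccess,
          let staticCode = code else {
        return false
    }
    // Basic check including nested code, with no extra requirement.
    let flags = SecCSFlags(rawValue: kSecCSCheckNestedCode)
    var secErr: Unmanaged<CFError>?
    let status = SecStaticCodeCheckValidityWithErrors(staticCode, flags, nil, &secErr)
    // Any error object is handed back retained, so release it here.
    secErr?.release()
    return status == errSecSuccess
}
```

From applicationDidFinishLaunching(), the call could then be as short as `if !bundlePassesSignatureCheck(at: Bundle.main.bundleURL) { NSApplication.shared.terminate(self) }`.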

The most difficult part of this is testing your app. When freshly built, it should fly through your check and run normally. But unless you test the error condition, you’ll never know whether your code is doing anything at all.

What I do is make a copy of the built app and change a single character in its Info.plist, say in the date shown in its copyright. If the check picks that up, it demonstrates that it’s at least checking the checksum on the app bundle. But if you simply make a copy of the app in your Documents folder, change that character, then run the app, it will crash with a macOS security error: the first time an app is run from a new path, macOS performs a full security check on it, and that check catches the change before your own code gets a chance to.

What you need to do is move the app to a folder from which it’s usually run, like /Applications. Run it once unmodified to check that it launches fine from there. Then modify that copy by changing a single character or byte, and run it again. On that occasion, macOS shouldn’t react by crashing the app, but your new mechanism should kick in.
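If you’d rather script that modification than make it by hand, a small helper along these lines could do it. It’s a sketch under some assumptions: the function names are hypothetical, it appends a full stop to the copyright string rather than changing a single existing character, and because it rewrites the whole Info.plist it may alter more bytes than a hand edit would. You’ll also need write access to the copy of the app you point it at.

```swift
import Foundation

// Hypothetical test helper: returns Info.plist data whose copyright string
// has one character appended, keeping the original plist format.
func tamperedInfoPlist(from data: Data) throws -> Data {
    var format = PropertyListSerialization.PropertyListFormat.xml
    let object = try PropertyListSerialization.propertyList(from: data, options: [], format: &format)
    guard var plist = object as? [String: Any] else {
        throw CocoaError(.propertyListReadCorrupt)
    }
    let key = "NSHumanReadableCopyright"
    plist[key] = ((plist[key] as? String) ?? "") + "."
    return try PropertyListSerialization.data(fromPropertyList: plist, format: format, options: 0)
}

// Rewrites Contents/Info.plist inside the app copy at `appURL`.
// Only ever run this against a test copy of your app.
func tamperWithApp(at appURL: URL) throws {
    let plistURL = appURL.appendingPathComponent("Contents/Info.plist")
    let original = try Data(contentsOf: plistURL)
    try tamperedInfoPlist(from: original).write(to: plistURL)
}
```

Run the app once unmodified from its usual folder, call `tamperWithApp(at:)` on that copy, then launch it again to see whether your check fires.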

Is any of this worth the effort, though? Isn’t a little extra security actually worse than the status quo?

Against a determined attacker, checking integrity without doing a full signature check may not be effective, as malware can easily replace your signature and its checksums with new ones. But the most likely reason for an app differing from its original checksum – unless it’s been updated by Sparkle or similar – is that it has simply become corrupted in storage. And who knows whether an attacker will go to the lengths of writing a new and correctly-updated signature?
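One way to raise the bar against a replaced signature, which I haven’t used in the code above, is to pass a requirement instead of nil, so that the check also insists on who signed the app. The following is a minimal sketch of that idea, not a tested recipe: the function name is hypothetical, and the Team ID in the usage example is a placeholder you would replace with your own.

```swift
import Foundation
import Security

// Hypothetical helper: true only if the bundle at `url` is validly signed
// AND satisfies the code requirement given as text.
func bundleSatisfies(requirementText: String, at url: URL) -> Bool {
    var code: SecStaticCode?
    guard SecStaticCodeCreateWithPath(url as CFURL, [], &code) == errSecSuccess,
          let staticCode = code else {
        return false
    }
    // Compile the requirement text into a SecRequirement.
    var requirement: SecRequirement?
    guard SecRequirementCreateWithString(requirementText as CFString, [], &requirement) == errSecSuccess,
          let compiled = requirement else {
        return false
    }
    let flags = SecCSFlags(rawValue: kSecCSCheckNestedCode)
    return SecStaticCodeCheckValidity(staticCode, flags, compiled) == errSecSuccess
}
```

A call might then look like `bundleSatisfies(requirementText: "anchor apple generic and certificate leaf[subject.OU] = \"ABCDE12345\"", at: Bundle.main.bundleURL)`, where ABCDE12345 stands in for a real Team ID. A signature replaced by someone else’s, or an ad hoc one, shouldn’t satisfy that, although anyone able to rewrite your app could in principle also remove the check.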

The cost in terms of coding effort or launch time is so small that any benefit seems worth it, provided of course that you warn your users, and not just in the app’s own Help.

I look forward to the debate. In the meantime, at least you now know how to do it.

Postscript

Thanks to @DubiousMind for pointing out that in some circumstances even hash checks on signatures can take some time. If you happen to have 2 GB of data in your app bundle, forcing even the most superficial of signature checks could delay app launch by a minute or more. When tested here, for example, running a full and deep check on Xcode takes just over 30 seconds. You probably wouldn’t want to run such a check if that were the case. But in the great majority of instances, checking hashes is almost instant. It’s not hard to assess this during development.
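To assess that during development, you could wrap the check in a simple timer, as in this sketch. The function name `timeSignatureCheck` is hypothetical, and it uses wall-clock time from Date, which is crude but good enough to tell an instant check from one taking many seconds.

```swift
import Foundation
import Security

// Hypothetical helper: runs the basic nested-code check on the bundle at
// `url` and reports whether it passed and how long it took in seconds.
func timeSignatureCheck(of url: URL) -> (passed: Bool, seconds: TimeInterval) {
    let start = Date()
    var passed = false
    var code: SecStaticCode?
    if SecStaticCodeCreateWithPath(url as CFURL, [], &code) == errSecSuccess,
       let staticCode = code {
        let flags = SecCSFlags(rawValue: kSecCSCheckNestedCode)
        passed = SecStaticCodeCheckValidity(staticCode, flags, nil) == errSecSuccess
    }
    return (passed, Date().timeIntervalSince(start))
}
```

Calling `timeSignatureCheck(of: Bundle.main.bundleURL)` in a debug build and printing the result should show quickly whether your bundle is large enough for the delay to matter.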