Apple detailed and demonstrated many exciting technologies at WWDC, which are coming to macOS 10.14 Mojave in the autumn/fall. Among those which I found most interesting are its new features to support the processing of natural language.

The mass-market goal behind support for natural language processing (NLP) is not macOS, but iOS, where it is intended to let users give apps quite complex oral instructions. These are then converted into text by Siri, and acted upon by iOS and its apps. Ask any linguist, and they’ll tell you that parsing and understanding natural language is one of the most difficult tasks that you can ask a powerful computer to perform. We have all had experiences with Siri which demonstrate the point.

Mojave and iOS 12 therefore have sophisticated features which enable developers to parse text into its parts of speech, and recognise key words like different types of proper noun (people, organisations, locations) so that apps can work out how to respond to instructions like “tell me the quickest route to walk to Central station”.
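To give a rough idea of how an app might pick out proper nouns from that instruction, here is a minimal sketch using `NLTagger` from the NaturalLanguage framework with its `.nameType` scheme. The sample sentence is the one quoted above; the `entities` array and the way results are printed are my own choices for illustration, and which words get tagged depends on the language models shipped with the system.

```swift
import NaturalLanguage

// The instruction quoted in the text above.
let text = "Tell me the quickest route to walk to Central station"

// Ask the tagger for named-entity tags only.
let tagger = NLTagger(tagSchemes: [.nameType])
tagger.string = text

// Skip whitespace and punctuation, and join multi-word names into one token.
let options: NLTagger.Options = [.omitWhitespace, .omitPunctuation, .joinNames]

// The three kinds of proper noun mentioned above.
let nameTags: [NLTag] = [.personalName, .placeName, .organizationName]

// Collected (word, tag) pairs; this container is an illustrative choice.
var entities: [(String, String)] = []

tagger.enumerateTags(in: text.startIndex..<text.endIndex,
                     unit: .word,
                     scheme: .nameType,
                     options: options) { tag, range in
    if let tag = tag, nameTags.contains(tag) {
        entities.append((String(text[range]), tag.rawValue))
    }
    return true  // keep enumerating to the end of the text
}

for (word, kind) in entities {
    print("\(word): \(kind)")
}
```

The `.joinNames` option is what should let a two-word place name be reported as a single token rather than as two separate words.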

Features like these are also of crucial importance to related tasks, such as machine translation, learning another language, even improving your own writing.

What is also particularly exciting is that Mojave will support not just a couple of the major languages, such as English, but eventually should be able to parse many more. Apple hasn’t revealed which will be supported in the initial release of Mojave, and which will be added during its lifetime.

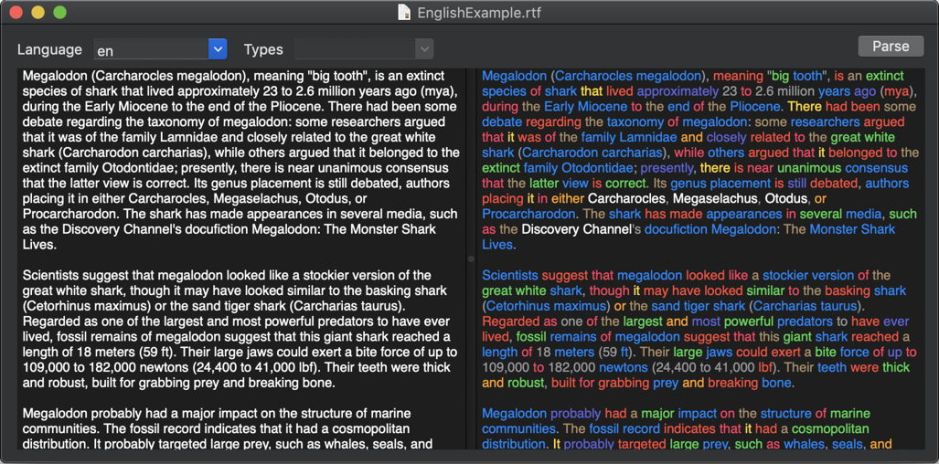

The best way to understand what NLP can do is to use it, of course. I haven’t been able to find any demonstration apps which were shown at WWDC, so, using the example code given there, I have put together a little app which runs on the current beta-release of Mojave: Nalaprop (a pun on the name of Mrs Malaprop, and natural language processing).

Nalaprop can read plain text files, or you can paste text in for processing. Click on its Parse button, and it tells you which language macOS reckons it is, and displays a parsed version of your text in the panel to the right. This uses colour-coding to show which part of speech each word is: for example, verbs are displayed in red, and nouns in blue.
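The two steps described here, identifying the language and then tagging each word with its part of speech, can be sketched roughly as follows. This is not Nalaprop's actual source, just a minimal example under my own assumptions: `colourName(for:)` is a hypothetical helper which only covers the two colours mentioned above, and the sample sentence is arbitrary.

```swift
import NaturalLanguage

// Hypothetical colour key: verbs in red and nouns in blue, as described
// above. Every other part of speech falls back to "default" in this sketch.
func colourName(for tag: NLTag) -> String {
    if tag == .verb { return "red" }
    if tag == .noun { return "blue" }
    return "default"
}

// Arbitrary sample text, chosen for illustration.
let text = "The quick brown fox jumps over the lazy dog."

// Step 1: ask the system which language it reckons this is.
let language = NLLanguageRecognizer.dominantLanguage(for: text)
print("Language: \(language?.rawValue ?? "unknown")")

// Step 2: tag each word with its lexical class (part of speech).
let tagger = NLTagger(tagSchemes: [.lexicalClass])
tagger.string = text

tagger.enumerateTags(in: text.startIndex..<text.endIndex,
                     unit: .word,
                     scheme: .lexicalClass,
                     options: [.omitWhitespace, .omitPunctuation]) { tag, range in
    if let tag = tag {
        print("\(text[range]): \(tag.rawValue) (\(colourName(for: tag)))")
    }
    return true  // keep enumerating to the end of the text
}
```

In a real app the colour would be applied as a text attribute over each word's range rather than printed, but the enumeration is the same.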

Nalaprop looks at its best in Dark Mode, although Light Mode is still perfectly usable if you prefer.

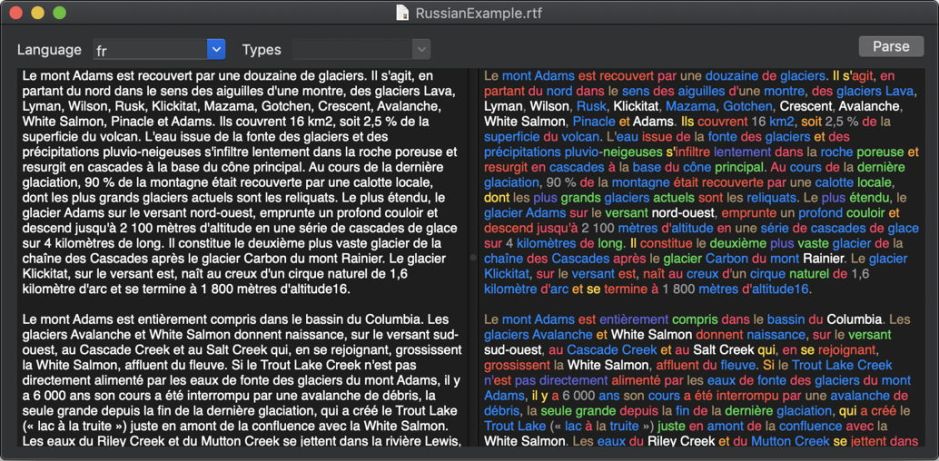

Here it is with a chunk of French.

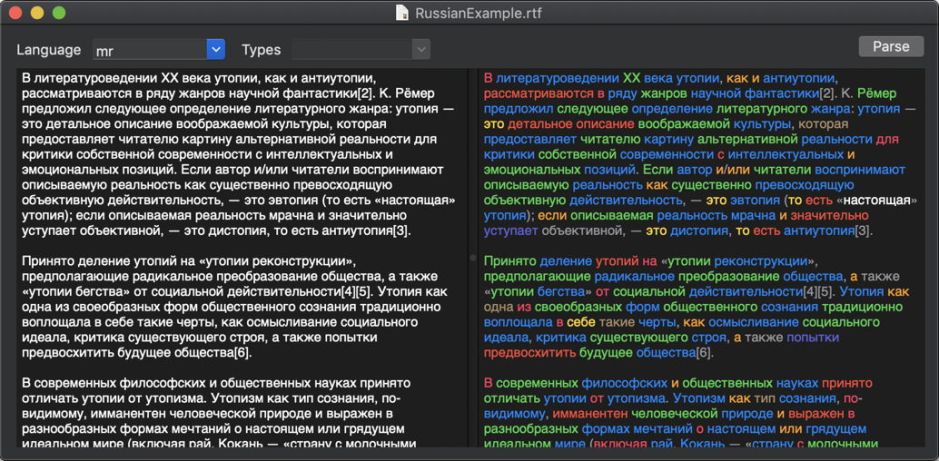

And here it has parsed the Russian text at the left.

You can save the parsed text in Rich Text format (RTF), to preserve its colour-coding.
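For anyone wondering how colour-coded text can be written out as RTF, one way is to go through `NSAttributedString` in AppKit. The sketch below is an assumption about how this could be done, not a description of Nalaprop's code: the two-word string, its hand-picked colour ranges, the `rtfData(from:)` helper, and the output file name are all invented for illustration.

```swift
import AppKit

// Build a tiny colour-coded string by hand. In a real app the ranges and
// colours would come from the tagger's output instead.
let parsed = NSMutableAttributedString(string: "Dogs bark")
parsed.addAttribute(.foregroundColor, value: NSColor.blue,
                    range: NSRange(location: 0, length: 4))  // noun
parsed.addAttribute(.foregroundColor, value: NSColor.red,
                    range: NSRange(location: 5, length: 4))  // verb

// Hypothetical helper: convert the whole attributed string to RTF data.
func rtfData(from attributed: NSAttributedString) throws -> Data {
    let whole = NSRange(location: 0, length: attributed.length)
    return try attributed.data(
        from: whole,
        documentAttributes: [.documentType: NSAttributedString.DocumentType.rtf])
}

do {
    let data = try rtfData(from: parsed)
    // Illustrative destination in the temporary directory.
    let url = FileManager.default.temporaryDirectory
        .appendingPathComponent("parsed.rtf")
    try data.write(to: url)
    print("Saved \(data.count) bytes to \(url.path)")
} catch {
    print("Could not save RTF: \(error)")
}
```

Because the colours are stored as attributes of the text, they survive the round trip when the file is opened again in an RTF-aware editor.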

This is only an alpha release, but it comes with a key to the colours used, and with its NLP code, written in Swift 4.2.

Nalaprop only runs on Mojave, but I thought that it might be a fun demonstration which beta-testers might enjoy. If you’re looking to use Mojave’s NLP features, it also gives you a good idea of their language coverage during this pre-release period. Nalaprop 1.0a1 is available from here: nalaprop10a1 and in Downloads above.