Even an iPod is far quicker at processing data than early Macs. How do modern Macs and iOS devices achieve this, and how much is it worth getting even more cores?

Walk around an Apple store and the variety and complexity of processors inside the models on display are alarming.

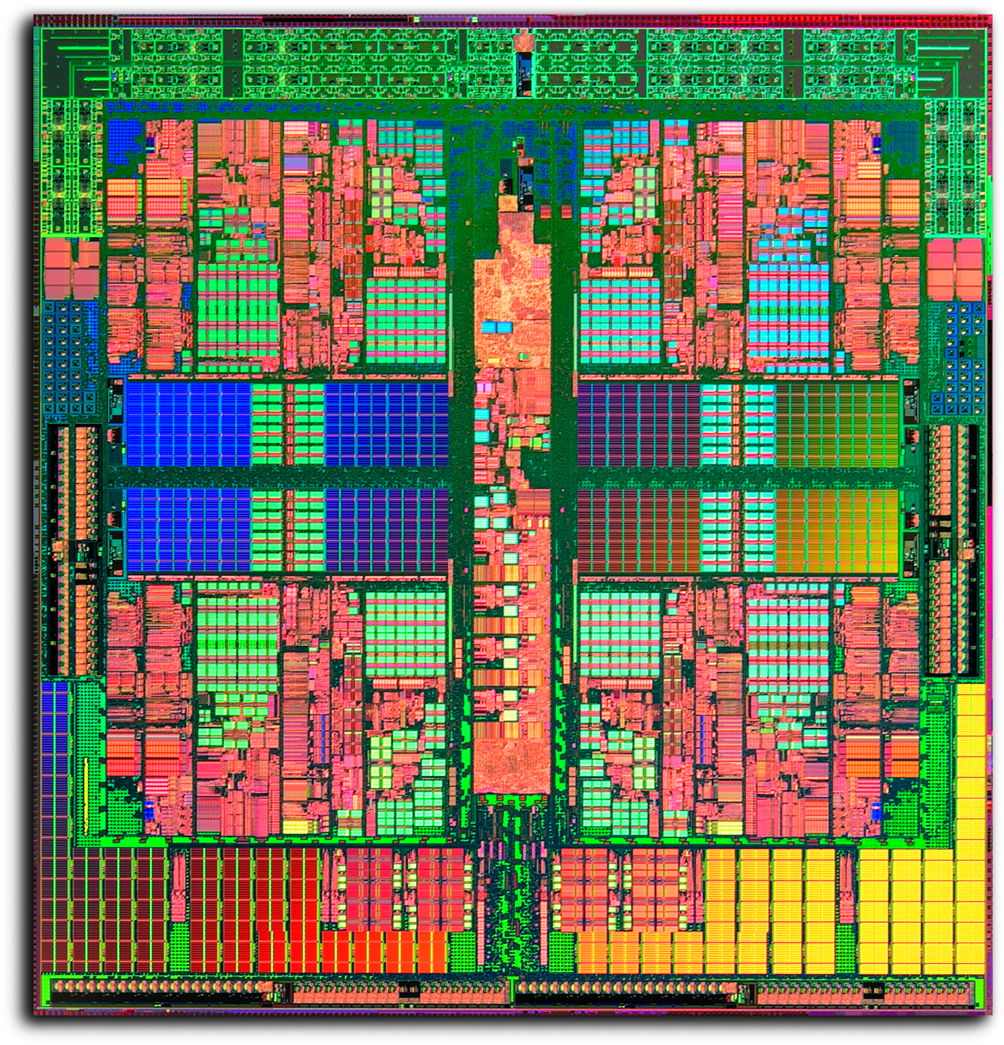

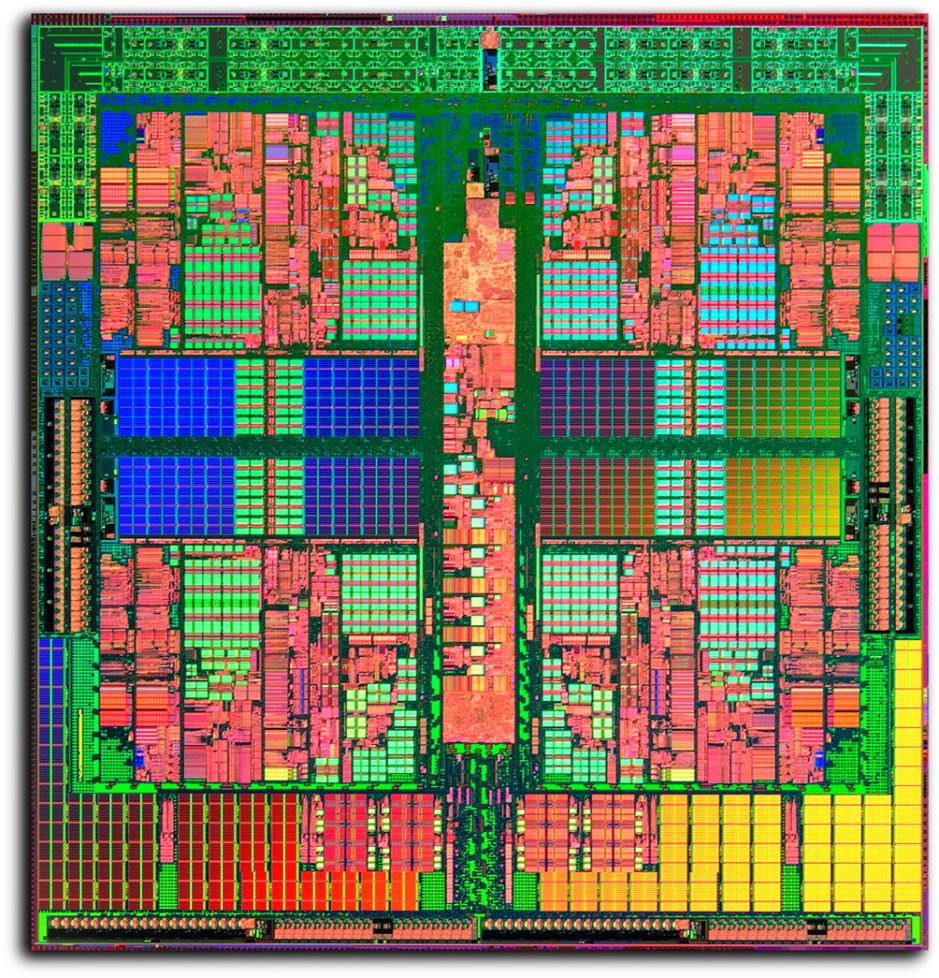

A high-end Mac Pro might have an Intel Xeon E5 processor running at 2.7 GHz and providing 12 cores; the iMac with a gorgeous 27” display has a single Intel Core i5 or i7 with four cores running at up to 4 GHz; the MacBook Air you have fallen in love with has an Intel Core i5 or i7 with just two cores running at up to 2.2 GHz; the iPad has a special Apple A8X system-on-a-chip (SoC) with a three-core Typhoon ARMv8-A processor running at a peak of 1.5 GHz.

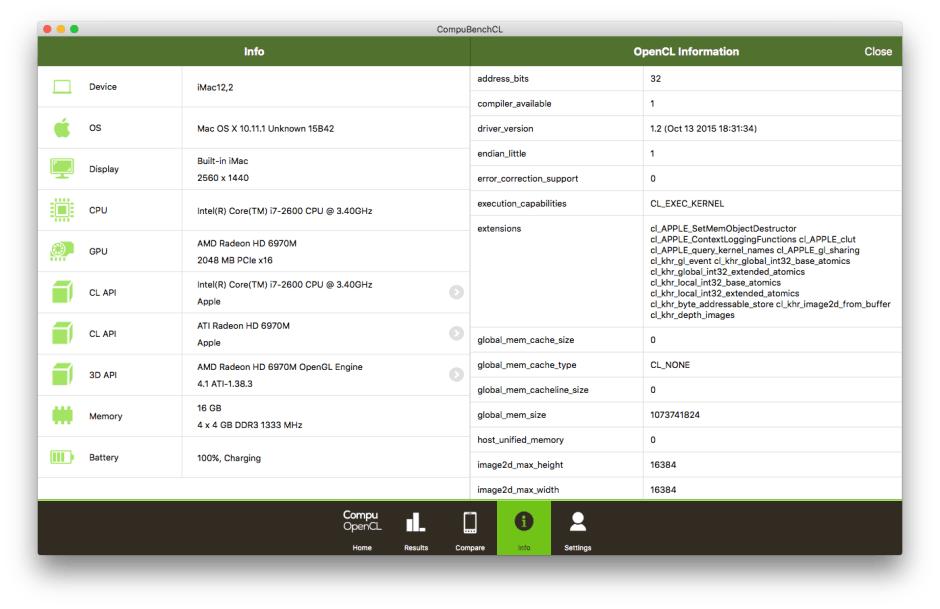

But it gets still more geeky: each of the two AMD FirePro D700 graphics cards in the Mac Pro has 2048 of its own GPU stream processors delivering 3.5 teraflops, supporting accelerated OpenGL and OpenCL. OpenCL is also supported by the iMac’s built-in AMD Radeon R9 M395X graphics card, but the MacBook Air and iPad have integrated GPUs, Intel HD Graphics 6000 and PowerVR GXA6850 respectively.

Increasing speed

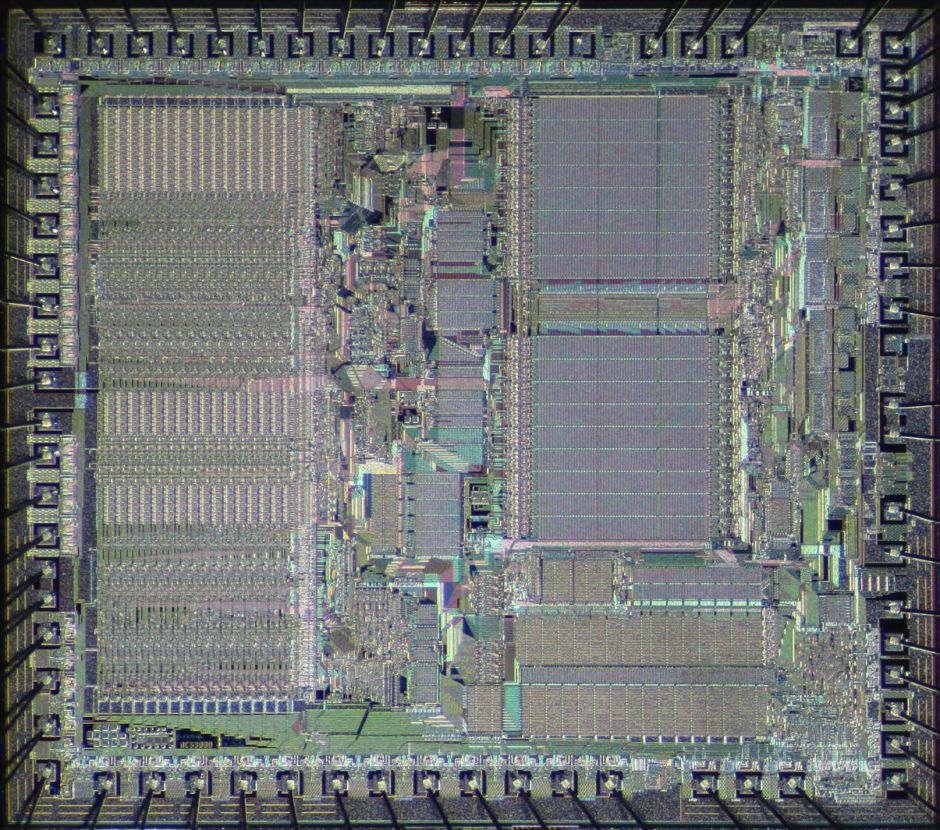

There was a time when a Mac’s heart was simply one processor, originally a Motorola 68000-series component, later a mightier PowerPC from the IBM-Motorola-Apple collaboration. But the thirst for speed added co-processors, specially designed to take load off the central processing unit (CPU). The first co-processors were for high-speed floating point calculations, but the launch of the Quadra 840AV in 1993 brought a digital signal-processing (DSP) unit intended to accelerate audio and video work – the ancestor of today’s far more sophisticated GPUs.

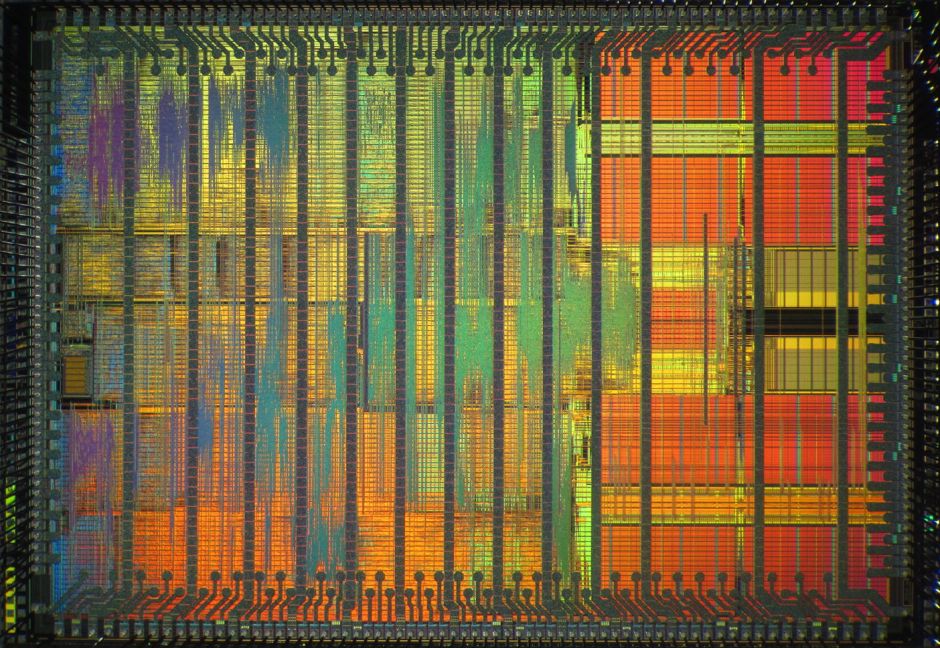

CPUs, GPUs, and co-processors all work by performing a sequence of operations, such as taking a number from one store, adding it to another, and putting the result in another store. Each processing step takes a set number of ‘ticks’ of the processor’s clock, so one simple way of making a processor work more quickly is to increase the frequency of those ticks, by increasing the clock speed.

However, there are fairly strict electronic limits to the maximum clock speed at which each processor can operate. You could not just take an old Motorola 68040 running at a leisurely 40 MHz (40 million ticks every second) and crank it up to today’s gigahertz clock speeds – the processor would fail long before it hit 2-3 billion ticks per second.
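
As a rough illustration of the arithmetic, the Python sketch below converts a clock speed into the duration of one tick, and estimates how long a run of instructions would take. The workload of one million instructions and the figure of four ticks per instruction are assumptions chosen for illustration, not measurements of any real processor, and the function names are hypothetical.

```python
def tick_ns(clock_hz):
    """Duration of one clock tick in nanoseconds."""
    return 1e9 / clock_hz


def run_time_s(instructions, ticks_per_instruction, clock_hz):
    """Seconds needed if every instruction takes the same number of ticks."""
    return instructions * ticks_per_instruction / clock_hz


if __name__ == "__main__":
    # Assumed workload: one million instructions at four ticks each.
    for label, hz in (("40 MHz", 40e6), ("2.7 GHz", 2.7e9)):
        seconds = run_time_s(1_000_000, 4, hz)
        print(f"{label}: tick = {tick_ns(hz):.2f} ns, run = {seconds * 1e3:.3f} ms")
```

This model deliberately ignores everything except clock speed, so it shows only the first of the strategies that designers have used.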

Processor designers have used many strategies to increase the speed of their products, in addition to increasing sophistication in design and manufacture to progressively raise clock speed.

These have included reducing the range of instructions that the processor can perform (reduced instruction set computing, RISC), or the exact opposite (complex instruction set computing, CISC); arranging different parts of a processor into a ‘pipeline’ so that it can work on more than one operation at any time; and using multiple processors in concert. Modern Macs use all of these in some way or another to wring maximum performance from their chips.

Parallel processing

Using more than one processor at a time is one of the oldest solutions, and was first exploited on a commercial scale by a British company, INMOS, in Transputer parallel processors in 1987. These anticipated current processors in many ways, as they had a RISC design, pipelines, SoC, and high-speed inter-processor links, but were ultimately unsuccessful.

All current models of Mac, iPad, and iPhone feature two or more processor cores enabling them to run more than one software process simultaneously. Only the Apple Watch still has a single-core processor.

This is quite different from the pre-emptive multitasking that has been a feature of OS X since its first release. In multitasking, a single processor switches rapidly between different active processes to give the impression that they are running simultaneously, when in fact each is only getting a share of the processor’s capacity. There is significant overhead in switching between tasks, and the more processes that are running, the slower each gets.
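
The effect of sharing one core can be sketched with a toy simulation in Python. It is not how the OS X scheduler works: it assumes a simple round-robin queue, a fixed time slice, and a fixed cost for every switch between processes, and all of the names and numbers are invented for illustration.

```python
from collections import deque


def round_robin(work_ms, slice_ms, switch_ms):
    """Simulate processes sharing one core; return each one's finish time in ms."""
    queue = deque(enumerate(work_ms))
    finish = [0] * len(work_ms)
    clock = 0
    last_pid = None
    while queue:
        pid, remaining = queue.popleft()
        if last_pid is not None and pid != last_pid:
            clock += switch_ms  # assumed fixed cost of switching process
        run = min(slice_ms, remaining)
        clock += run
        remaining -= run
        if remaining > 0:
            queue.append((pid, remaining))  # back of the queue for another turn
        else:
            finish[pid] = clock
        last_pid = pid
    return finish


if __name__ == "__main__":
    for count in (1, 2, 4):
        times = round_robin([100] * count, slice_ms=10, switch_ms=1)
        print(f"{count} process(es), 100 ms of work each: finish at {times} ms")
```

With these assumed figures, a process that would finish in 100 ms on its own takes longer as more processes join the queue, both because it waits its turn and because of the time lost to switching.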

The delight of having multiple processor cores is that, when they are evenly loaded, the more cores you have, the faster active processes will run. The perfect situation is when you have one major active process running on each core, and OS X contains low-level software that does a very good job of balancing the load on processor cores to try to achieve optimum performance.
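
A minimal sketch of spreading work over several cores, using Python's standard `concurrent.futures.ProcessPoolExecutor`, is given here. The prime-counting workload, the limit of 200,000, and the function names are all assumptions chosen only to give each process something processor-intensive to do; the operating system, not this code, decides which core runs each process.

```python
import os
import time
from concurrent.futures import ProcessPoolExecutor


def count_primes(bounds):
    """Count primes in the half-open range [start, stop) by trial division."""
    start, stop = bounds
    count = 0
    for n in range(max(start, 2), stop):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def split_range(stop, parts):
    """Split [0, stop) into at most `parts` contiguous chunks of similar size."""
    step = max(1, -(-stop // parts))  # ceiling division, never zero
    return [(lo, min(lo + step, stop)) for lo in range(0, stop, step)]


def count_primes_parallel(stop, workers):
    """Give each worker process one chunk and add up their results."""
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(count_primes, split_range(stop, workers)))


if __name__ == "__main__":
    limit = 200_000
    cores = os.cpu_count() or 1
    runs = (("one process", lambda: count_primes((0, limit))),
            (f"{cores} processes", lambda: count_primes_parallel(limit, cores)))
    for label, run in runs:
        started = time.perf_counter()
        total = run()
        print(f"{label}: {total} primes in {time.perf_counter() - started:.2f} s")
```

The chunks here cover equal ranges of numbers but not equal amounts of work, as larger numbers take longer to test, so the cores will not be perfectly evenly loaded and the gain will normally fall short of the number of cores.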

The snag is that poorly-designed applications consisting of a single processor-intensive process cannot be shared between cores, and force the load on one core towards 100% while other cores remain lightly loaded.
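
One widely used way to estimate this limit is Amdahl's law, which the original article does not name and which is brought in here only as an illustration. It assumes that a fixed fraction of a task can be spread perfectly across cores while the rest must run on a single core. The fractions and core counts below are assumptions, not measurements of any application.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Estimated speed-up over one core, by Amdahl's law."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Assumed fractions of the work that can be shared between cores.
    for fraction in (0.0, 0.5, 0.9):
        row = ", ".join(f"{n} cores: {amdahl_speedup(fraction, n):.2f}x"
                        for n in (2, 4, 12))
        print(f"{fraction:.0%} parallel -> {row}")
```

Under this model, an application that cannot be shared at all gains nothing from extra cores, and even one in which 90% of the work can be shared runs less than six times faster on 12 cores.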

Enter the GPU

OS X and many applications, in particular games, are rich in graphics, and make heavy demands when displaying moving images. Early primitive graphics custom chips have been replaced by specialised graphics processing units (GPUs) that can either be installed as expansion cards in modular designs, such as old Mac Pros, or be built into other models.

Whilst a CPU has to be able to perform a wide variety of functions, from shifting data around to floating point arithmetic, GPUs have specialised instruction sets determined by the operations needed to accelerate OpenGL.

Most recently, access to the GPU has been opened up to other processes which could benefit from its power. OS X supports the Open Computing Language (OpenCL, pioneered by Apple) so that enabled applications can run their processes in parallel on the available CPU and GPU cores. For your Mac to benefit from OpenCL acceleration, it needs to be running OS X 10.6 or later on an Intel Core i5, i7, or Xeon processor, and supported GPUs now include those in all Mac models. Each major release of OS X brings further enhancements to OpenCL and related features.

Limitations

Having lots of fast cores and applications that can be spread onto them as separate processes does not guarantee that everything will run quickly, though. File sharing, databases, and the other activities of servers are notoriously storage bound, so there is little point in throwing lots of processor power at them. The exception to this is with server virtualisation: as it becomes possible to run server operating systems in software virtual machines, there will be server setups that need multiple cores.

Working out whether you will get any benefit from paying the hefty price tag for, say, a 12-core Mac Pro against a four-core iMac, or even a two-core mini, is very difficult.

You need insight into where the bottlenecks are in your current system, and where they will be if you invest in processing power rather than, for example, a solid-state disk (SSD). It is also essential that you study the performance of your major working applications, so that you can see whether they are monolithic processes bound to a single core, or consist of multiple processes that will distribute well.
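
One rough way to see whether a task is limited by the processor or is mostly waiting for something else is to compare the processor time it uses with the elapsed time on the clock. The Python sketch below does this with the standard `time.process_time()` and `time.perf_counter()` functions. The two sample tasks are stand-ins: `time.sleep()` is used here only as an assumed substitute for waiting on a slow disk, and the function name `cpu_share` is hypothetical.

```python
import time


def cpu_share(task):
    """Run task() and return (cpu_seconds, wall_seconds, cpu / wall)."""
    wall_start = time.perf_counter()
    cpu_start = time.process_time()
    task()
    cpu = time.process_time() - cpu_start
    wall = time.perf_counter() - wall_start
    return cpu, wall, (cpu / wall if wall > 0 else 0.0)


if __name__ == "__main__":
    samples = (
        ("arithmetic", lambda: sum(i * i for i in range(2_000_000))),
        ("waiting", lambda: time.sleep(0.5)),  # stand-in for slow storage
    )
    for label, task in samples:
        cpu, wall, share = cpu_share(task)
        print(f"{label}: {cpu:.2f} s of processor time in {wall:.2f} s ({share:.0%})")
```

A share close to 100% suggests that a faster processor would help, whilst a low share suggests that the task spends most of its time waiting. This only measures the Python process itself, so it says nothing about work done by other processes on its behalf.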

The proof remains in the processing.

Tools: Benchmarking Performance

Assessing the processing capability of your Mac, or any other, is a matter of choosing the right benchmarks.

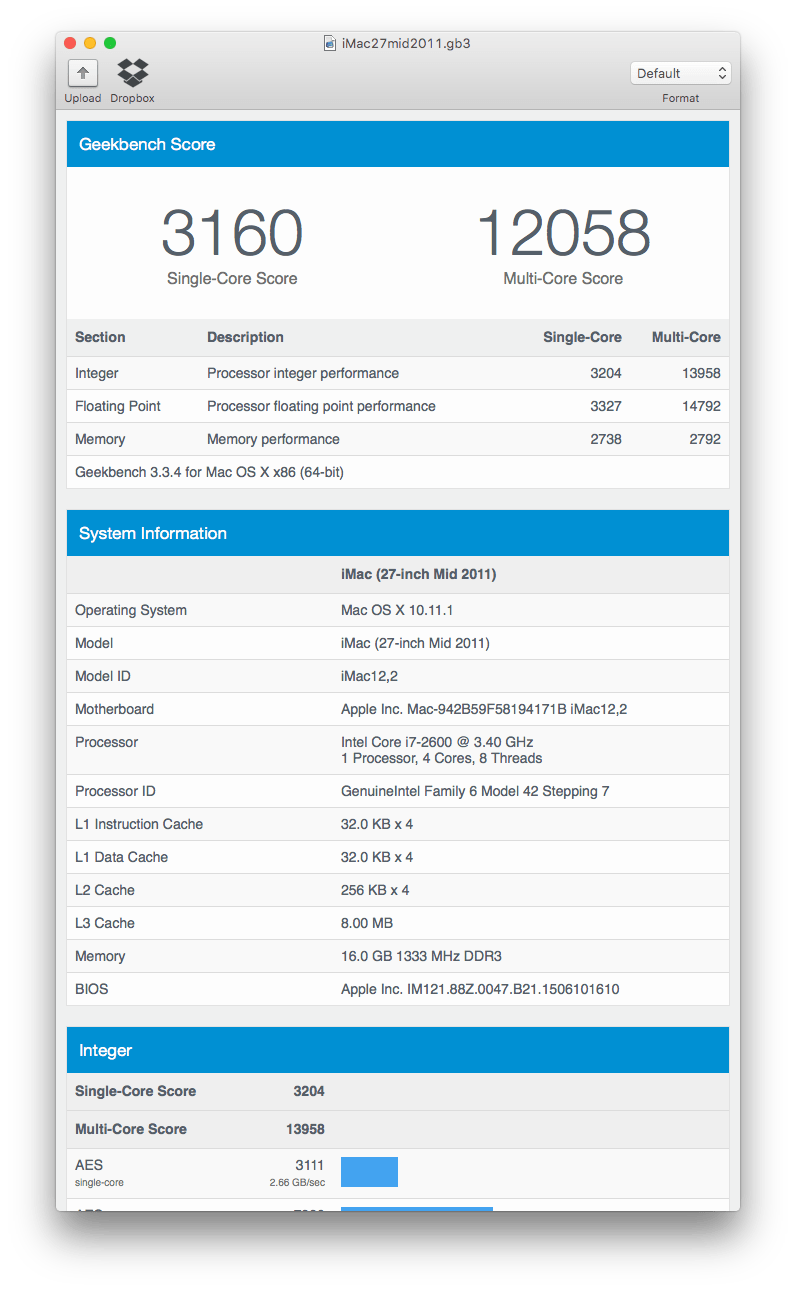

The purest measures of processor performance can be obtained from tools such as Geekbench (£4.49 from the Mac App Store). These run a series of tests designed to measure different aspects of the processor, such as its speed performing simple arithmetic operations using integers (whole numbers), and floating point (decimals).

Geekbench is unusual in that it is cross-platform, so that you can compare similar processors running OS X, Windows, Linux, and now even iOS. Although these abstract benchmarks are useful for comparing different models, there is a significant gap between them and performance in the real world.

So whilst Geekbench’s array of results is a good start, you also need to devise your own real-world benchmarks. Avoid obvious but largely irrelevant measures, such as the time taken to start an application up. That depends on which other applications are running and on disk access performance, and is hardly something that you do frequently when using your Mac.

Instead use typical tasks that take time, such as transcoding video, or processing a very large image. Choose a subject file that will take a period of time to process, ideally between 30 and 60 seconds, so that you can time it accurately. Save a master copy of the benchmark file, and write a short note describing exactly how to run the test and how to time it.

When running benchmarks, you need to reduce factors that cause variation in results, unless these are essential to the test. Check when the next Time Machine backup is due to run, and avoid clashing with that, as it will substantially impair disk and processor performance. Quit all other applications that are not required for the test, and close non-essential windows.
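
If your benchmark task can be scripted, repeating it and looking at the spread of timings shows how much variation remains. The sketch below is one possible harness in Python, not a replacement for a stopwatch on a real application: the workload inside it is a placeholder that you would swap for your own task, and the function names are hypothetical.

```python
import statistics
import time


def time_runs(task, repeats=5):
    """Run task() `repeats` times and return the elapsed seconds of each run."""
    durations = []
    for _ in range(repeats):
        started = time.perf_counter()
        task()
        durations.append(time.perf_counter() - started)
    return durations


def summarise(durations):
    """Median, fastest and slowest run, and the spread as a percentage of the median."""
    median = statistics.median(durations)
    fastest, slowest = min(durations), max(durations)
    spread = (slowest - fastest) / median * 100 if median > 0 else 0.0
    return {"median_s": median, "min_s": fastest, "max_s": slowest, "spread_pct": spread}


if __name__ == "__main__":
    # Placeholder workload: replace with your own real-world task.
    results = summarise(time_runs(lambda: sum(i * i for i in range(3_000_000))))
    for name, value in results.items():
        print(f"{name}: {value:.3f}")
```

A large spread between the fastest and slowest runs is a sign that something else, such as a backup or another application, is interfering with the test.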

Techniques: Investigating Performance

When you are considering replacing or modifying your Mac to improve performance, it is essential to understand where its performance is being limited. There would be no point in buying a model with lots of cores if the software that you use can only run on a single core, and your money would be better invested in buying a better disk drive if your old, slow hard disk was the present bottleneck.

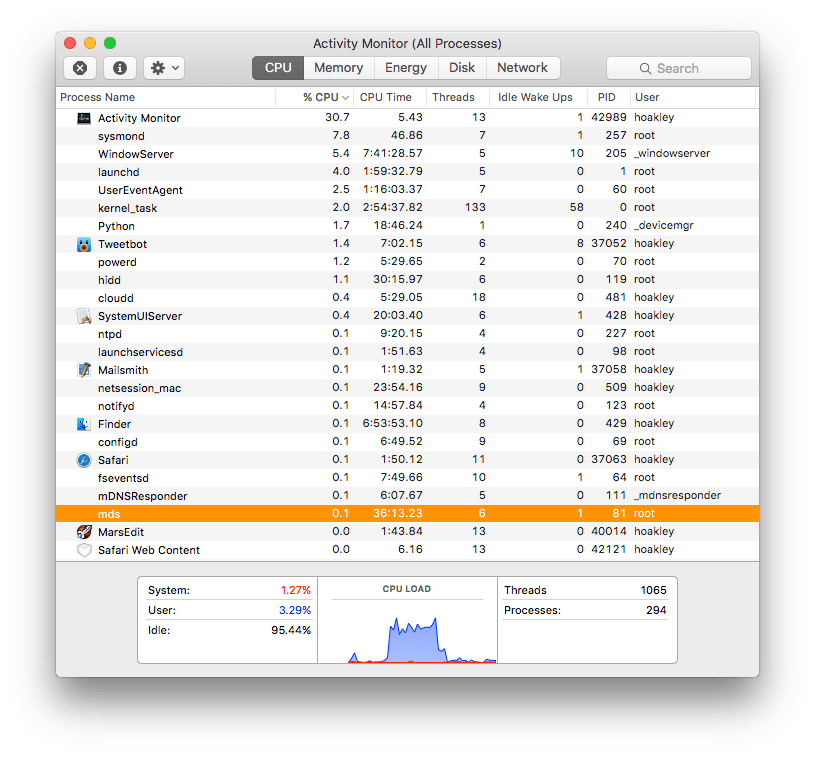

The most essential tool in studying what is going on in your current Mac is the Activity Monitor utility, in particular its floating windows that show processor core load. Open these and watch their bars as you go about your normal activities. You may be surprised at how much of the time your cores are very lightly loaded, and how evenly balanced the load across them is when they are working hard. Other bar charts in the main process window can give valuable information on network and disk load, which will guide you in deciding which components in your system are forming its bottlenecks.

The two snags with Activity Monitor are that it modifies processor load, and that it cannot tell you which features in OS X are being used to enhance performance.

It is one of the basic lessons from modern physics (quantum mechanics) that when you try to measure a system, you inevitably influence and alter the system that you are trying to measure. Activity Monitor imposes its own load on processor cores and graphics, although this is light in comparison with that of heavyweight applications.

To discover whether your Mac has the ability to use OpenGL and OpenCL acceleration, use a tool such as OpenGL Extensions Viewer (free from the App Store). CompuBench CL Desktop Edition (also free from the App Store) is a cross-platform OpenCL benchmarking app which you may find useful. However, even those cannot inform you how much an application benefits from such features.

Further advice on hardware upgrading for improving performance is here, and on memory management is here.

Updated from the original, which was first published in MacUser volume 27 issue 23, 2011.