OCR was supposed to be an essential tool for turning scanned documents from images into searchable text content. Does it, or has it still failed to deliver?

The best method for teaching children to read is hugely controversial, but once we have mastered the skill, the fastest and best readers recognise not individual letters, or even words, but altogether larger chunks of text. Even when working through faded typescript, scrawly handwriting, or the most extreme of fonts, most of us read accurately and at speed. So how, after decades of research investment, can we still not match that in software? Is Optical Character Recognition (OCR) software consistently inferior in its reading accuracy, and if so, how?

The paperless office saga

OCR was touted as central to the strategy for a paperless office. Recognising that companies and organisations have voluminous legacies of paper records, the solution was a combination of expensive high-speed flatbed scanners and state-of-the-art OCR software that would automatically convert the contents of those thousands of pages into searchable text. In the late 1980s, vendors like Caere Corporation made handsome profits from OmniPage and its competitors before the market cooled.

Offices that had invested substantial sums in scanners and OCR products discovered the real meaning of 98% character accuracy: on an average page there would be ten or more errors, and when scan images or original source material were less than perfect, every other word could be garbled.

Few had even considered the effort or cost involved in proof-reading and correcting OCR output, making content searches a gamble at best. When you are looking for the word ‘contract’, you will miss those instances in which it has been misrecognised as ‘contact’, a problem that fuzzy search features have tried to alleviate. HP’s flagship OCR engine, Tesseract, had further development frozen in 1995, despite being one of the best three performers. It was released as open source ten years later.

But OCR is far from dead. The App Store offers over 50 products, including relatively expensive heavyweights like ABBYY’s FineReader OCR Pro and ExactScan Pro, each around £70-£90. Adobe Acrobat Pro 2015 also features an embedded OCR engine which you can apply to turn scanned PDFs into searchable files, as do some other PDF workhorses like PDFpenPro and PDF Connoisseur.

Is it so hard?

The task attempted by OCR is not simple. Working from a bit-mapped image of a document, it first has to separate each character or glyph, analyse and match it to one in the character set used in the recognition language. Dividing blocks of characters into individual words, each is then checked against its dictionary, and the word that fits best is then output. When no word is a good fit to the characters that have been found, it makes the best of a bad job by giving the characters and marks that have been recognised, in the hope that this might resemble the original document.

At the moment, no mainstream OCR product attempts the next step that humans are so good at: assembling words into the context of a sentence or larger unit; this is partly because of remaining shortcomings in computer comprehension of natural language.

Crisp, perfectly-oriented high-resolution page scans with every character distinct and separate, and free of idiosyncratic vocabulary, are ideal. When the font used is fairly plain and leaves no ambiguity, good modern OCR software can recognise page after page with barely a glitch. The further that your material departs from that ideal, the more likely you are to get better value for money and results from paying a human to copy type it in.

Handwriting

Although online handwriting recognition has become a useful feature in tablets and to a lesser degree in OS X (Inkwell), OCR software usually fails dismally at offline recognition of handwriting, which is a very different task. Online recognition expects letters to be formed in stroke sequences, to be entered individually, and relies on much more than the virtual ink left behind after the writing act. Furthermore, online recognition systems often require at least some training to the quirks of each individual hand. Few OCR products are trainable, because the techniques that they employ lack simple parameters than can be tuned to improve recognition.

Non-English languages

The last fifteen years have seen great improvements in the range of languages over which OCR products are effective. These include European and other languages that use fairly standard Roman alphabets, more complex systems with a rich range of accents and marks, such as Hungarian, non-Roman alphabets such as Cyrillic (Russian) and Greek, Arabic and Hebrew, and even East Asian ideographic systems such as Chinese and Japanese.

If you are paying more than £50 for your product, you should expect it to support at least 100 languages, including many of which you may not have heard. Not all of these will have the benefit of spelling dictionaries, though, making accuracy rates slightly lower. Better products will also allow simultaneous recognition of two or more languages in the same document.

For free?

OCR is also available in open source projects such as HP’s Tesseract-ocr and its successor OCRopus. At the moment, neither has been wrapped in more friendly form for OS X, and only Tesseract is readily compilable to a command shell tool, if you have Apple’s Xcode SDK.

Several PDF tools, including Adobe’s own Acrobat Pro, include their own OCR engine. This is particularly relevant for PDF files, which can contain images of text (for instance, from scanned pages), and the same text laid out as characters. Businesses looking for simpler workflows are often tempted to use these applications to scan, convert to PDF format, and use OCR to make the content searchable. However in practice recognition performance is likely to be more limited than that of a dedicated OCR package, which may well include scanner support and generate thoroughly reliable PDF output incorporating both the original images and recognised text.

Reality

For the occasional user, any of the leading packages should provide good performance, high accuracy, and little need for correction. However you will still need to check and amend pages after they have been through the OCR process. If you need coverage of foreign languages, then provided your chosen app has full support, you will probably find its accuracy greater than that of a non-native typist.

More major tasks, such as converting an archive of paper documents, or long books into fully accessible files, merit careful assessment of the contenders before you commit to one. It is a significant shortcoming of the App Store that you cannot screen their performance in the way that iTunes allows you to preview music. You will also need to budget time and money to ensure that content is read carefully in proof before being committed to your electronic archive, or its users will complain.

If you need support for content that current OCR products do not cater for – unusual languages, strange scripts, or worst of all, handwriting – then there is no easy answer. You may find that investing time training the Tesseract engine to cope with a language is worthwhile, but it would then be worth asking the advice of those involved in the project. Ultimately you may find it quickest and most efficient to get a human to do the recognition for you. We still have our uses.

Tools: FineReader OCR Pro version 12.1.4

Tools: FineReader OCR Pro version 12.1.4

Only just upgraded to be compatible with El Capitan, FineReader OCR Pro has support for almost every language you are likely to encounter this side of Papua New Guinea, although I notice that Georgian is still oddly missing. It now also offers recognition for a small range of programming source code languages, including C/C++ (but not Objective-C or Swift) and Java.

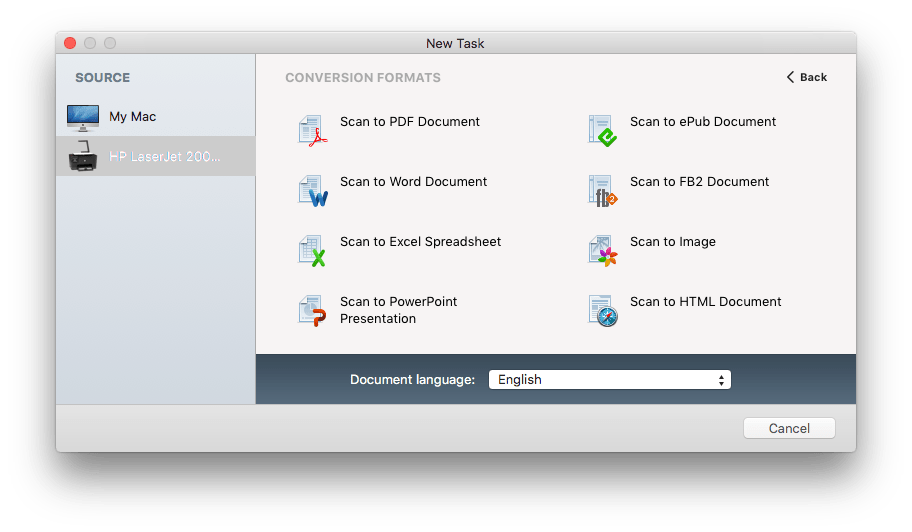

It can either work on previously-obtained images, or can access scanners supported by OS X direct, offering clean and efficient workflows. Although the range of destinations on offer seems to omit some favourites, such as scanning to text, those are actually options within these top-level formats.

It can either work on previously-obtained images, or can access scanners supported by OS X direct, offering clean and efficient workflows. Although the range of destinations on offer seems to omit some favourites, such as scanning to text, those are actually options within these top-level formats.

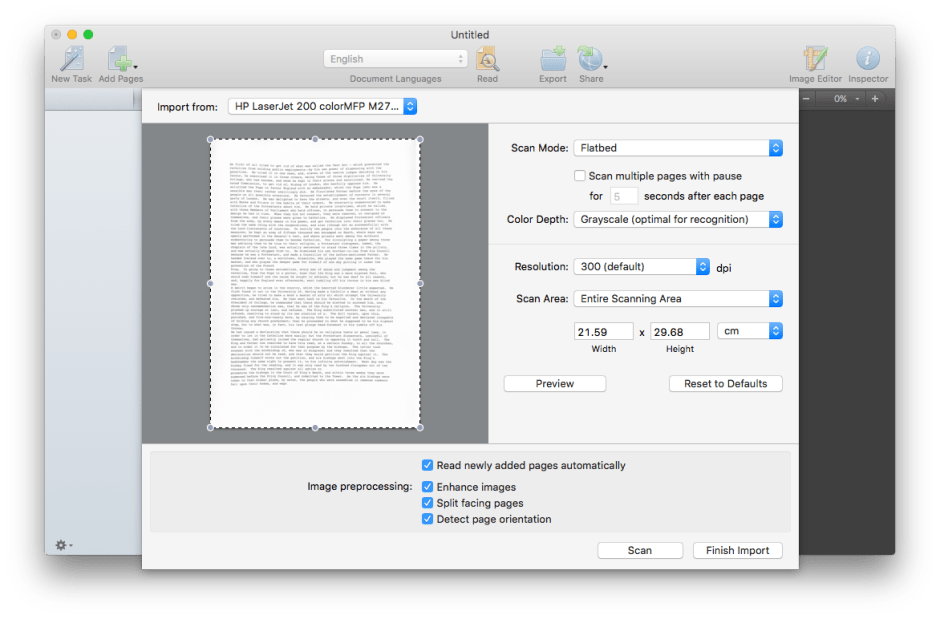

Scanner controls are essentially similar to those used when working directly with your scanner, through the Printers & Scanners pane. Once a page has been previewed, click on the Scan button to capture an image ready for OCR, and click on the Finish import button when that is complete.

Scanner controls are essentially similar to those used when working directly with your scanner, through the Printers & Scanners pane. Once a page has been previewed, click on the Scan button to capture an image ready for OCR, and click on the Finish import button when that is complete.

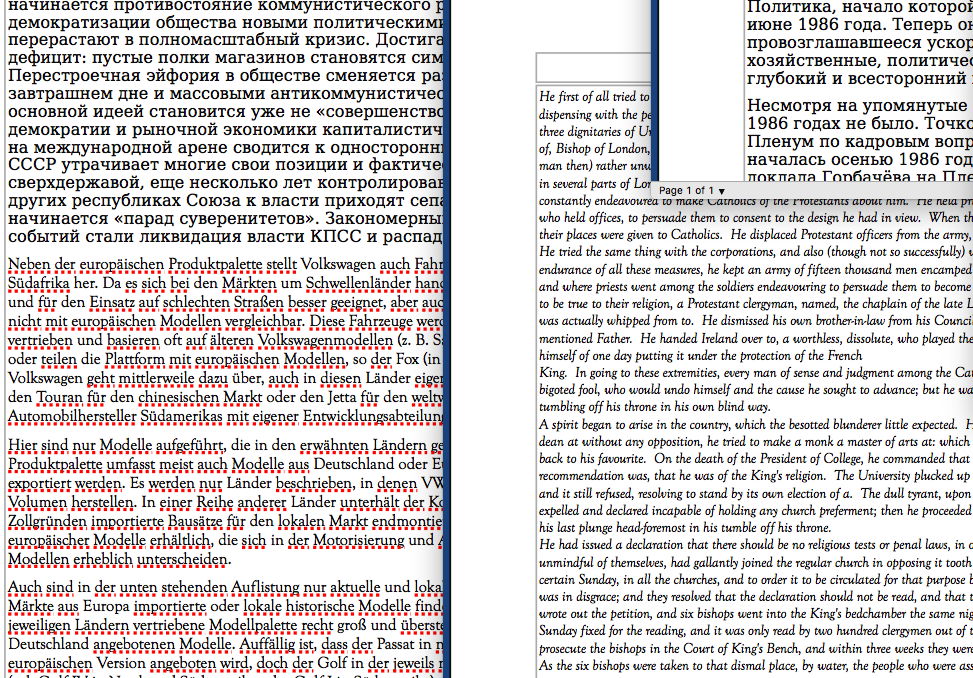

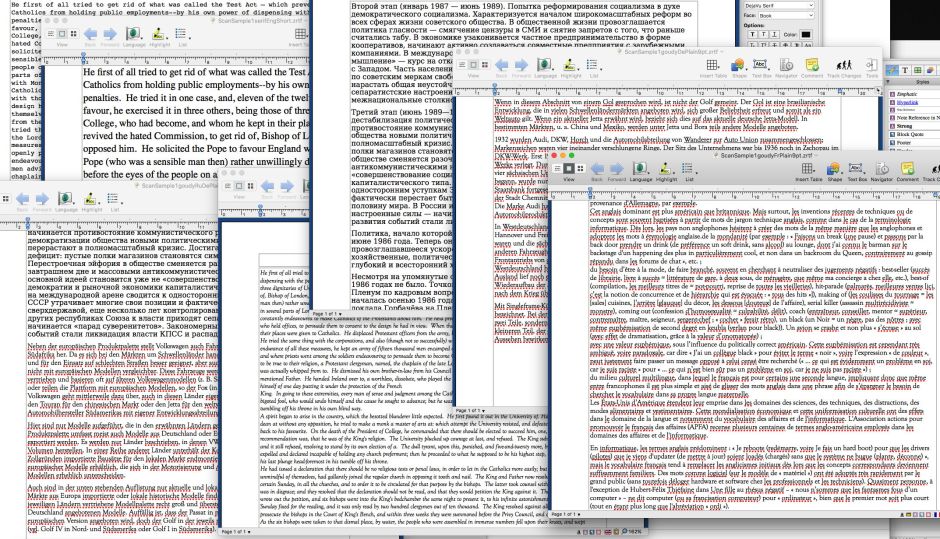

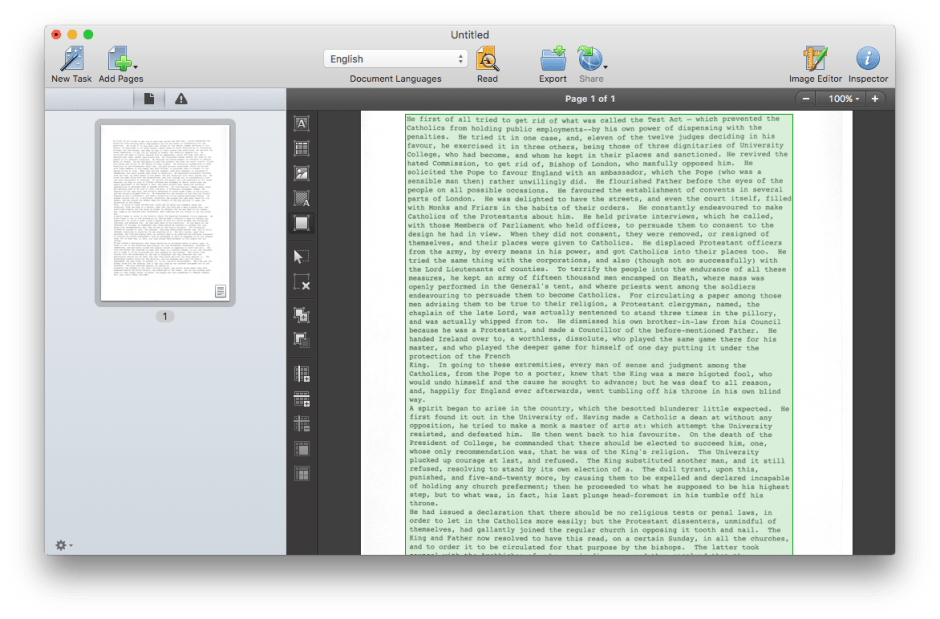

Once scanned, each page is passed to the OCR engine, which recognises blocks of text and may then offer to save the completed document automatically.

Once scanned, each page is passed to the OCR engine, which recognises blocks of text and may then offer to save the completed document automatically.

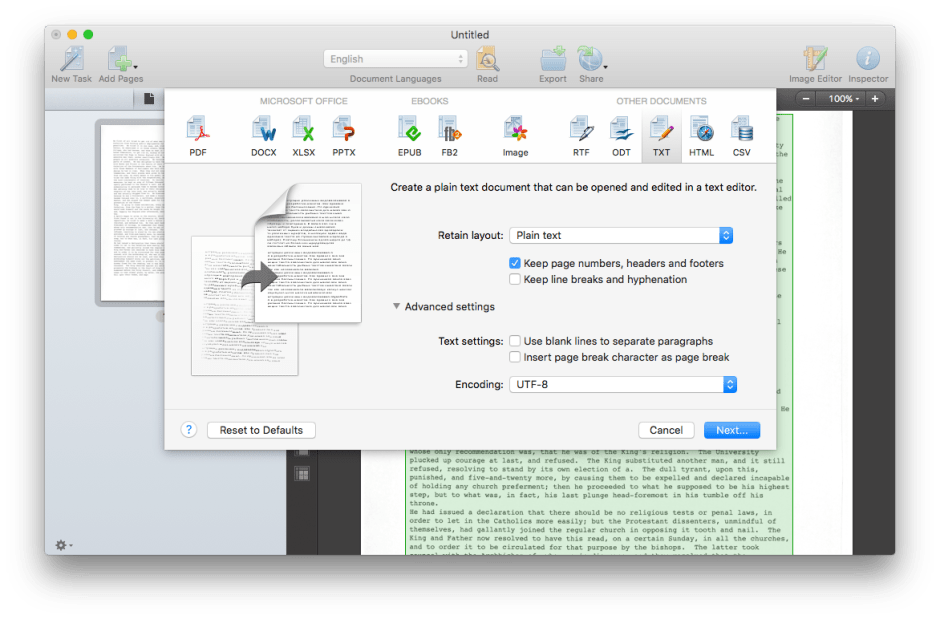

You can also opt to export in any of a very wide range of different formats. Each has a good choice of formatting options. Settings such as these persist between scans, making the process efficient and fuss-free.

You can also opt to export in any of a very wide range of different formats. Each has a good choice of formatting options. Settings such as these persist between scans, making the process efficient and fuss-free.

Technique: The Best Image

Providing your OCR software with perfect images makes a very big difference to its recognition performance. For comparative purposes, I presented ABBYY FineReader Express (2012) and FineReader OCR Pro (2015, version 12.1.4) with a range of different scanned documents, and manipulated images. Text generated was then compared against the original content using BBEdit, counting all errors other than those in white space.

Scans were performed using an HP TopShot all-in-one, as its overhead camera introduces some distortion compared to a slower flatbed. Surprisingly, although that optical distortion was greatest towards the corners of the sheets used, it did not correlate with recognition errors, showing how robust FineReader is to such issues.

A high quality 300 dpi scan of one of the most challenging versions of my test document (846 words set in 9 point Goudy Old Style) resulted in an error rate of 1.2 per thousand words, which rose to 9.5 when using a high-quality image at only 72 dpi. Using 72 dpi scans in non-lossy PNG or lossy JPEG format brought the rate to 4.7. This shows how important it is to use faithful high resolution images for OCR.

Degrading the quality of the image had significant consequences too. A slight blur, as might appear in an original that has been damaged or smudged, gave an error rate of 7.1, addition of 10% noise to a crisp image resulted in a rate of 17.7, and 20% noise doubled the error rate to 35.5. Interestingly today’s FineReader OCR Pro proved far superior to FineReader Express of three years ago: then, 10% noise resulted in an error rate of 67.4, and 20% rendered the text unusable.

If you cannot obtain high quality scans of published documents yourself, you may be able to locate good library copies in either PDF (image only) or DejaVu format. These can then be converted to page images ready for OCR.

Results: Fonts, Formats, and Foreigns

Choice of OCR software plays a major part in determining the rates of recognition error. Using scanned crisply laser-printed English documents, an excerpt from Charles Dickens with most proper names removed, ABBYY FineReader Express (2012) and FineReader OCR Pro (2015, version 12.1.4) performed almost flawlessly across a range of most ‘standard’ fonts and sizes, and Acrobat Pro 2015 had similarly low error rates for the much more limited range of languages which it supports. It was good to see that the current version of Acrobat Pro 2015 achieved much better accuracy than had Acrobat Pro X three years ago.

The nature of the text being recognised is another key factor: for English tests, all words in the test should have been in the OCR app’s dictionary. Feed it technical content, particularly if words are complex and beyond the dictionary, or manipulated in strange ways as in many linguistics works, and you can expect errors to mount.

Departing from standard fonts can induce more errors too. Whilst FineReader OCR Pro romped through Times, Helvetica, Courier, and the finer serifs of Goudy Old Style at 9 points and greater, setting the small Goudy into italic drove the rate up to 16.5 per thousand. FineReader has improved markedly in its performance on plain and italic Goudy Old Style at 9 points when compared with three years ago. Because of differences in the OCR engines in the apps available, font effects will vary according to product.

FineReader boasts very rich support for non-English languages and even non-Roman scripts, and can comfortably cope with up to five different languages in each page; using more slows recognition quite markedly. Increasing the size of the Goudy Old Style to 10 points, it recorded error rates of 12.3 on French (slightly worse than three years ago), and 9.5 per thousand on German (significantly better). Russian set in DejaVu Serif at 10 points impressed with no errors at all, whilst a page containing both German and Russian was a breeze at 6.9 per thousand words: something which probably does outstrip a human.

Its performance on programming source code was not so impressive, though. C and Objective-C came through with 5-6 errors per page, making thorough checking of any OCRed source code essential. This perhaps illustrates how important dictionaries are to its performance on normal languages. If printed out code has line numbers and other page decorations, then you will have to be very careful to strip those out.

Updated extensively from the original, which was first published in MacUser volume 28 issue 16, 2012.