In the first article in this series, I gave a broad overview of viral phenomena, and why there is such interest in them. This second article tries to establish what we know about real intensely viral events, particularly in terms of their triggering and evolution.

What to look for

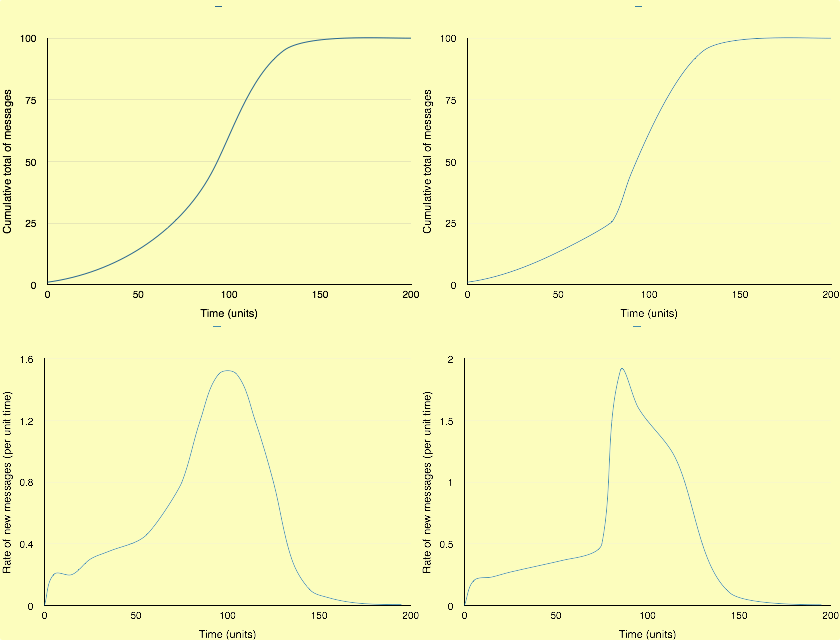

Above I show two possible patterns of intensely viral event, one on the left and the other on the right. For each I show two different graphs: the upper gives the cumulative total of messages over the time course of the event, from inception to its peak; the lower shows the rate of generation of new messages over that period.

Nahon & Hemsley and many other authors consider that the rise to peak virality follows a sigmoid path, as shown on the left. They also stress the need for a sharp acceleration in the number of people who are exposed to the message; that could appear as a step change in rate of rise, the point of inflection shown at about 80 time units in, on the curves on the right.

The difference between these two models may appear subtle, but in terms of mechanism it is important. A steady rate of growth in messages, as on the left, can be predicted by properties expected in a network alone, whilst the ‘critical point’ on the right suggests that there may be other factors involved, perhaps that referred to by some as ‘critical mass’, and is likely to need special explanation in any model.
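To make the distinction concrete, here is a minimal Python sketch of the two patterns. The logistic form, the piecewise-linear rate, and every parameter value (`total`, `midpoint`, `width`, `critical`, `slow`, `fast`) are illustrative assumptions chosen to resemble the arbitrary-unit curves described above, not values fitted to any data.

```python
import math

def logistic_cumulative(t, total=100.0, midpoint=100.0, width=15.0):
    """Cumulative messages under a smooth sigmoid (logistic) model."""
    return total / (1.0 + math.exp(-(t - midpoint) / width))

def logistic_rate(t, total=100.0, midpoint=100.0, width=15.0):
    """Rate of new messages: the time derivative of logistic_cumulative."""
    f = logistic_cumulative(t, total, midpoint, width) / total
    return total * f * (1.0 - f) / width

def kinked_rate(t, critical=80.0, slow=0.001, fast=0.012):
    """Rate that creeps up until a critical point, then climbs steeply."""
    if t <= critical:
        return slow * t
    return slow * critical + fast * (t - critical)

def kinked_cumulative(t, critical=80.0, slow=0.001, fast=0.012):
    """Cumulative messages: the integral of kinked_rate from 0 to t."""
    if t <= critical:
        return 0.5 * slow * t * t
    dt = t - critical
    return 0.5 * slow * critical ** 2 + slow * critical * dt + 0.5 * fast * dt * dt

def rise_in_rate(rate, t, h=1.0):
    """Finite-difference estimate of how fast the rate itself is rising."""
    return (rate(t + h) - rate(t)) / h

if __name__ == "__main__":
    # Compare how the rise in rate behaves either side of t = 80.
    for t in (60, 70, 79, 80, 90, 100):
        print(t,
              round(rise_in_rate(logistic_rate, t), 4),
              round(rise_in_rate(kinked_rate, t), 4))
```

In the logistic model the rise in rate changes smoothly from one time step to the next, whereas in the kinked model it jumps from `slow` to `fast` at the critical point. That jump is the feature which real, noisy data would have to reveal.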

Scaling the axes is important, and best attempted using some real data.

During the recorded event of the Keith Urbahn tweet about the death of Osama bin Laden in 2011 (Nahon & Hemsley, page 52), the total rate (for all tweets) rose from a background of about 2700 tweets/s to a peak of just over 5000 tweets/s, in about 40 min (2400 s). Subtracting that background, the likely peak rate of tweets about the death was around 2400 tweets/s, declining rapidly thereafter. The more sustained high rate of about 4500 tweets/s (total) may result from other sources of traffic, so for this purpose I will assume that the Urbahn tweet decayed in frequency after that peak, and would have been swamped by tweets originating from other sources after a total life of around 90 minutes.

For those observed figures and rates, the X axis would thus scale from the 0-200 arbitrary units shown to 0-5000 seconds. The Y axis in the upper graphs (cumulative total of tweets) would scale from 0-100 to 0-3 million, but in the lower graphs (rate of tweets) would scale to 0-2500. I show these below. Note that the initial section of the curve in the upper graph, which appears to be below the X axis, is an artefact of curve fitting, and that the cumulative total during that period was above zero throughout.
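The rescaling is simple linear arithmetic, sketched below in Python. The `rescale` helper is a hypothetical name, and the cross-check at the end rests on an assumption that is not in the source: that the event rate climbs in a straight line from zero to its peak.

```python
def rescale(value, old_max, new_max):
    """Map a value on a 0..old_max axis linearly onto a 0..new_max axis."""
    return value * new_max / old_max

# Axis ranges quoted in the text.
seconds_per_unit = rescale(1, 200, 5000)       # X axis: seconds per arbitrary unit
tweets_per_unit = rescale(1, 100, 3_000_000)   # upper Y axis: tweets per arbitrary unit

# Assumption for the cross-check only: a straight-line rise in rate, so the
# cumulative total at the peak is the area of a triangle.
peak_rate = 2400    # tweets/s, from the text
rise_time = 2400    # s, from the text
triangle_total = 0.5 * peak_rate * rise_time

print(seconds_per_unit, tweets_per_unit, triangle_total)
```

Each arbitrary time unit then corresponds to 25 s and each unit on the upper Y axis to 30,000 tweets. Under the straight-line assumption the cumulative total at the peak comes to 2.88 million tweets, which sits comfortably within the 0-3 million range.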

In studying the mechanism of virality our greatest interest is in its initiation: the period from inception to the maximum rate of rise in rate, the steepest section of the curve in the lower graph. In this case, that is the period during which the rate of tweets remained below 500 tweets/s. If there is a point of inflection or ‘critical mass’, it is there that it should be manifest, not when the rate of tweets has exceeded 500 or even 1000 tweets/s, when virality has already been established.

Of course these are theoretical and beautifully smooth curves. In reality this type of observation is inherently noisy. Message numbers could be measured precisely, but even in large systems they will have a lot of jitter about their trend. To get sufficient information to perform a good regression to a model line, one which can distinguish whether there is a step change, for instance, we need hundreds of data points, and therefore a high time resolution.
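As a rough guide to how much jitter to expect, one common simplifying assumption (not made in the sources cited here) is that tweets arrive as a Poisson process, so that a time bin expected to hold n tweets scatters by about the square root of n. The Python sketch below uses that assumption; the function names and the example figures of 300 data points over 1500 s are hypothetical.

```python
import math

def bin_width(duration_s, n_points):
    """Width in seconds of each bin when a period is cut into n_points bins."""
    return duration_s / n_points

def relative_jitter(rate_per_s, bin_s):
    """Expected scatter of a bin count as a fraction of its mean,
    assuming Poisson arrivals: sqrt(n) / n = 1 / sqrt(n)."""
    expected = rate_per_s * bin_s
    return 1.0 / math.sqrt(expected)

# Hypothetical example: 300 data points across a 1500 s early phase.
width = bin_width(1500, 300)
for rate in (1, 5, 50, 500):   # tweets/s
    print(rate, f"{relative_jitter(rate, width):.1%}")
```

With 5 s bins, a rate of 500 tweets/s puts 2500 tweets in each bin and scatters by about 2%, whereas 5 tweets/s puts only 25 in each bin and scatters by about 20%. Finer time resolution therefore costs precision in each point, and the cost is greatest at the low rates which matter most.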

Other intensely viral events, such as that centred on the unfortunate Justine Sacco, are slower and of lower total volume. I have been unable to discover any published estimate of the total number of tweets which composed her ‘Twitterstorm’ on 20 December 2013, but suspect that it would have been of the order of 20,000 to 50,000, over a period of 10-20 hours, or 36,000-72,000 seconds. In such cases the crucial period of interest during the early phase of the event would have occurred at far lower tweet rates than that of the Urbahn event.
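Those suspected totals translate into very low average rates, as this short Python calculation shows. The inputs are the estimates given above rather than measured values, `mean_rate` is a hypothetical helper, and an average over the whole event is only a crude guide to the rate during its early phase.

```python
def mean_rate(total_tweets, duration_s):
    """Average tweets per second over the whole event."""
    return total_tweets / duration_s

lowest = mean_rate(20_000, 72_000)    # fewest tweets over the longest period
highest = mean_rate(50_000, 36_000)   # most tweets over the shortest period
urbahn_peak = 2400                    # tweets/s, estimated above for the Urbahn event

print(f"{lowest:.2f} to {highest:.2f} tweets/s on average")
print(f"Urbahn peak is about {urbahn_peak / highest:.0f} times the higher average")
```

Even the more generous combination averages well under 2 tweets/s, around three orders of magnitude below the estimated Urbahn peak.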

What has been reported

In a nutshell, I have been unable to locate any good data showing such an intensely viral event, despite searching many papers and several books.

The Urbahn Twitter event is probably the best-described, meriting over ten pages in Nahon & Hemsley, but the only data shown for the complete event are for total tweets/s traffic in their Figure 3.5, page 52, and do not filter event from other traffic. It is taken from the Twitter image stored here. All other data given are for small analytical subsets for individual Twitter accounts, and Hu et al. provide limited data from an intermittent small sample of the total Twitter traffic.

Nahon & Hemsley provide one example of data which they claim exhibits a sigmoid curve, in Figure 2.2 (page 23). Those data are derived from views of a video logged by referring blogs, with datapoints at daily (86,400 s) intervals. They do not therefore comply with their requirements for simultaneous reception and propagation over a “short period of time”, and in any case there are insufficient sampling points on the X axis to attempt anything useful with those data, which could reasonably fit a wide range of different models.

Despite voluminous coverage in a wide range of media, traffic figures or further analyses for the Justine Sacco event do not appear to have been made public. The only other intensely viral event for which traffic data do appear to be widely available is the record tweet rate, set on 3 August 2013, when 143,199 tweets were sent in a single second during the broadcast of the movie Castle in the Sky in Japan. However, those data appear to have been filtered or smoothed prior to release.

Where to look

If we are looking for events which I describe as being intense, and which meet Nahon & Hemsley’s tight definition of virality, we need systems which respond very rapidly, within minutes or hours at the most, on which we can measure the rate of message spread both accurately and frequently. Thus the most appropriate has to be Twitter.

However, Twitter is also a very difficult choice, because of its relentlessly high volume, presently an average of around 5700 tweets/s. There are currently two ways of accessing tweets: the public sample stream (often loosely called the ‘Firehose’, although that name properly refers to the complete feed), which provides a sample of around 1% of the total tweets, i.e. an average of 57 tweets/s, or specific database requests using its RESTful API to return JSON data. The latter is ideal for network analyses of historic data, but is not intended to provide the sort of traffic information that this type of analysis needs.

A sample stream would need to be filtered to capture only those tweets with usernames and hashtags which are relevant to the event. Even assuming that such a filter were perfect (which is most unlikely even in retrospect), capturing the most important early phase of an intense viral event would depend on picking out up to 5 tweets/s from that average of 57 tweets/s, over a period of less than 1500 seconds (according to the example postulated above for the Urbahn tweet event).
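The scale of that filtering task can be estimated with a few lines of Python. The constants are the figures quoted above; the straight-line rise in event rate from 0 to 500 tweets/s is an assumption made purely for illustration, and `sampled` is a hypothetical helper.

```python
SAMPLE_FRACTION = 0.01     # about 1% of all tweets
TOTAL_RATE = 5700          # average tweets/s across Twitter
EARLY_PHASE_S = 1500       # upper bound on the length of the early phase
EVENT_RATE_AT_END = 500    # event tweets/s at the end of the early phase

def sampled(quantity, fraction=SAMPLE_FRACTION):
    """Expected amount of a rate or count that survives 1% sampling."""
    return quantity * fraction

# Assumption: the event rate rises in a straight line from 0 to 500 tweets/s,
# so the event tweets in the early phase add up to the area of a triangle.
event_tweets = 0.5 * EVENT_RATE_AT_END * EARLY_PHASE_S
event_in_sample = sampled(event_tweets)
all_in_sample = sampled(TOTAL_RATE) * EARLY_PHASE_S

print(sampled(EVENT_RATE_AT_END), sampled(TOTAL_RATE))
print(event_in_sample, all_in_sample, event_in_sample / all_in_sample)
```

On those assumptions the whole early phase would contribute roughly 3750 tweets to a sample of about 85,500, or between 4% and 5% of what arrives, and most of those would fall near the end of the phase rather than at its start.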

We must also bear in mind that over the last few years Twitter has built large non-English and non-Roman script memberships. Take a look at worldwide trending topics at any time and you will often see English topics in a minority. This is also neatly illustrated in this visualisation of trending topics.

This is looking for a needle in a large torrent of haystack, even performed retrospectively. It is therefore not surprising that published studies appear to have been unable to show such data.

Do they exist?

There seems no doubt from the literature, from media reports, and from personal experience that intensely viral events occur. Characterising their early stages remains a very difficult problem which requires sensitive analysis on a huge data stream, something which could only really be performed by Twitter itself.

So for the time being we can only speculate what might happen during the early stages of such an event. I do not think that it is currently tenable to assume that they follow a classical sigmoidal curve, although they might do. Neither do I see any evidence which could aid prediction of which events become intensely viral (and meet Nahon & Hemsley’s definition of virality), nor why.

References and further reading

Hu M, Liu S, Wei F, Wu Y, Stasko J and Ma K-L (2012) Breaking news on Twitter, Proceedings of the 2012 ACM Annual Conference on Human Factors in Computing Systems, 2751-4. CHI ’12.

Mejova Y, Weber I and Macy MW eds. (2015) Twitter, A Digital Socioscope, Cambridge UP. ISBN 978 1 107 50007 5.

Nahon K and Hemsley J (2013) Going Viral, Polity Press. ISBN 978 0 7456 7548 0.

Russell MA (2013) Mining the Social Web. Data Mining Facebook, Twitter, LinkedIn, Google+, Github, and More, 2nd edn, O’Reilly. ISBN 978 1 449 36761 9.