If we want to protect important documents from being corrupted or lost, it’s no good just knowing whether any particular copy of a file has been corrupted. You need some means of recovering or repairing the file so that your master copy is intact and fully usable.

One simple way to do this is to store two complete copies, something commonly done using RAID mirroring. When it works perfectly, it’s great, but is inefficient in terms of storage: you need twice the (total) space to store that file. When those can be 100 GB video, that quickly gets seriously expensive.

There’s another snag with a simple mirror: unless you monitor each copy of the file to ensure it remains intact, you could end up with both copies being corrupted. Although this might seem unlikely, most RAID mirrors are made up of hard disks. Once over about 3 years of age, the chance of one of the disks failing in the next year could be as high as 20%. Normally, that would mean the chance of both failing would be 4%, but in practice hard disks in RAID systems are usually from the same batch, and batch members are likely to fail at around the same time, so the chances of both disks failing in the fourth year could be 10% or greater.

Another recognised problem is that RAID mirrors, when they work properly, write exactly the same data to each of the disks. If the corruption is introduced at or before that stage, rather than in the RAID disk, then you will have two identically corrupt copies of the file.

One slightly smarter way to use copies of a file is to monitor not just the whole of each copy’s integrity (using checksums or digests), but to calculate those for parts of each file. Then if only the first block of a file has become corrupted, you don’t need to find a completely intact copy, but one which has that first block intact, by matching its partial checksum. However, that still doesn’t get around the requirement that you need at least two copies of each file.

The solution to this efficiency problem comes from coding theory – which covers data compression, encryption, error correction, and more. This encodes files in such a way that enables errors and corruption to be repaired with more efficient use of storage than by keeping duplicates. Much of this developed from work to recover data from noisy radio signals, so the introductory examples here apply to signal coding and to stored files with varying degrees of relevance.

In transmitted data, a similar approach to mirroring files is to send each bit in the data twice. To send a zero bit, you thus send two zeroes. If the receiver then receives two zeroes, it knows that they should represent a zero bit. What, then, if the receiver gets one of each, either 0 1 or 1 0? That is clearly an error, but could either have been 1 or 0 originally.

So a twice-repetition code can detect a single error bit, but can’t correct it. If both bits are errors, it won’t even detect it.

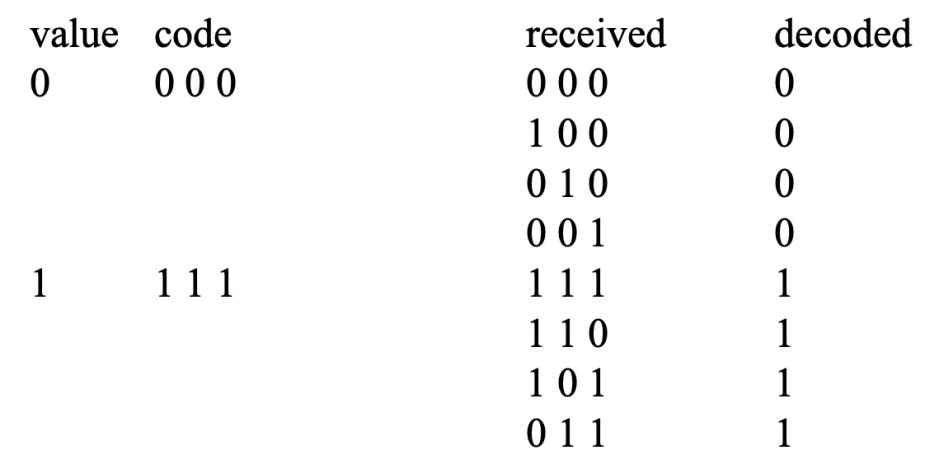

The next step in what are known as linear codes is to try repeating each bit three times. So to send the zero bit, you send three zeroes. If you look at the table below, you’ll see how this is more useful.

A three-times repetition code will not only detect but also correct all single-bit errors, recognising that 0 0 1 should be decoded to 0, for instance. It does this by mapping 1 bit into 3 bits, which is conventionally expressed as it being a (3, 1) code with a ‘code rate’ of 1/3. In storage terms, for each 1 bit of data it would require 3 bits of storage, which is even less efficient than mirrored disks.

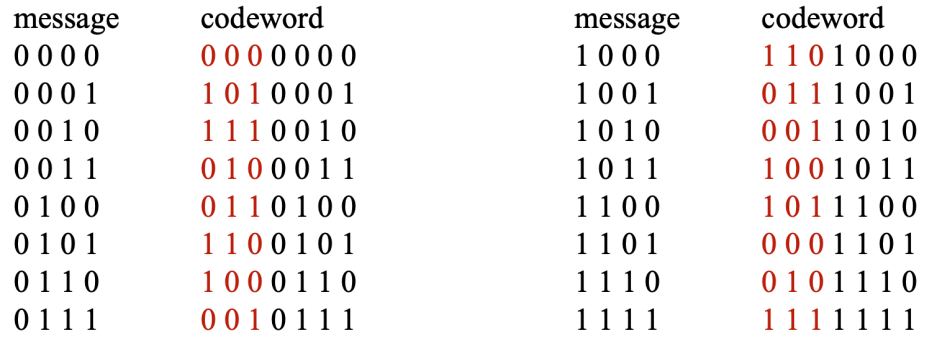

To start seeing where ECC gets to perform significantly better, we move onto a slightly more complex scheme, Hamming code. For this, messages or files are divided into groups of 4 bits, to which 3 bits are added to form the code. These are shown in the table below.

To send or store the four bits 0 1 1 1, we code those with three initial bits 0 0 1, making the complete code 0 0 1 0 1 1 1. In files, those three added bits are often known as parity data, and enable the code to correct all single-bit errors, and to detect all two-bit errors.

For example, if the code 0 1 1 1 1 0 0 is received, that doesn’t match any of the fully-correct codes. The only single-bit change this could represent is the code 0 1 1 0 1 0 0, which is decoded to 0 1 0 0. That could also have resulted from errors in two bits, although if that were the case, there’s more than one possible decoding. So as well as correcting all single-bit errors, the Hamming code also detects (but can’t correct) two-bit errors. It’s a (7, 4) code with a code rate of 4/7, and in storage terms for each 4 bits of data it would require 7 bits of storage. That’s more efficient than mirrored disks, although it isn’t able to correct as many errors.

Richard Wesley Hamming (1915-1998) published his code in 1950. Ten years later, Irving S Reed and Gustave Solomon developed what’s known now as Reed-Solomon code in their honour. These are still used in audio CDs, and can squeeze good levels of error correction for 28 bytes of raw data in just 32 bytes of storage.

Unfortunately, the maths of even basic Reed-Solomon codes is extremely complex and involves juggling polynomials, and to explain them fully invokes Galois fields, which would be a terrifying prospect for any of us.

Just like an audio CD, what a Mac can do is take the original file, divide it up into a series of blocks, then encode the data into a combination of ‘parity’ and original data. These can be stored together or, as in the Parchive format, in separate recovery blocks or parity files. What’s more, you can structure the data in those files so that they too can withstand modest levels of corruption.

There are theoretical and practical limits to what can be achieved with ECC. Obviously, a file whose contents have been completely replaced with zeroes or random bytes can’t be recovered from ‘parity’ data, whereas an intact copy of a file can replace a lost master copy. Depending on how well the chosen ECC performs, you can then make a decision as to the balance struck between ECC and complete copies: they are complementary techniques which work best to guard against different types of loss or damage.