For several weeks, I’ve been focussing on problems of file integrity in macOS. This is an issue which I think is extremely important, and merits more attention than it’s currently getting. In this article, I put my case.

This clay tablet is one of the oldest written records known, and has lasted over four thousand years. It doesn’t contain part of a great literary epic, or even the law of the day, but records the sale of a house.

We have made huge advances in recording important information since then, as my stacks of printed books and papers attest. Even fragile early photographs have survived more than 150 years. This example is doubly significant: the boy shown wasn’t a vagrant at all, but an early piece of fake imagery concocted by the pioneering photographer Oscar Rejlander.

Since around 1990, my conventional paper and photo records have steadily diminished as more are stored in electronic files. I now have thousands of important records of what I’ve done over those years. They cover my career; public work such as the research that I put into investigating the deaths of 51 people in the Marchioness capsize in 1989; all my software research (on AI) and development; and the childhood of our three children. I want to preserve these files so that they don’t die quietly of bit rot and electronic decay.

Over the last few years, the reliability of many types of storage media has improved. We now have low-cost access to Blu-ray discs, which promise more than a decade of reliable storage, and possibly significantly longer if manufacturers’ claims are anything to go by, even taken with the requisite spoonful of scepticism. Modern hard disks and SSDs also incorporate error-correcting technologies to improve their reliability.

In spite of those advances, we’re painfully aware that files do still become corrupted. Our understanding of the causes isn’t good, and if you look for recent measurements of the frequency of different types of corruption, including disk errors, file system errors, and ‘bit rot’, you’re going to be very disappointed.

In theory, no file should ever suffer any corruption, but every so often many of us prove that the problems haven’t been completely solved after all. I’ve been repeatedly told that most types of file corruption don’t happen any more, or are now impossible, which is great news. But the inescapable fact remains that some files do still go bad, for whatever cause, and no matter how careful we are in trying to keep perfect copies of them.

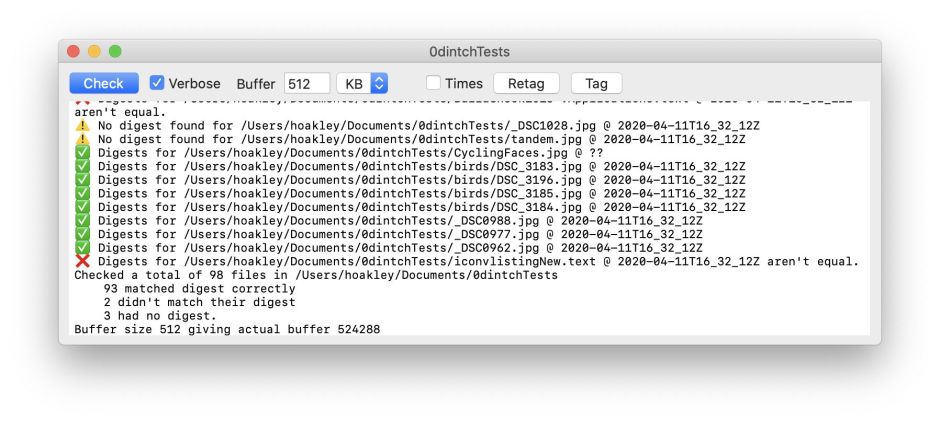

To investigate this, I’ve been deliberately corrupting many files using my new app Vandal. This isn’t intended to model any specific type of damage which they might sustain in real life, though. Several readers have commented that changing single bytes, as Vandal does, isn’t representative of the forms of damage most likely to occur.

Setting aside the fact that we can only guess at what is likely to occur, this isn’t meant to be a simulation. If a file format normally suffers irreparable damage when just one byte in a file has changed, then corrupting 512 bytes, or losing them altogether, can only be worse in its effects.
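Vandal’s own source isn’t reproduced here. As a minimal sketch of the kind of single-byte damage described above, the hypothetical Python function `corrupt_one_byte` replaces one randomly chosen byte in a copy of a file’s contents with a different value; how Vandal itself chooses offsets and replacement values is left as an open assumption. Working on an in-memory copy leaves the original file untouched.

```python
import random


def corrupt_one_byte(data: bytes, rng: random.Random) -> tuple[bytes, int]:
    """Return a copy of data with exactly one randomly chosen byte changed.

    Hypothetical helper for illustration only. Returns the damaged copy
    and the offset of the byte that was changed.
    """
    if not data:
        raise ValueError("cannot corrupt empty data")
    buf = bytearray(data)
    offset = rng.randrange(len(buf))
    # Adding 1..255 modulo 256 guarantees the new value differs from the old.
    buf[offset] = (buf[offset] + rng.randrange(1, 256)) % 256
    return bytes(buf), offset


def count_differences(a: bytes, b: bytes) -> int:
    """Count differing bytes, treating any length mismatch as extra differences."""
    return sum(x != y for x, y in zip(a, b)) + abs(len(a) - len(b))


if __name__ == "__main__":
    rng = random.Random(2020)  # fixed seed so the damage is reproducible
    original = bytes(range(256)) * 4
    damaged, offset = corrupt_one_byte(original, rng)
    print(f"changed byte at offset {offset}; "
          f"{count_differences(original, damaged)} byte(s) differ")
```

To test a real file format, you could read a sample file with `Path.read_bytes()`, write the damaged copy out under a new name, and then try opening it in the app that normally handles that format.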

The ever-present and unpredictable nature of file corruption is exemplified by a bug in APFS which seems to have appeared in macOS 10.15.4. This can lead to damage and failure during copying of very large files, and seems most likely when using SoftRAID, but it can also occur in AppleRAID. Although APFS has quickly established a reputation for reliability – it’s been in heavy daily use across more than a billion computers and devices for three years now – like any software it hasn’t been and isn’t faultless. That bug is particularly worrying, as it affects one technique which is commonly used to mitigate file corruption, that of storing backups on a RAID mirror array.

When traditional paper records, or even clay tablets, suffer the effects of decay, they usually deteriorate slowly: paper develops marks or foxing, and inks fade gradually. When most files become corrupted, even by just a single bit or byte, the effect is far more severe, and in many cases the file becomes unusable. Over the last few days, I have tested different file formats in common use by randomly corrupting just one or two bytes in test files.

The first action that we can take to preserve the integrity of files which are important to us, and to others, is to store them using formats which are relatively more resistant to the effects of corruption. To discover which formats are more resilient, theoretical arguments and experience can be useful, but the only hard data come from testing out the effects of deliberate and controlled corruption applied to test files.

Next, we need a simple method to detect whether any given file has been corrupted. If the only way to do this is to try opening files, checking thousands isn’t going to be practical. Some file systems, such as ZFS and Btrfs, provide this as a basic feature through built-in checksums, but it isn’t offered even as an option in either HFS+ or APFS, nor is such integrity checking built into the common backup systems used on macOS. Time Machine and other file-based backup systems will only too happily back up files which are corrupt and useless, and can’t ever be opened again.
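A practical alternative to opening every file is to record a cryptographic digest of each file while it’s known to be good, then recompute and compare digests later. The sketch below assumes SHA-256 from Python’s standard `hashlib` and a manifest kept as a plain dictionary mapping relative paths to hex digests (it could be saved as JSON alongside a backup). The function names are hypothetical, and this isn’t a description of any existing macOS feature.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so large files needn't fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def build_manifest(folder: Path) -> dict[str, str]:
    """Record a digest for every regular file below folder, keyed by relative path."""
    return {
        str(p.relative_to(folder)): sha256_of(p)
        for p in sorted(folder.rglob("*"))
        if p.is_file()
    }


def verify_manifest(folder: Path, manifest: dict[str, str]) -> list[str]:
    """Return relative paths that are now missing or whose digest has changed."""
    problems = []
    for rel, expected in manifest.items():
        p = folder / rel
        if not p.is_file() or sha256_of(p) != expected:
            problems.append(rel)
    return problems
```

A digest mismatch can’t distinguish corruption from a legitimate edit, so this approach suits files that shouldn’t change, such as archived records and photos. It also only detects damage: recovering from it still needs an intact copy or error-correcting data.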

In addition to being able to detect file corruption, we need methods of recovering undamaged copies of important files. You can do this by keeping several copies of each on a range of different storage media, but unless you can periodically verify their integrity, you could discover that more than one copy has become damaged. While this may appear unlikely over a period of a few years, it becomes increasingly likely over longer periods of storage. Hard disks in use typically have working lives of 3–5 years; SSDs are normally expected to become unusable after about 10 years; many so-called ‘archival’ optical media don’t even last that long before they too suffer catastrophic failure. The best solution may be to make archival copies to fresh media every five years or so, assuming that we can verify that master copies are still intact.
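Verification needs a reference point. As a hedged sketch of how several copies of one file might be checked against one another, the hypothetical `pick_intact_copy` below prefers a SHA-256 digest recorded while the master was known to be intact, and falls back to a simple majority vote among the copies only when no such digest exists.

```python
import hashlib
from collections import Counter
from typing import Optional


def digest(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def pick_intact_copy(copies: list[bytes],
                     known_good: Optional[str] = None) -> Optional[bytes]:
    """Choose an undamaged copy from several stored copies of one file.

    With a previously recorded digest, return the first copy matching it.
    Without one, return a copy whose digest is shared by more than half
    of the copies. Return None when neither test finds a copy.
    """
    digests = [digest(c) for c in copies]
    if known_good is not None:
        for copy, d in zip(copies, digests):
            if d == known_good:
                return copy
        return None
    if not copies:
        return None
    winner, votes = Counter(digests).most_common(1)[0]
    if votes * 2 > len(copies):
        return copies[digests.index(winner)]
    return None
```

With three copies, a single damaged copy is outvoted. If two copies have been damaged in different ways, no majority exists and the function returns `None`, which is the situation that periodic verification against a recorded digest is meant to catch before it happens.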

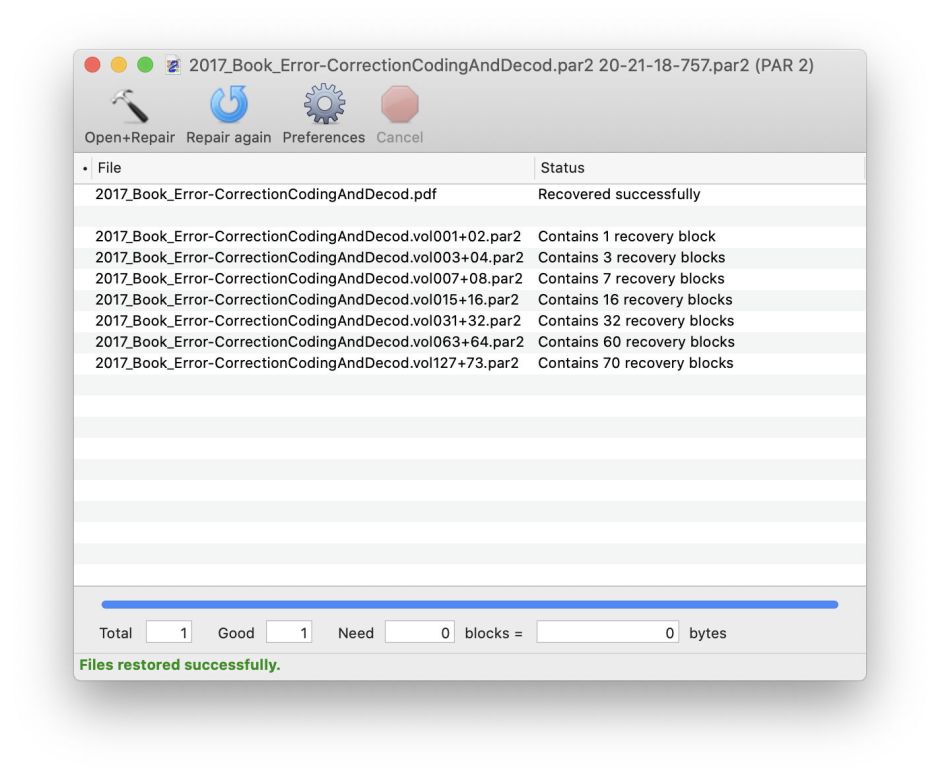

Another approach, which has been used very successfully over the last 70 years or so, is to use error-correcting codes (ECC). Although they’re now widely used in storage media, and even in QR codes and some barcodes, they aren’t offered as an option in HFS+ or APFS, nor in macOS itself. Tomorrow I’ll explain more about ECC and how it can be much more efficient than keeping multiple copies of files.
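The principle behind ECC can be illustrated with the classic Hamming(7,4) code, which adds three check bits to every four data bits and can locate and correct any single flipped bit in each seven-bit block. This is a textbook illustration in Python, not the scheme used by any particular storage device or by macOS; storage media and QR codes typically use stronger codes such as BCH, LDPC or Reed-Solomon.

```python
def hamming74_encode(d: list[int]) -> list[int]:
    """Encode 4 data bits as a 7-bit codeword laid out [p1, p2, d1, p3, d2, d3, d4]."""
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(c: list[int]) -> tuple[list[int], int]:
    """Correct up to one flipped bit.

    Returns (data bits, 1-based position of the corrected bit, or 0 if none).
    """
    b = [0] + list(c)  # pad so b[1]..b[7] match the usual 1-based positions
    s1 = b[1] ^ b[3] ^ b[5] ^ b[7]
    s2 = b[2] ^ b[3] ^ b[6] ^ b[7]
    s3 = b[4] ^ b[5] ^ b[6] ^ b[7]
    pos = s1 + 2 * s2 + 4 * s3  # the syndrome spells out the error's position
    if pos:
        b[pos] ^= 1
    return [b[3], b[5], b[6], b[7]], pos
```

The overhead here is three bits for every four, or 75%. By comparison, keeping a second copy costs 100% and can only show that the two copies differ, not which one is correct; a third copy, at 200%, is needed before a majority vote can repair the damage.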

For the moment, let me suggest that ECC, combined with resilient file formats and efficient detection of file corruption, can be an effective way of protecting the integrity of important files, and far more efficient than filling your storage with multiple copies. That’s where I’m heading, and I hope you can now understand why and how. If we don’t take such steps, it may be time to return to clay tablets after all.