One major change in Mojave which has caused a lot of problems is its new approach to privacy, in TCC (Transparency, Consent and Control). Although it was described over six months ago at WWDC, the presentations given there remain the least incomplete documentation, and Apple has neglected to provide anything by way of a systematic guide. This has left users and developers largely in the dark, stumbling along by trial and error.

The simplest summary of app signatures and Mojave’s new privacy is that, in general, signatures are not required to negotiate privacy controls successfully. Of course it’s not quite as simple as that. Plenty of users attribute problems with privacy protection to app signatures, and it’s hard to be certain whether they’re right or wrong. There is, though, one compelling reason to get a Developer ID and sign with it, which I’ll explain later.

As a test for this article, I took one of my notarized apps which has detailed privacy settings, and stripped out its signature and notarization ‘ticket’. It was still perfectly capable of gaining access to protected areas, despite the fact that it then had a serious signature error of -67023 “invalid resource directory (directory or signature have been modified)”.

This is in accordance with observed behaviour of TCC in the face of unsigned apps, those with revoked signatures, and other major problems. Although TCC frequently calls for checks of signatures, it behaves very differently from Gatekeeper. It doesn’t have any equivalent to quarantine: each attempt by an app to access protected data or services is considered afresh, and evaluated against the app’s Info.plist and current privacy settings, particularly the lists in the Privacy pane. What’s more, it doesn’t matter whether the app in question is just your own local script: TCC’s rules apply to all executable code, wherever it’s come from.

TCC behaves differently according to whether an app was built using the 10.14 Mojave SDK, or an earlier version or other build system. It also behaves differently with apps which run in a sandbox (all App Store apps and a few others), or have been hardened (generally only when notarized too).

If an app is built with the 10.14 SDK using Xcode 10, then whether or not it’s signed, it has to contain certain items in its Info.plist file if it is going to access protected areas and services. Although you can add it to the Full Disk Access list, which should ensure that TCC will let it access protected folders, only the app and its Info.plist can do the right things to gain access to the camera and microphone, for instance.

Apps which are built with earlier versions of the SDK, and other development systems, shouldn’t need to contain the same entries in their Info.plist; indeed, they shouldn’t even require an Info.plist. That said, many users attribute problems with older apps to issues with their signature or Info.plist.
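One rough way to guess which set of rules an app is likely to face is to read the SDK name which Xcode normally records in the app’s Info.plist. The sketch below assumes that the `DTSDKName` key is present and follows the usual `macosx10.14` pattern; TCC itself presumably relies on information in the executable rather than this key, so treat the result as a hint, not a verdict. The function names are my own, for illustration only.

```python
import plistlib
import re
from pathlib import Path
from typing import Optional, Tuple


def read_info_plist(app_path: str) -> dict:
    """Load Contents/Info.plist from an app bundle, or return {} if there is none."""
    plist_path = Path(app_path) / "Contents" / "Info.plist"
    if not plist_path.is_file():
        # Apps from other build systems may have no Info.plist at all.
        return {}
    with plist_path.open("rb") as handle:
        return plistlib.load(handle)


def sdk_version(info: dict) -> Optional[Tuple[int, int]]:
    """Parse (major, minor) from DTSDKName, e.g. 'macosx10.14' gives (10, 14)."""
    name = info.get("DTSDKName")
    if not isinstance(name, str):
        return None
    match = re.match(r"macosx(\d+)\.(\d+)", name)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def built_against_mojave_or_later(info: dict) -> Optional[bool]:
    """True or False when the SDK is known, None when it cannot be determined."""
    version = sdk_version(info)
    if version is None:
        return None
    return version >= (10, 14)
```

Calling `built_against_mojave_or_later(read_info_plist("/Applications/SomeApp.app"))` (a placeholder path) returns `None` for an app with no Info.plist or no SDK entry, which is itself a clue that it wasn’t built with Xcode 10.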

If you don’t fancy trying to decipher an app’s Info.plist, there are several tools which can help. Drag and drop the app onto my utility Taccy, and it will display most of the information relevant to privacy protection and TCC’s requirements.

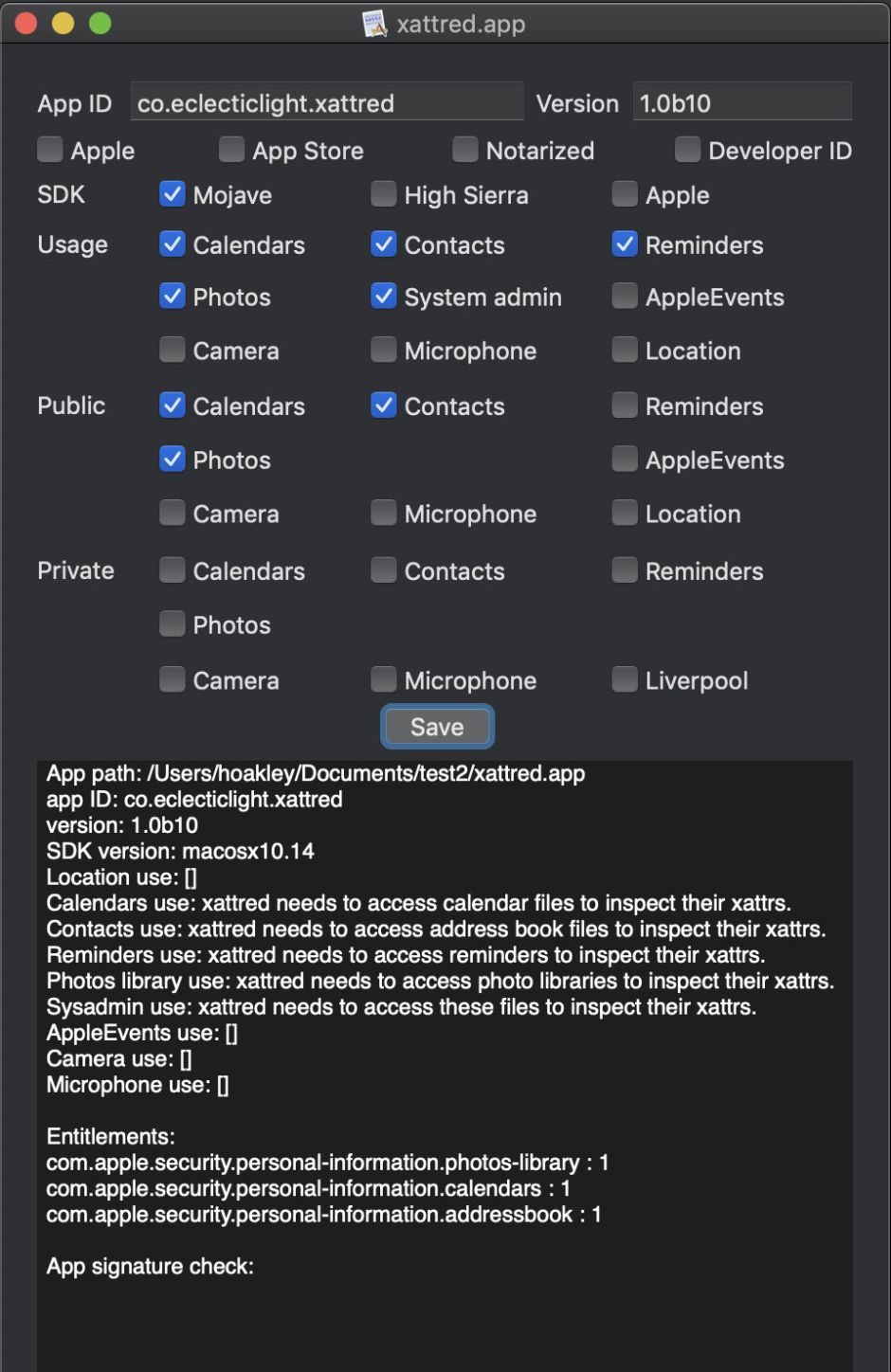

Here’s Taccy’s take on my doctored and (now) unsigned version of xattred. You’ll see at the top that it doesn’t have any form of signature or notarization, not even using a Developer ID. It was, though, built using the Mojave SDK, so TCC requires it to follow the latest set of rules. It has usage declarations for five different protected resources, and claims three entitlements, for access to Calendars, Contacts, and Photos.

Look in the text view below, and you’ll see those usage declarations: these are short chunks of text which are embedded in the dialog which TCC displays when seeking user consent to grant access to those protected areas. If those are omitted from an app built against 10.14, TCC won’t let it access those areas, and if the app tries to, it may be terminated.
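If you’d rather pull those usage declarations out yourself, a few lines of Python will do it. This is a minimal sketch which assumes that every usage declaration is a string whose key ends in `UsageDescription` (as with `NSCameraUsageDescription` and `NSMicrophoneUsageDescription`); the function names are hypothetical, and it reports only what the Info.plist declares, not what TCC will actually decide.

```python
import plistlib
from typing import Dict, Iterable, List


def usage_declarations(info: dict) -> Dict[str, str]:
    """Return every string entry whose key ends in 'UsageDescription', sorted by key."""
    return {
        key: value
        for key, value in sorted(info.items())
        if key.endswith("UsageDescription") and isinstance(value, str)
    }


def missing_declarations(info: dict, needed: Iterable[str]) -> List[str]:
    """List the keys in 'needed' which are absent or empty in the Info.plist."""
    declared = usage_declarations(info)
    return [key for key in needed if not declared.get(key, "").strip()]


def load_plist_bytes(data: bytes) -> dict:
    """Parse an Info.plist (XML or binary) which has already been read into memory."""
    return plistlib.loads(data)
```

For example, read the file with `open(path, "rb").read()`, pass the bytes to `load_plist_bytes`, then ask `missing_declarations(info, ["NSCameraUsageDescription"])` to see whether an app which wants the camera has said why.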

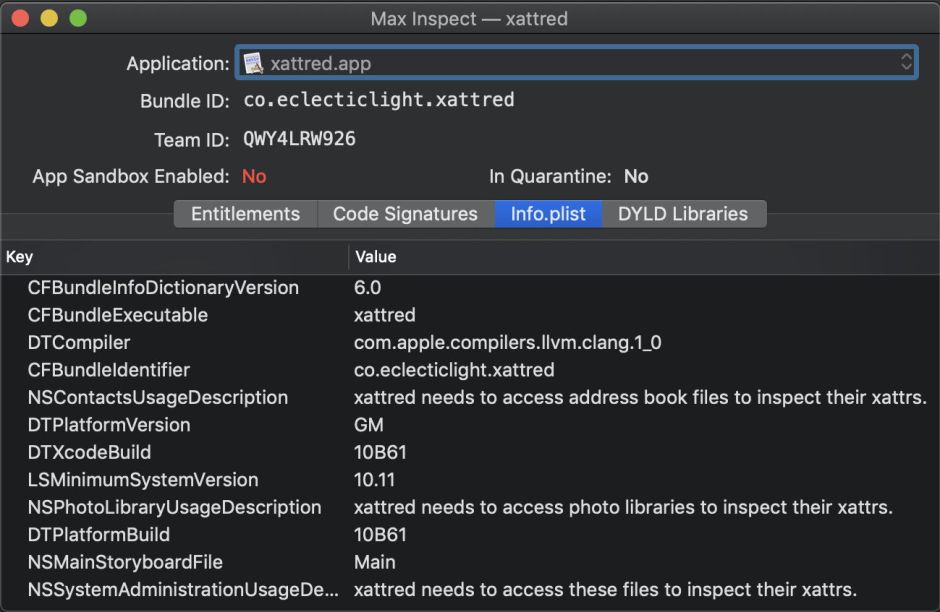

If you want to go even further, use a tool such as Max Inspect from the App Store. This lists separately the app’s entitlements, code signatures (which it doesn’t validate), and the contents of the Info.plist file.

As far as TCC’s protected folders and services go, all this should be reasonably straightforward. Things get more complex when it comes to command tools, and apps which control other apps, usually through scripts.

TCC expects to be able to use a GUI to display dialogs, but shell commands and scripts don’t normally have any such human interface. To address this, TCC constructs an attribution chain, which hopefully leads it to an app like Terminal. It is then Terminal which hosts the dialogs to grant access to protected resources, and Terminal which is added to the appropriate list in the Privacy pane.

Problems come when there isn’t an obvious app at the head of the attribution chain. Sometimes you can add the command tool itself to the Full Disk Access list, but occasionally it’s not clear what you should do. A quick glance at TCC’s messages in the log normally reveals what it considers to be in the attribution chain, which you can then add to the Full Disk Access list and move on.
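To take that quick glance at the log, something like the following should work. It is only a sketch: it assumes that TCC writes its messages under the subsystem `com.apple.TCC`, and that the standard `log show` command is available, which is only the case on macOS. The function names, and any search term you pass in to pick out attribution chain entries, are my own guesses, not anything documented by Apple.

```python
import shutil
import subprocess
from typing import List

# Assumption: TCC's log entries are written under this subsystem.
TCC_SUBSYSTEM = "com.apple.TCC"


def tcc_log_command(minutes: int = 5) -> List[str]:
    """Build a 'log show' command covering TCC messages from the last few minutes."""
    if minutes < 1:
        raise ValueError("minutes must be at least 1")
    predicate = f'subsystem == "{TCC_SUBSYSTEM}"'
    return ["log", "show", "--predicate", predicate, "--last", f"{minutes}m", "--info"]


def recent_tcc_messages(minutes: int = 5) -> str:
    """Run the command and return its output; only works where 'log' is installed."""
    if shutil.which("log") is None:
        raise RuntimeError("the 'log' command was not found; this needs macOS")
    result = subprocess.run(
        tcc_log_command(minutes), capture_output=True, text=True, check=True
    )
    return result.stdout


def lines_mentioning(text: str, needle: str) -> List[str]:
    """Return the lines of log output containing 'needle', ignoring case."""
    lowered = needle.lower()
    return [line for line in text.splitlines() if lowered in line.lower()]
```

Run the command or script which failed, then call `lines_mentioning(recent_tcc_messages(2), "attribution")` straight afterwards; whatever TCC names there is the candidate to add to the Full Disk Access list.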

Controlling other apps, typically using scripting, has inevitably become very complex, and it’s here that TCC’s biggest problems remain. Each connection needs to be agreed by the user: there doesn’t seem to be any way for an app or script to obtain block pre-authorisation. Two of the best resources on the effect of these changes on users’ own scripts are on Mark Alldritt’s blog: Shane Stanley has written an article on coping with the changes as a whole, and Mark Alldritt has explained the merits of signing your own apps and applets.

Mark makes perhaps the most important point of all with regard to TCC and code signatures: there’s nothing in Mojave’s new privacy protection which requires code signatures, as far as I can see. But if you sign your app or applet using a valid Developer ID, each time that you change that app, your existing privacy settings remain valid for the new version, as TCC can track your app by its signature. When code is left unsigned, every time that you change it you’re likely to be pestered by the same succession of dialogs before it can access protected resources or services.

Unfortunately, and quite unintentionally, this is another step away from being able to write a quick and dirty script to solve a problem.