Businesses are often built on trust, and one of the most fundamental elements of that trust is the accuracy of the data on your Mac. How should you ensure that your files remain true and correct?

If it is on your computer, then it must be right. Whether we see it on the web, or dig out an old spreadsheet or other document from a few years back, we all too often place total trust in the accuracy and integrity of computer data.

Yet many of us have had experiences, sometimes quite spooky, where we are sure that what we last saved is not what we see now, as if someone had tampered with the data. In business, this can be critical, with everything resting on the correct location of a decimal point, for example.

The requirement

In the UK, you have a legal obligation, under the Data Protection Act 1998, to ensure that all personal or sensitive data remain intact and correct, and do not contain errors. There are similar obligations in other European States, in North America, Australia, and many other administrations across the globe.

Inaccuracies and corrupt records are also unacceptable in accounts and in tax records, whether for companies, individuals, or VAT purposes. They bring disaster in court proceedings, contracts, financial transactions, and almost anything else of importance to your business. The long and the short of it is that documents, records, and data must be kept correct at all times.

Risks and solutions

The problem is simplest when dealing with a document that is created once, and merely has to be maintained in that pristine state. What you then need is a record of its creation, and robustly-stored copies that are demonstrably the same as that original. For everyday purposes, this is achieved using the time and date stamp associated with the document, but this is prone to failure and spoofing.

Most forms of digital storage are vulnerable to very infrequent errors. Studies at labs like CERN, which store massive amounts of data, have confirmed that individual bits become altered as data are copied repeatedly.

This is hardly a worry if you have a few gigabytes of important files that are copied only once or twice a year, but the more data you have, and the more frequently they are moved around or copied, the more likely they are to succumb to ‘bit rot’. Although semi-permanent storage media such as optical disks can be more archival than hard disks, they too are prone to the problem.

The best solution is to take a numerical fingerprint of each file, in the form of a checksum or hash key that changes when contents change.
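As a rough illustration of the idea, the sketch below records a fingerprint for every file in a folder, then reports which files no longer match when checked later. It assumes SHA-256 as the fingerprint, and the function names (`fingerprint`, `record_manifest`, `changed_files`) are invented for this example rather than taken from any particular product.

```python
import hashlib
from pathlib import Path


def fingerprint(path, chunk_size=1 << 20):
    """Return the SHA-256 digest of a file as a hex string."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in chunks so that large files need not fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def record_manifest(folder):
    """Map each file under folder (by relative path) to its fingerprint."""
    root = Path(folder)
    return {
        str(p.relative_to(root)): fingerprint(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def changed_files(folder, manifest):
    """List recorded files that are now missing or have different contents."""
    current = record_manifest(folder)
    return sorted(
        name for name in manifest
        if current.get(name) != manifest[name]
    )
```

The manifest is only as trustworthy as the place where it is kept, so in practice it would need to be stored separately from the files that it describes.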

This is employed by advanced file systems such as Sun’s ZFS, which uses file fingerprints at each step in the transfer of data to, from, and within file storage. It is smart enough to spot an error when reading from one disk in a mirror set, obtain a correct copy of the file from the other disk in the set, then automatically correct the corrupt copy of the file.
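The repair step can be pictured with a toy model. This is not how ZFS is implemented (it works inside the file system itself); the sketch simply assumes that each ‘disk’ is a Python dictionary and that a SHA-256 fingerprint was recorded when the data were written. The names `checksum` and `read_with_repair` are hypothetical.

```python
import hashlib


def checksum(data):
    """Fingerprint used to verify each copy (SHA-256 assumed here)."""
    return hashlib.sha256(data).hexdigest()


def read_with_repair(name, mirrors, checksums):
    """Return verified data for name, repairing any bad mirror copies.

    mirrors   -- list of dicts, each mapping name -> bytes (toy 'disks')
    checksums -- dict mapping name -> checksum recorded at write time
    """
    expected = checksums[name]
    good = None
    for disk in mirrors:
        data = disk.get(name)
        if data is not None and checksum(data) == expected:
            good = data
            break
    if good is None:
        raise IOError(f"no intact copy of {name!r} on any mirror")
    # Overwrite every copy that differs from the verified one.
    for disk in mirrors:
        if disk.get(name) != good:
            disk[name] = bytes(good)
    return good
```

If one copy of a record has been damaged, a call to `read_with_repair` returns the intact version and leaves both ‘disks’ holding identical, verified data.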

Trails and logs

Data that change have more complex requirements if you are to be able to demonstrate their integrity. In this case, you need an audit trail to show who changed what and when, the sort of thing that a professionally-developed database will do automatically, and which you can see in all good Wikis, for example.

Audit trails or change logs can be operated manually in conjunction with saved and protected copies of interim versions of a document or other data, although even when kept meticulously they remain open to doubt. The best and most robust audit trails are those that are maintained automatically, and locked away read-only, features of comprehensive version control systems, professionally-written databases, and similar software that is fit for business.

No matter how good and tamper-free an audit trail is, though, it does not prevent loss of data integrity, only records when and how it occurs.
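To make the ‘who changed what and when’ idea concrete, here is a minimal sketch of an audit trail held in memory. Everything in it is an assumption made for illustration: the class and field names are invented, and each entry records SHA-256 fingerprints of the document before and after the change. A real system would also need to keep the log somewhere read-only, which this toy does not attempt.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One immutable record of a change (hypothetical layout)."""
    when: datetime
    who: str
    document: str
    before: str   # fingerprint of the contents before the change
    after: str    # fingerprint of the contents after the change


class AuditTrail:
    """Append-only list of entries; nothing here edits or deletes them."""

    def __init__(self):
        self._entries = []

    def record(self, who, document, old_data, new_data):
        entry = AuditEntry(
            when=datetime.now(timezone.utc),
            who=who,
            document=document,
            before=hashlib.sha256(old_data).hexdigest(),
            after=hashlib.sha256(new_data).hexdigest(),
        )
        self._entries.append(entry)
        return entry

    def history(self, document):
        """Return every recorded change to one document, oldest first."""
        return tuple(e for e in self._entries if e.document == document)
```

As the text says, such a trail records a loss of integrity without preventing it: the entry shows that the fingerprint changed, and who changed it, but the old contents must be kept elsewhere if they are to be recovered.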

Locks

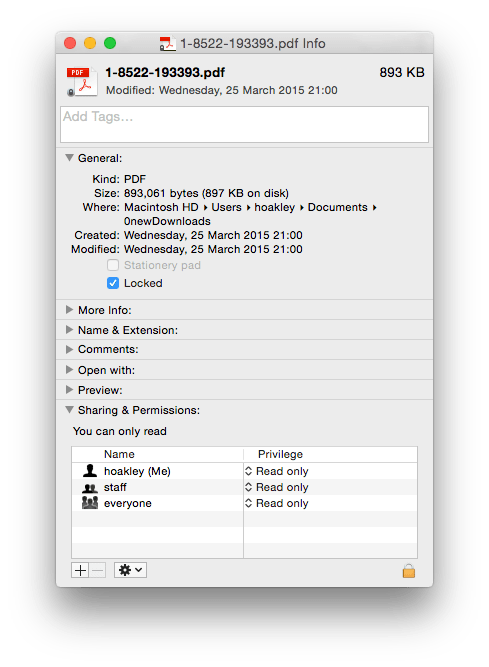

The crucial control is that over write (or change) permissions. Many professional products let you lock documents or records, so that a robust password prevents others from intentionally or inadvertently making changes.

This feature is surprisingly under-used, despite normally being tough to crack. There are password crackers available for some popular locking systems, such as Adobe Acrobat’s for PDFs and those of various applications in the Microsoft Office suite, but these are only for the most determined cracker.

Without document locking, it can be remarkably difficult to have any confidence in data integrity. It is easy when opening an unprotected document to accidentally press a key, such as the space bar. Even if the application tracks carefully whether a document has changed (and many do not, marking every opened document as ‘dirty’), many users will automatically accept the kind offer to save the changed document when it is closed. This alters the date of last modification, and calls into question its integrity.

Record locking and comprehensive audit trails are particularly important in databases containing personal or sensitive information.

There are popular packages in everyday use that permit most users not only to edit old records that they originally created, but also to alter entries made by other users. Although they keep an audit log, that log may not record what was changed, and most users are unaware of the importance of checking the audit log before accepting the fidelity of records.

Audit trails and change logs are an essential complement to the data themselves whenever those data are examined, for instance during legal proceedings; leaving them out is another common point of failure.

OS X features

OS X provides several features useful in assessing and maintaining data integrity.

These include secure user accounts (when properly used), permissions (including ACLs when deployed), time and date metadata attached to every file, and Time Machine backups for relatively recent changes to files.

Unfortunately file-system journalling is not intended for this purpose and has essentially no value here, although it helps heal minor errors in the file system. OS X Server, particularly when configured with Home folders on the server, extends these basic facilities to workgroups, but neither client nor Server editions provide automatic checking of data integrity in the way that ZFS does, nor version control or audit trails.

Most of these features in Mac OS X can be circumvented or abused if they are not used properly. Shared or weak passwords make it impossible to know who has accessed documents, and should never be tolerated in any business or organisation. Permissions need to be used with discipline: putting unprotected copies of key documents in shared file areas is a sure way to lose integrity, as is poor moderation of group membership.

Backup and security policies must be pitched appropriately to help users respect integrity (and the processes that ensure it), not to drive them to find dangerous workarounds because policies are over-restrictive. Make users change 12-character randomised passwords every week and they will be forced to scribble them on sticky notes.

Nightly backups are generally good, but if you discard them after a week you will be unable to retrieve an original document that went missing a month ago.

Preserving copies of all key projects and documents on archival media is excellent, but if you do not transfer them to newer formats before losing access to older ones (such as magneto-optical and optical disks, and tape), your archives will be effectively inaccessible.

Preservation of data integrity is an essential consideration in everything that you do in your business, but once robust systems are established, they should be easy and cheap to maintain.

Technique: Checksums and Hash Keys

These are relatively simple techniques that are widely used to verify that the data stored in a file or record are intact.

Checksums are simpler, and computationally quicker and cheaper, but there is a small chance that they will fail to detect a change, and they can be deliberately cheated. An easy way of using checksums to validate Mac files is to put them in disk images and log their CRC32 checksums using Disk Utility.

The idea is to use modulo arithmetic and similarly quick operations to add together the numeric values of all the bytes in the data: 150 + 50 = 200 (decimal), but 150 + 110 = 260, which wraps round to 5 (decimal, mod 255). This ensures that the sum does not grow too large, but remains a fixed and convenient size.
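A minimal sketch of that arithmetic, using the same modulus of 255 as the worked figures (the function name `additive_checksum` is invented for this example):

```python
def additive_checksum(data, modulus=255):
    """Sum the byte values of data, wrapping round at modulus."""
    total = 0
    for byte in data:
        total = (total + byte) % modulus
    return total


print(additive_checksum(bytes([150, 50])))   # 200
print(additive_checksum(bytes([150, 110])))  # 5
```

With a modulus of 256, which exactly fills one byte, the second sum would come out as 4 instead.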

The snag is that a random alteration to the data (as might occur in ‘bit rot’) has a small chance of leaving the checksum unchanged, allowing the error to pass unnoticed. Certain types of checksum, such as the CRC32 technique, have become very widely used, but are known to be surprisingly weak in practice, and vulnerable to deliberate manipulation.
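The weakness is easiest to see in the simplest kind of checksum. In this sketch, a plain additive checksum (an assumed, deliberately naive scheme) cannot tell that a decimal point has moved, because the same bytes are present in a different order. The CRC32 from Python’s standard `zlib` module does notice this particular change, although at only 32 bits it offers no protection against someone constructing a matching file on purpose.

```python
import zlib


def additive_checksum(data, modulus=255):
    """Naive checksum: the sum of all byte values, wrapped at modulus."""
    total = 0
    for byte in data:
        total = (total + byte) % modulus
    return total


original = b"1234.50"
altered = b"123.450"   # same bytes, but the decimal point has moved

# The additive checksum ignores byte order, so the change goes unseen.
print(additive_checksum(original) == additive_checksum(altered))  # True

# CRC32 depends on position as well as value, so it catches this one.
print(zlib.crc32(original) == zlib.crc32(altered))                # False
```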

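Hash keys, most popularly the SHA-1 and SHA-2 forms, are more complex to understand and compute, and require more storage, but have almost no chance of failing by accident. SHA-2 also resists deliberate cheating, whereas SHA-1 is now considered too weak to rely on for that purpose.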

In essence, they use a mixture of mathematical functions to compress all the bytes in the data down to a single, very large number. Each time that they are applied to the same data, they generate exactly the same result, but you cannot of course convert a hash key back into its original data.
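These properties can be seen with the `hashlib` module in Python’s standard library. The sketch assumes SHA-256 (one of the SHA-2 family), and `hash_key` is just a convenience name chosen for this example.

```python
import hashlib


def hash_key(data):
    """Return the SHA-256 hash key of some bytes as 64 hex characters."""
    return hashlib.sha256(data).hexdigest()


first = hash_key(b"Invoice total: 1234.50")
again = hash_key(b"Invoice total: 1234.50")
moved = hash_key(b"Invoice total: 123.450")
large = hash_key(b"x" * 1_000_000)

print(first == again)          # True: same data, same key
print(first == moved)          # False: the decimal point has moved
print(len(first), len(large))  # 64 64: the key is always the same size
```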

When Apple and many other vendors release downloadable updates, they usually provide SHA-1 keys for the update files, so that you can check that each file that you have downloaded is what it claims to be, and has neither been tampered with nor corrupted along the line. The App Store is full of utilities to compute hash keys and checksums, such as Hash (free).
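Checking a download against a published key might look something like the sketch below. The function name and its parameters are invented for illustration; the default of SHA-1 simply follows the text, and any other algorithm that `hashlib` supports, such as `"sha256"`, can be named instead.

```python
import hashlib


def matches_published_key(path, published_key, algorithm="sha1"):
    """Return True if the file's hash key equals the one published."""
    digest = hashlib.new(algorithm)
    with open(path, "rb") as handle:
        # Hash the file a chunk at a time, so large updates are no problem.
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    # Published keys may differ in case or carry stray whitespace.
    return digest.hexdigest() == published_key.strip().lower()
```

The check is only meaningful if the published key comes from a trustworthy source, ideally not the same server that supplied the file.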

Checksums are extremely common in many different systems as a check on data integrity. They are used in hard disk storage (it is sometimes estimated that drives spend up to 25% of their active time checking data integrity), in network communications, particularly wireless, and much more.

Technique: Version Control

Version control systems (VCS) function as document databases. When you want to add a document to your system, it is locked away in the VCS database, which might be implemented using MySQL or another popular heavyweight database server. This controls access to the document, with specified users being able to check the document out, modify it, and check it back into the database.

Depending on the VCS and its configuration, it may store entire copies of every version of a controlled document when they were checked in, or it may examine each new version and store just the changes made to the document (known as the ‘delta’, from the Greek letter used to denote differences in maths).
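The ‘delta’ approach can be sketched with Python’s standard `difflib` module. This is not the storage format of any real VCS, only an assumed scheme for illustration: a new version is described as a list of instructions, either to copy a run of lines from the old version or to add new lines. The names `make_delta` and `apply_delta` are hypothetical.

```python
from difflib import SequenceMatcher


def make_delta(old_lines, new_lines):
    """Describe new_lines as copies from old_lines plus added lines."""
    delta = []
    matcher = SequenceMatcher(a=old_lines, b=new_lines, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            delta.append(("copy", i1, i2))           # reuse old lines i1..i2
        elif tag in ("replace", "insert"):
            delta.append(("add", new_lines[j1:j2]))  # store only new text
        # "delete" needs no entry: those old lines are simply not copied.
    return delta


def apply_delta(old_lines, delta):
    """Rebuild the new version from the old version and its delta."""
    new_lines = []
    for op in delta:
        if op[0] == "copy":
            new_lines.extend(old_lines[op[1]:op[2]])
        else:
            new_lines.extend(op[1])
    return new_lines
```

For a long text document in which only a line or two has changed, the delta holds just those lines and a few index pairs, which is where the saving in storage comes from.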

The idea is simple: at any moment you can examine the document at every stage during its lifetime, to discover what its state was in the past, and details of previous and subsequent changes made to it.

VCS work best with many relatively small, plain text documents, and are widely used in software development, where they allow programmers to track changes and locate bugs, and the like.

They are less often used with large or binary documents, where they can quickly make unreasonable demands on storage. This is compounded by typical binary file formats, which are seldom amenable to the economies of the ‘delta’ option. For instance, if you save two revisions of a 100 MB file each working day, not using deltas, you will need an extra 1 GB of database storage a week, and that will propagate through every backup made too.
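The arithmetic behind that figure is easy to check. The sketch assumes a five-day working week and decimal units (1 GB = 1000 MB), and the function name is invented for this example.

```python
def weekly_growth_mb(file_size_mb, revisions_per_day, working_days=5):
    """Extra storage per week when every revision is kept in full."""
    return file_size_mb * revisions_per_day * working_days


print(weekly_growth_mb(100, 2))  # 1000, in MB, which is 1 GB
```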

VCS databases that store their documents in a monolithic file can likewise grow to the point where they take over entire storage systems. A versatile VCS therefore must be able to archive out inactive documents and then reclaim space in its database.

Further details of VCS in the management of text (and related) documents are covered in this article.

Updated from the original, which was first published in MacUser volume 27 issue 11, 2011.