RAID arrays used to be the preserve of serious servers and high-end workstations. Should they now be on your shopping list?

Redundant Arrays of Independent (originally Inexpensive) Disks – RAID systems – were a good solution for a bygone age. Devised in the 1980s when disk capacities were measured in megabytes not terabytes, and access times were glacially slow compared to those of even cheap consumer drives now, they were deservedly popular in most servers and specialist systems.

Traditional RAID

The basic idea in a RAID system is to harness two or more disks together and use them in concert, to speed up disk access, increase storage reliability, or any balance of those in combination. In those days, if you had to access a database that was 25 MB (huge for the time), you might set up six 10 MB hard disks as a mirrored pair of three-disk sets.

Within those three disks, your data would be striped across the surfaces of the disks to accelerate access. The whole assembly would have been cheaper than a single 30 MB drive of comparable performance, and maintaining two copies of the contents would have prepared you for the inevitably frequent hard disk errors.

New RAID

With wickedly quick drives of 1 terabyte and larger readily available, fatal disk errors a relative rarity, and the rise of Cloud storage, you might assume that RAID was now no more than a historical curiosity. However, we tend to push hardware to its performance limits: many more users now work with media files in the tens or hundreds of gigabytes that have to be stored locally, and do not want to spend seconds waiting for them to load or save.

Two recent hardware advances have increased the potential gains offered by RAID. For those seeking extreme performance, SSDs are an excellent solution, but still seriously expensive in larger capacities. Intel’s Thunderbolt external bus has also brought a step improvement in data transfer rates with external storage, to the point where disk access speed is once again the bottleneck in performance.

SSDs are sufficiently affordable as to be offered as standard in the new MacBook, MacBook Air and MacBook Pro models, and are increasingly popular as startup and scratch storage disks for demanding use, including servers. At present they become disproportionately costly in capacities greater than about 512 GB, so assembling several smaller SSDs into a larger logical disk can be cost effective. By striping your data across several SSDs you can also achieve even greater performance.

Early experience with Thunderbolt external storage is that it does deliver the greatly enhanced performance that is promised. However data transfer over the Thunderbolt bus is now quicker than can be supported by reasonably-priced hard disks.

Such hard disks typically connect over SATA, with a theoretical maximum transfer rate of 3 Gb/s, or in some cases 6 Gb/s, which is a good match for eSATA at 3 Gb/s or USB 3 at 5 Gb/s. But currently a single SATA drive cannot exploit Thunderbolt’s 10 Gb/s to the full. In this respect Thunderbolt delivers performance similar to the best Fibre Channel operating over fibre-optic cable, which is usually matched with RAID arrays.
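To put rough numbers on that mismatch, here is a minimal sketch in Python that works out how many drives would have to be striped together before their combined nominal interface rates reach Thunderbolt's quoted 10 Gb/s. The function name `links_needed` is illustrative only, and the calculation assumes the theoretical rates given above; real drives sustain rather less than their interface maximum, so in practice you would probably need more.

```python
import math

THUNDERBOLT_GBPS = 10.0  # bus rate quoted in the article


def links_needed(bus_gbps: float, drive_link_gbps: float) -> int:
    """Smallest number of drive links whose combined nominal rate reaches the bus rate."""
    return math.ceil(bus_gbps / drive_link_gbps)


# Nominal SATA interface rates mentioned in the article (an upper bound, not sustained speed).
for sata_gbps in (3.0, 6.0):
    n = links_needed(THUNDERBOLT_GBPS, sata_gbps)
    print(f"{sata_gbps:g} Gb/s drives: at least {n} in a stripe to fill the bus")
```

On those assumptions, two of the faster drives or four of the slower ones are the bare minimum, which is why Thunderbolt enclosures are a natural home for RAID sets.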

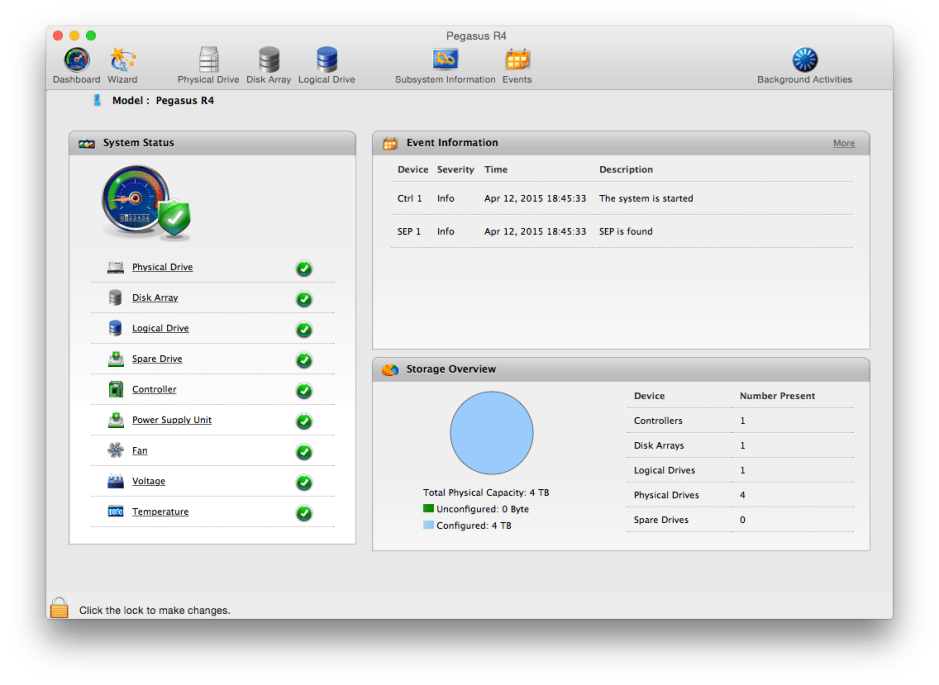

In use

As far as your Mac is concerned, a RAID system is like any other storage: apps write to a single logical device, and read from that, without any concern over how many drives the data may be stored on. The RAID controller or software takes a single stream of data being written and distributes it to two or more hard disks forming the RAID set.

When reading data from the disks, the controller should read from all the drives required. If there are drives containing duplicate copies, whichever copy arrives first at the controller is sent over Thunderbolt to your Mac, ensuring optimum speed.

RAID levels

RAID distributes data across the drives in a set using three different schemes. It can be spread across two or more disks in stripes, so that each disk only contains a portion of the data. When the size of data in each stripe is carefully matched to the capabilities of each disk, this can accelerate both read and write performance. However in its basic form, known as RAID level 0, loss of any disk in the striped set renders the data on the remaining disks unusable: that is a fatal error.
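Striping is easier to picture with a toy model. The sketch below, in Python, deals fixed-size chunks of a byte string round-robin onto a number of in-memory "disks" and then reassembles them. The names `stripe` and `unstripe`, and the tiny stripe size, are purely illustrative; a real controller works on disk blocks and does far more.

```python
def stripe(data: bytes, n_disks: int, stripe_size: int) -> list[bytes]:
    """Deal successive stripe_size chunks of data round-robin across n_disks."""
    disks = [bytearray() for _ in range(n_disks)]
    for start in range(0, len(data), stripe_size):
        chunk_index = start // stripe_size
        disks[chunk_index % n_disks] += data[start:start + stripe_size]
    return [bytes(d) for d in disks]


def unstripe(disks: list[bytes], stripe_size: int, total_len: int) -> bytes:
    """Reassemble the original data; needs every disk in the set to be present."""
    out = bytearray()
    offsets = [0] * len(disks)
    chunk_index = 0
    while len(out) < total_len:
        d = chunk_index % len(disks)
        out += disks[d][offsets[d]:offsets[d] + stripe_size]
        offsets[d] += stripe_size
        chunk_index += 1
    return bytes(out)


original = b"ABCDEFGHIJ"
disks = stripe(original, n_disks=3, stripe_size=2)
restored = unstripe(disks, stripe_size=2, total_len=len(original))
```

Each "disk" ends up holding only every third chunk, so the contents of any one disk are useless without the others, which is exactly why losing a single member of a level 0 set is fatal.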

The second storage scheme used in RAID is to duplicate the contents of one drive to another by mirroring, RAID level 1. Each disk in the set, normally just a pair, then holds identical contents, so that single disk failure does not lose any data. Implemented in hardware, this does not alter performance, but should increase the resilience of storage.

You can thus compensate for the increased risk of data loss from basic striping (level 0) by combining it with mirroring. Strictly, mirroring the contents of one striped set onto another is RAID level 0+1, whereas RAID level 10 (1+0) stripes data across mirrored pairs; both are loosely described as ‘mirroring plus striping’. The combination is widespread, simple and effective, but not as efficient as some other levels.
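What 'efficient' means here is how much of the raw disk space you actually get to use. The following Python sketch applies the usual textbook capacity formulas for a set of identical disks; the function name `usable_capacity` is an illustrative choice, and level 5, which appears in it for comparison, is one of the parity-based levels described below.

```python
def usable_capacity(level: str, n_disks: int, disk_size: float) -> float:
    """Usable space for n_disks identical disks, using the standard textbook formulas."""
    if level == "0":    # striping only: all the space, no redundancy
        return n_disks * disk_size
    if level == "1":    # mirroring: every disk holds the same contents
        return disk_size
    if level == "10":   # striping plus mirroring: half the raw space
        if n_disks < 4 or n_disks % 2:
            raise ValueError("this sketch expects an even number of disks, at least four")
        return n_disks * disk_size / 2
    if level == "5":    # single parity: one disk's worth of space goes on parity
        if n_disks < 3:
            raise ValueError("level 5 needs at least three disks")
        return (n_disks - 1) * disk_size
    raise ValueError(f"level {level!r} is not covered by this sketch")


# The 1980s example from earlier: six 10 MB disks.
for level in ("0", "10", "5"):
    print(level, usable_capacity(level, 6, 10), "MB")
```

With six 10 MB disks, striping plus mirroring yields 30 MB of usable space, where level 5 would offer 50 MB while still surviving the loss of one disk.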

The third storage scheme uses various checks for data integrity coupled with stripe design, to make a striped set more robust in the face of single disk failure; these include RAID levels 2 to 6. For instance, level 5 requires at least three disks in the RAID set, and uses parity checking and ingenious design so that all your stored data are preserved if one of the drives in the set fails, while the disks still work together more quickly than a single drive.
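The parity trick behind level 5 can be shown in a few lines of Python. This is a deliberately simplified sketch: it handles a single stripe on two data disks plus one parity block, uses XOR as the parity function, and ignores the way a real level 5 set rotates parity around all its disks. The helper name `xor_blocks` and the sample data are made up for illustration.

```python
from functools import reduce


def xor_blocks(blocks: list[bytes]) -> bytes:
    """Byte-wise XOR of equal-length blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# One stripe, held on two data disks, with its parity on a third.
data_blocks = [b"MACU", b"SER!"]
parity = xor_blocks(data_blocks)

# Suppose the first data disk fails: XOR the survivors with the parity block.
rebuilt = xor_blocks([data_blocks[1], parity])
print(rebuilt)
```

Because XOR is its own inverse, combining the parity block with whatever data blocks survive regenerates the missing one. The same arithmetic shows why two simultaneous failures are unrecoverable: there is then more than one unknown in the equation.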

Reliability

An important design principle to observe when building any RAID system is to avoid using hard disks from the same manufacturing batch. Studies of massive collections of hard-worked disks in data centres have shown that hard disks from the same batch tend to fail at the same time. Whichever level of RAID you choose, near-simultaneous failure of two or more disks is very likely to break a set and lose its contents.

If you can, opt for an enclosure that supports hot-swapping of disks; otherwise you will have to shut the whole system down and open it up when a drive fails.

One neat way of generating complete backups is to configure a mirror (level 1) and to repeatedly swap out one half of that mirror to store off-site or in another safe location. At the end of each working day, prepare that half of the mirror for transport, then pop the drive(s) and replace them with fresh ones. Overnight the RAID system will rebuild the mirror, ready for use the next day.

Better RAID systems also allow you to keep spare, unused drives in the enclosure ready to bring into use in the event of failure of a drive within a set that is in use. Standby drives can minimise the time required to recover your data store when there is a hardware failure.

However that is particularly dependent on the RAID system monitoring drives for incipient failure, and alerting you in the event of S.M.A.R.T. or other indicators crossing set thresholds. As drives often do not give any early warning of failure, you will also need to be prepared to swap a standby in without notice.

RAID systems are enjoying renewed popularity, even in smaller workgroups and for single users. Once again the performance, resilience, and economy that they offer are proving worthwhile.

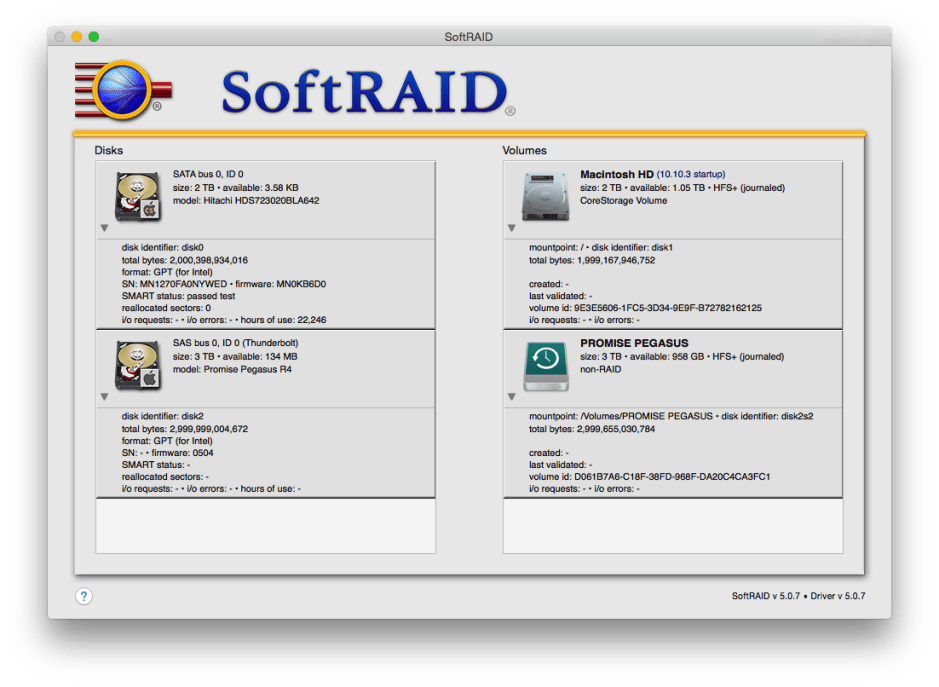

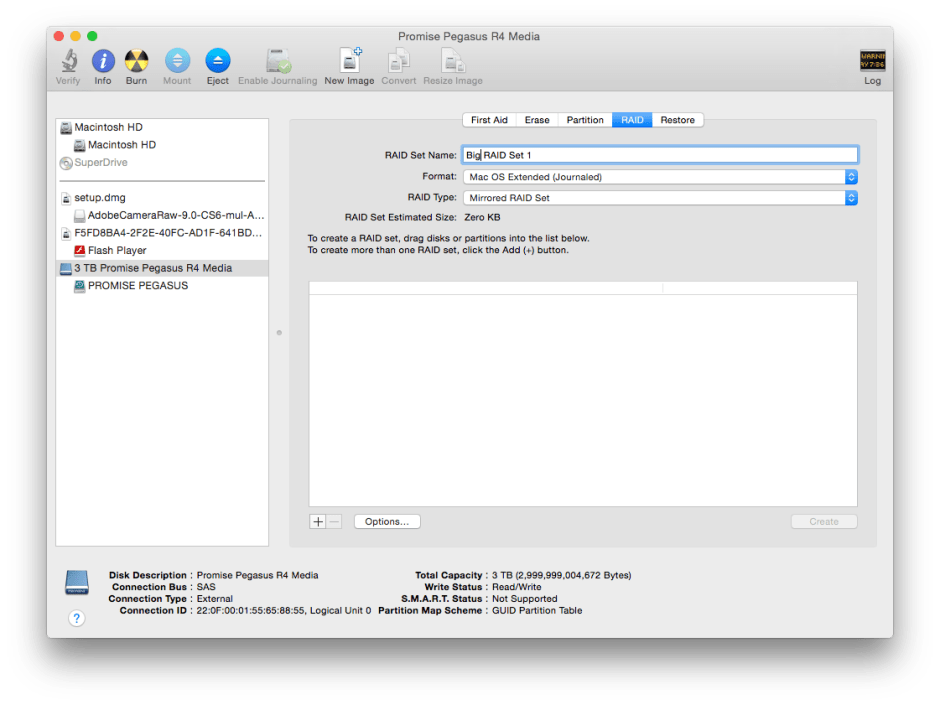

Tools: Software RAID

If you can implement RAID using a dedicated hardware RAID controller, you will get best performance and greatest robustness, at greater cost. The cheapest way of building a RAID system is to buy a regular multi-drive enclosure and implement its control in software, either using the support built into OS X and Disk Utility, or a dedicated third-party product such as SoftRAID.

Software RAID systems interpose an additional step between calls to write to or read from a disk, and the storage system, placing greater load on your Mac and the bus connecting it to the RAID set. For example, if an application wants to write to a software mirror set, the single call to write data results in the data being sent separately from your Mac to each disk in the set.
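To make that extra bus load concrete, here is a small Python model that simply counts the bytes crossing the link between your Mac and the enclosure. Everything in it is hypothetical: `CountingBus` stands in for the connection, and the hardware case assumes that a controller inside the enclosure receives the data once and duplicates it internally.

```python
class CountingBus:
    """Hypothetical stand-in for the Mac-to-enclosure link; it only counts bytes sent."""

    def __init__(self) -> None:
        self.bytes_sent = 0

    def send(self, payload: bytes) -> None:
        self.bytes_sent += len(payload)


def software_mirror_write(bus: CountingBus, payload: bytes, n_disks: int) -> None:
    """Software RAID: the Mac sends a separate copy to each disk in the mirror."""
    for _ in range(n_disks):
        bus.send(payload)


def hardware_mirror_write(bus: CountingBus, payload: bytes) -> None:
    """Assumed hardware RAID: one copy crosses the bus, and the controller duplicates it."""
    bus.send(payload)


payload = bytes(1000)
soft_bus, hard_bus = CountingBus(), CountingBus()
software_mirror_write(soft_bus, payload, n_disks=2)
hardware_mirror_write(hard_bus, payload)
print(soft_bus.bytes_sent, hard_bus.bytes_sent)
```

In this model a two-disk software mirror doubles the traffic for every write, which is why the bandwidth of the bus matters so much more for software RAID.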

Because of Thunderbolt’s greater bandwidth, it is able to cope much better with this than USB 2, FireWire 800, or even eSATA. However you are still likely to notice the difference in performance between software RAID and that provided by a good firmware implementation.

The RAID software is itself vulnerable to bugs and crashes, which are far less probable in good firmware RAID systems. A few users of AppleRAID and of SoftRAID report having suffered reliability problems, although others appear to have kept software RAID systems running faultlessly for long periods. Some of the problems claimed with SoftRAID may result from conflicts with the system-level driver that it relies on, or perhaps failure to keep that driver up to date.

Before betting your business or its crucial data on any RAID system, particularly one implemented in software, you should build confidence that it will prove reliable in your specific application, with both your Macs and the hard drives that they will control.

Technology: ZFS – the Mac file system that almost was

RAID builds multiple physical disks into single logical drive units. This suits the Mac Extended File System (HFS+), which likes to map between the physical and logical in simple ways: you can of course partition a single physical disk up into several logical volumes.

ZFS is Sun’s thoroughly modern file system that gives complete flexibility in mapping logical storage units to physical ones. You can throw it a bunch of disks in different enclosures, spanning volumes across multiple disks at your will. Apple looked seriously at offering built-in support for ZFS in OS X, but has so far refrained from doing so in any general release, although developer previews have offered experimental ZFS support.

Recently one of Apple’s main advocates of ZFS, Don Brady, founded Ten’s Complement with the aim of bringing a commercial implementation of ZFS (ZEVO) to the Mac market. This was acquired by GreenBytes, who released the free ZEVO Community Edition, but were themselves swallowed by Oracle last year. The remaining open source implementations available are MacZFS and Open-ZFS.

Those who have used ZFS with Sun and other systems sing its praises, as offering total flexibility in how you deploy and utilise physical storage systems. However it has heavy costs: its guidelines recommend that your Mac has 1 GB of adaptive replacement cache (ARC) memory for every 1 TB of storage; the initial ZEVO release is limited to an ARC of 16 GB, and is thus restricted to a total of 16 TB storage.
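That rule of thumb is simple enough to turn into a back-of-envelope calculator. The Python sketch below assumes nothing beyond the 1 GB per 1 TB guideline and the 16 GB ARC limit quoted above; the function names are illustrative and are not part of any ZFS tool.

```python
GB_OF_ARC_PER_TB = 1.0  # guideline quoted in the article


def recommended_arc_gb(storage_tb: float) -> float:
    """ARC memory suggested by the guideline for a given amount of storage."""
    return storage_tb * GB_OF_ARC_PER_TB


def max_storage_tb(arc_limit_gb: float) -> float:
    """Largest amount of storage the guideline allows under a given ARC limit."""
    return arc_limit_gb / GB_OF_ARC_PER_TB


print(recommended_arc_gb(8))   # memory to set aside for an 8 TB pool
print(max_storage_tb(16))      # storage supported by a 16 GB ARC limit
```

Treat the result as a planning figure only: it tells you how much memory to budget before you buy disks, not how ZFS will behave under your particular workload.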

Configuring and maintaining ZFS systems is also notoriously complex, because of its great power. RAID – known as Vdev and RAIDZ – can be spread across physical disks in a novel way, for instance. If you want to get a flavour of the issues involved, ZFS Build makes harrowing reading. The free implementations of MacZFS and Open-ZFS are run from the command line, and lack facile graphical tools.

Updated from the original, which was first published in MacUser volume 28 issue 22, 2012.